Why autonomous AI agents for news need a maintenance plan

Demos are easy. Shipping autonomous AI agents for news is hard, and most teams fail at it. A production system must process 200,000 articles with 94% accuracy, and it must do so without hallucinating and harming your brand. In my recent talks with Series B CTOs, I have seen a massive reliability gap. They have built impressive prototypes, but when those prototypes hit production volume, the systems crumble. They start seeing context bleeding, hallucinations, and a complete breakdown in classification logic.

At Islands, we faced this challenge head-on when building the autonomous news agent for Goodable. We were not interested in building a simple chat interface. We built a high-volume news engine that handles massive datasets autonomously. To do this, we had to move beyond simple LLM prompts to a specialized orchestration layer. This is the difference between a toy and a production-grade system. As I analyzed in Islands 2026, task-specialized agents solve the scaling problems that single general-purpose models cannot.

The maintenance tax is mandatory

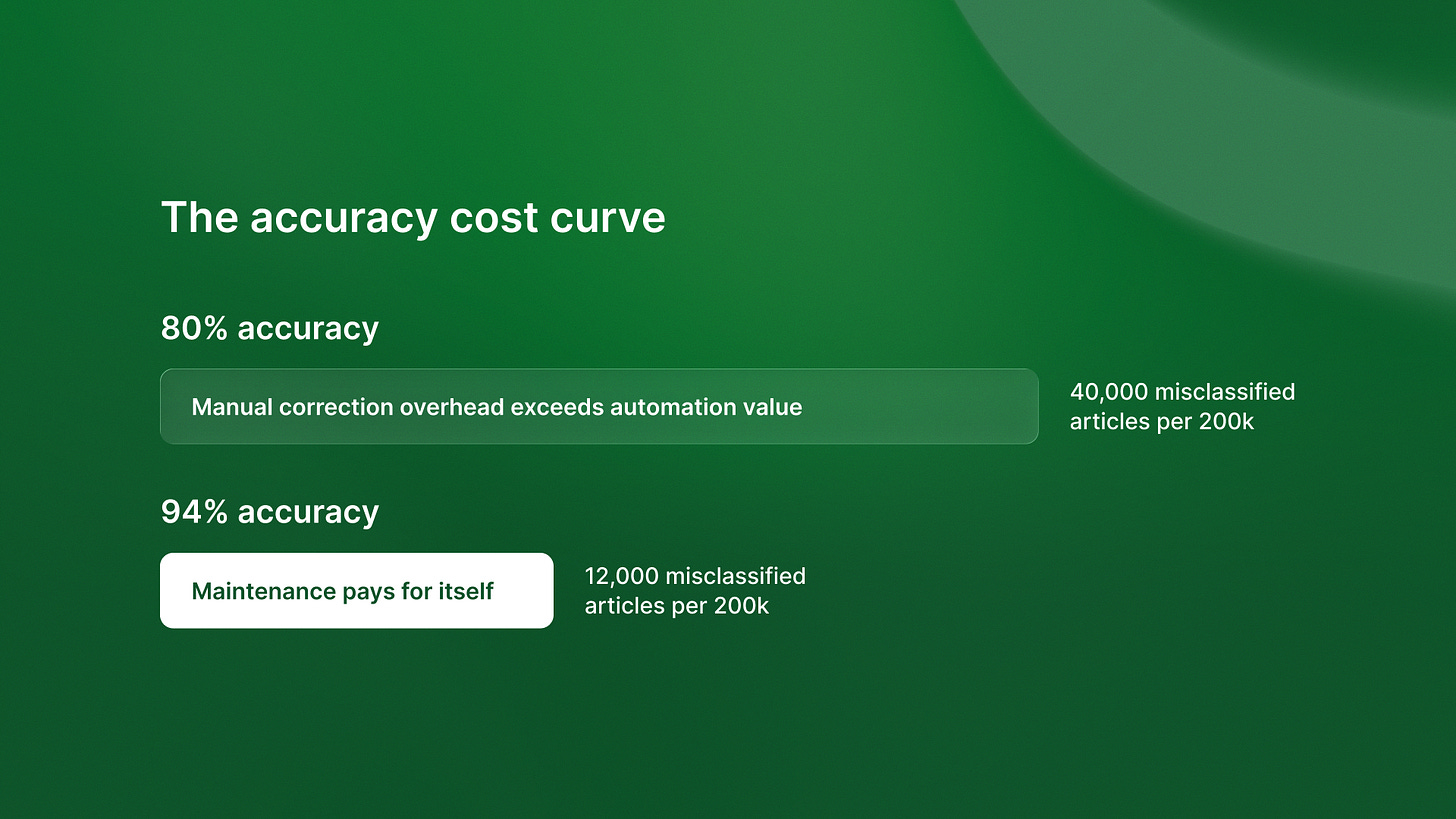

Production-ready autonomous systems require rigorous AI agent maintenance plans. Many engineering leaders assume that once an agent is trained, it stays performant forever. That is a dangerous mistake. In high-volume environments, data drift and model updates can quickly degrade accuracy. We found that maintaining 94% accuracy is an economic decision, not a technical one. High-volume data environments demand automated classification, and minimizing hallucination risk at that stage is what prevents downstream cost spikes.
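To make the drift point concrete, here is a minimal sketch of one way to watch for degrading accuracy. It assumes a human audits a small sample of the agent's classifications, and it flags the system once rolling accuracy falls below the 94% target. The `AccuracyMonitor` class, the window size, and the label names are hypothetical illustrations, not a description of the Goodable system.

```python
from collections import deque


class AccuracyMonitor:
    """Tracks accuracy over the most recent audited predictions (hypothetical helper)."""

    def __init__(self, target: float = 0.94, window: int = 500, min_samples: int = 50):
        self.target = target
        self.min_samples = min_samples
        # Each entry is True when the prediction matched the audited label.
        # The deque drops the oldest entry once the window is full.
        self.results = deque(maxlen=window)

    def record(self, predicted: str, audited: str) -> None:
        self.results.append(predicted == audited)

    def accuracy(self) -> float:
        if not self.results:
            return 1.0  # no evidence yet, so nothing to flag
        return sum(self.results) / len(self.results)

    def needs_attention(self) -> bool:
        # Only raise the flag once there are enough samples to trust the number.
        return len(self.results) >= self.min_samples and self.accuracy() < self.target


# Simulated audit: 90 correct and 10 incorrect classifications.
monitor = AccuracyMonitor(target=0.94, window=100, min_samples=20)
for _ in range(90):
    monitor.record("positive", "positive")
for _ in range(10):
    monitor.record("positive", "neutral")

print(monitor.accuracy(), monitor.needs_attention())
```

In this simulated run the rolling accuracy is 90%, below the target, so the monitor reports that the agent needs attention. In practice the flag might trigger a prompt review, a retraining job, or a rollback to an earlier model version.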

Multi-agent systems require significant infrastructure work, and I have found that most teams underestimate it or skip it entirely. Without this foundation, you are just building a fragile wrapper. Islands 2026 shows that production-ready AI agents need ongoing updates. They also need state management to stay fast over time. This architectural discipline separates sustainable systems from those that fail within months. Shoreline 2026 highlights a similar pattern in hiring: without structured frameworks, you cannot distinguish signal from noise.

Building autonomous AI agents for news with high accuracy

To achieve 90%-plus accuracy at scale, we follow a specific Goodable AI architecture:

State management: Use persistent storage like PostgreSQL to track the context and history of every interaction.

Validation checkpoints: Implement automated checks at every step of the workflow to catch hallucinations before they reach the user.

Failure recovery protocols: Define exactly what the agent should do when it encounters an edge case it cannot handle.

Specialized model training: Use domain-specific training to improve classification logic beyond what a general-purpose model can provide.
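The first three components can be sketched together in a few lines. This is an illustration under stated assumptions, not the Goodable implementation: `classify` is a stub standing in for the real model call, the label set and confidence threshold are invented, and SQLite (from the Python standard library) stands in for PostgreSQL so the example runs on its own.

```python
import json
import sqlite3
from typing import Optional

ALLOWED_LABELS = {"positive", "neutral", "negative"}  # hypothetical label set


def classify(article: dict) -> dict:
    """Stub for the model call; a real system would call a trained classifier here."""
    text = article["text"].lower()
    if "rescued" in text:
        return {"label": "positive", "confidence": 0.97}
    if not text.strip():
        # Simulates a hallucinated, off-schema output on an edge case.
        return {"label": "uplifting", "confidence": 0.99}
    return {"label": "neutral", "confidence": 0.55}


def validate(result: dict, min_confidence: float = 0.8) -> Optional[str]:
    """Validation checkpoint: return a reason if the output must not ship, else None."""
    if result.get("label") not in ALLOWED_LABELS:
        return "label outside allowed set"
    if result.get("confidence", 0.0) < min_confidence:
        return "confidence below threshold"
    return None


def process(conn: sqlite3.Connection, article: dict) -> str:
    result = classify(article)
    reason = validate(result)
    # Failure recovery: anything that fails validation is held for review
    # instead of being published.
    status = "accepted" if reason is None else "needs_review"
    # State management: persist every decision so a run can be audited or resumed.
    conn.execute(
        "INSERT OR REPLACE INTO article_state (article_id, status, result, reason) "
        "VALUES (?, ?, ?, ?)",
        (article["id"], status, json.dumps(result), reason),
    )
    conn.commit()
    return status


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE article_state ("
    "article_id TEXT PRIMARY KEY, status TEXT, result TEXT, reason TEXT)"
)

articles = [
    {"id": "a1", "text": "Volunteers rescued forty dogs after the flood."},
    {"id": "a2", "text": "City council meets on Tuesday."},
    {"id": "a3", "text": ""},
]
statuses = [process(conn, a) for a in articles]
print(statuses)  # ['accepted', 'needs_review', 'needs_review']
```

The design choice worth noting is that the checkpoint fails closed. A low-confidence or off-schema result is recorded with its reason and held back, so the failure path is explicit rather than left to the model.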

This approach is how QA flow 2026 achieved 96% accuracy in bug detection through specialized agent training. When you treat AI as a shared layer, not a single feature, you can keep high standards as volume grows. This requires high-accuracy AI classification that doesn’t rely on manual review. Even in unrelated sectors, like beauty, Blanka 2026 shows that consumer trust is built on consistent, high-quality execution.

The economics of reliability

If your agent has an 80% accuracy rate, it is often costing you more than it saves. We explored this concept in Timecapsule 2026, where low accuracy forced manual corrections that consumed billable hours. In the news space, an 80% accuracy rate means 40,000 misclassified articles for every 200,000 processed. That is a brand disaster waiting to happen.
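The arithmetic is worth running for your own numbers. The sketch below reproduces the 40,000 figure and compares it with the 94% target. The correction time and hourly rate are placeholder assumptions chosen only to show the shape of the calculation, not measured figures.

```python
def misclassified(volume: int, accuracy_pct: int) -> int:
    """Articles expected to be wrong at a given accuracy (whole percentages)."""
    return volume * (100 - accuracy_pct) // 100


def correction_cost(volume: int, accuracy_pct: int,
                    minutes_per_fix: int, hourly_rate: int) -> float:
    """Manual clean-up cost, assuming every error is corrected by hand."""
    errors = misclassified(volume, accuracy_pct)
    return errors * minutes_per_fix * hourly_rate / 60


VOLUME = 200_000
MINUTES_PER_FIX = 2   # assumption: placeholder, not a measured value
HOURLY_RATE = 30      # assumption: placeholder, not a measured value

for pct in (80, 94):
    errors = misclassified(VOLUME, pct)
    cost = correction_cost(VOLUME, pct, MINUTES_PER_FIX, HOURLY_RATE)
    print(f"{pct}% accuracy: {errors} misclassified, correction cost {cost:.0f}")

# 80% accuracy -> 40000 misclassified
# 94% accuracy -> 12000 misclassified
```

Moving from 80% to 94% accuracy cuts the error count from 40,000 to 12,000 per 200,000 articles, and the manual correction cost falls in the same proportion under any fixed cost per fix.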

By investing in a maintenance plan, you reduce the long-term cost of ownership. You avoid the architectural trap of failing prototypes. ReachSocial has seen similar success by embedding AI into integrated workflows. This helps eliminate operational overhead while preserving strategic judgment. The goal is to build a system that works while you sleep, not one that requires a 24-hour monitoring team. Companies that ignore this often end up needing specialized GrowTal expertise to keep their content visible in AI search results.

Choosing your path

The window for building autonomous AI agents for news as a competitive advantage is closing. However, the penalty for shipping unreliable systems is higher than ever. Maintenance is not an afterthought. It is the architecture itself. If you build without a plan for state management and AI hallucination management, you are building on sand.

I encourage you to look at your current AI initiatives. Are you building a system that can handle 200,000 tasks, or are you just building a demo that looks good in a board meeting? Choose accordingly. If you need a partner who understands the unsexy realities of high-volume orchestration, let’s talk.

What is the biggest reliability issue you are facing with your AI agents? Reply and share your thoughts.

Ready to move beyond the demo phase? Build production-grade AI agents with Islands today.