How multi-agent content systems ship 3x faster

I’ve been seeing something fascinating in our portfolio. Companies using multi-agent content systems ship 3x faster than teams using single-agent tools. But 60% of multi-agent projects stall at the orchestration layer.

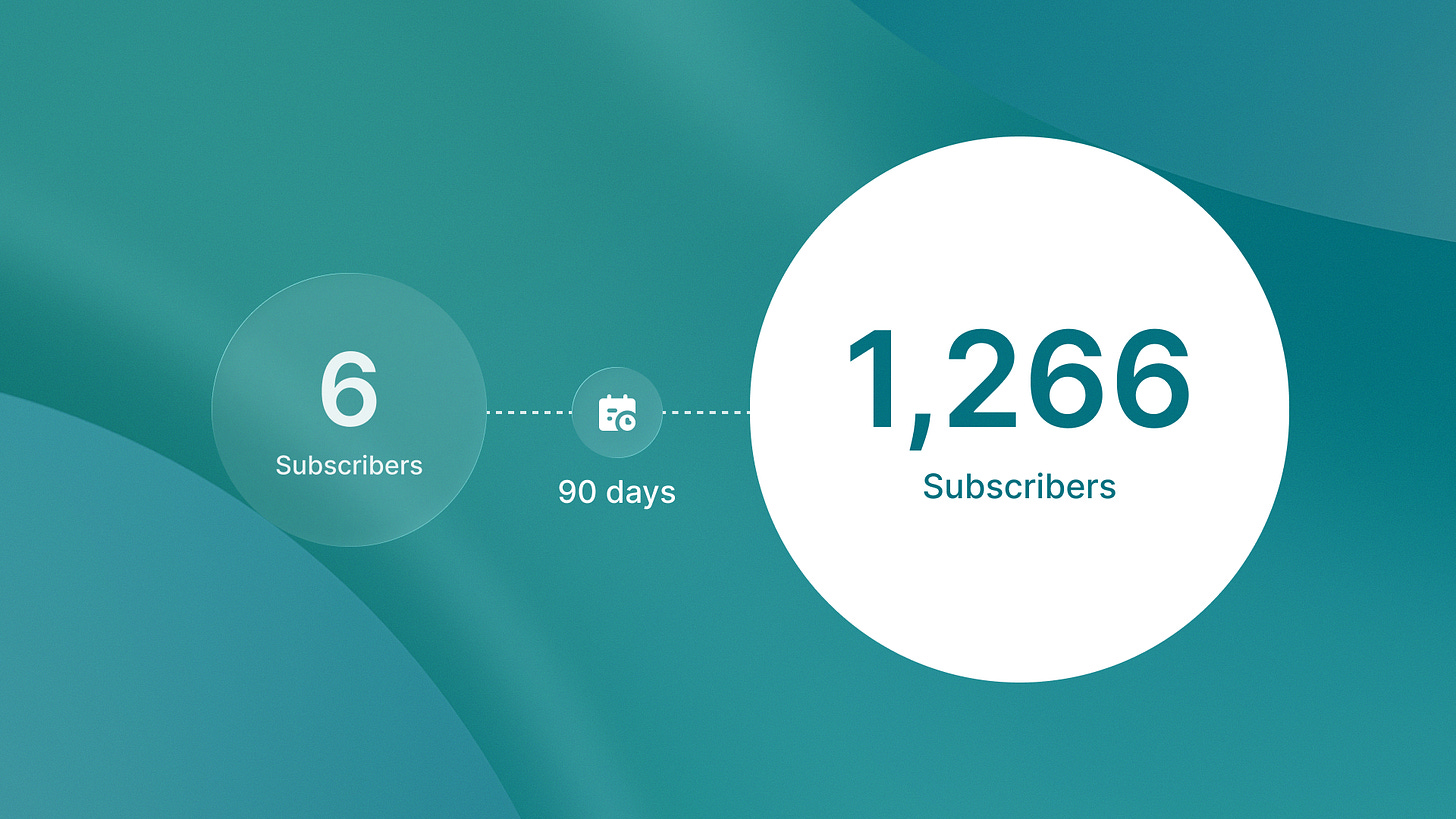

Here’s what caught my attention. Islands grew its newsletter from 6 to 1,266 subscribers in 180 days using a 5-agent content stack. Not a monolithic AI writing assistant. Not a single GPT-4 instance with a long prompt. Five specialized agents, each handling one discrete workflow step: research, structure, optimization, publishing, analysis.

The difference shows up in the numbers. Compared with single-agent approaches, organizations using AI content agents report 45% increases in organic traffic and 3x faster content production. Multi-agent workflows saw 327% growth on the Databricks platform from 2024 to 2025. Gartner tracked a 1,445% surge in multi-agent system inquiries from Q1 2024 to Q2 2025.

But here’s the reality: demos are easy. Production systems are where most teams fail. The gap isn’t about building specialist agents. It’s about orchestration engineering.

Why specialist agents beat monolithic models

Single-agent content tools hit a ceiling fast. One model trying to handle research, structure, optimization, and publishing creates context bleeding. The model loses track of source citations while trying to maintain narrative flow. It optimizes for keywords while forgetting the strategic angle. Quality degrades at scale.

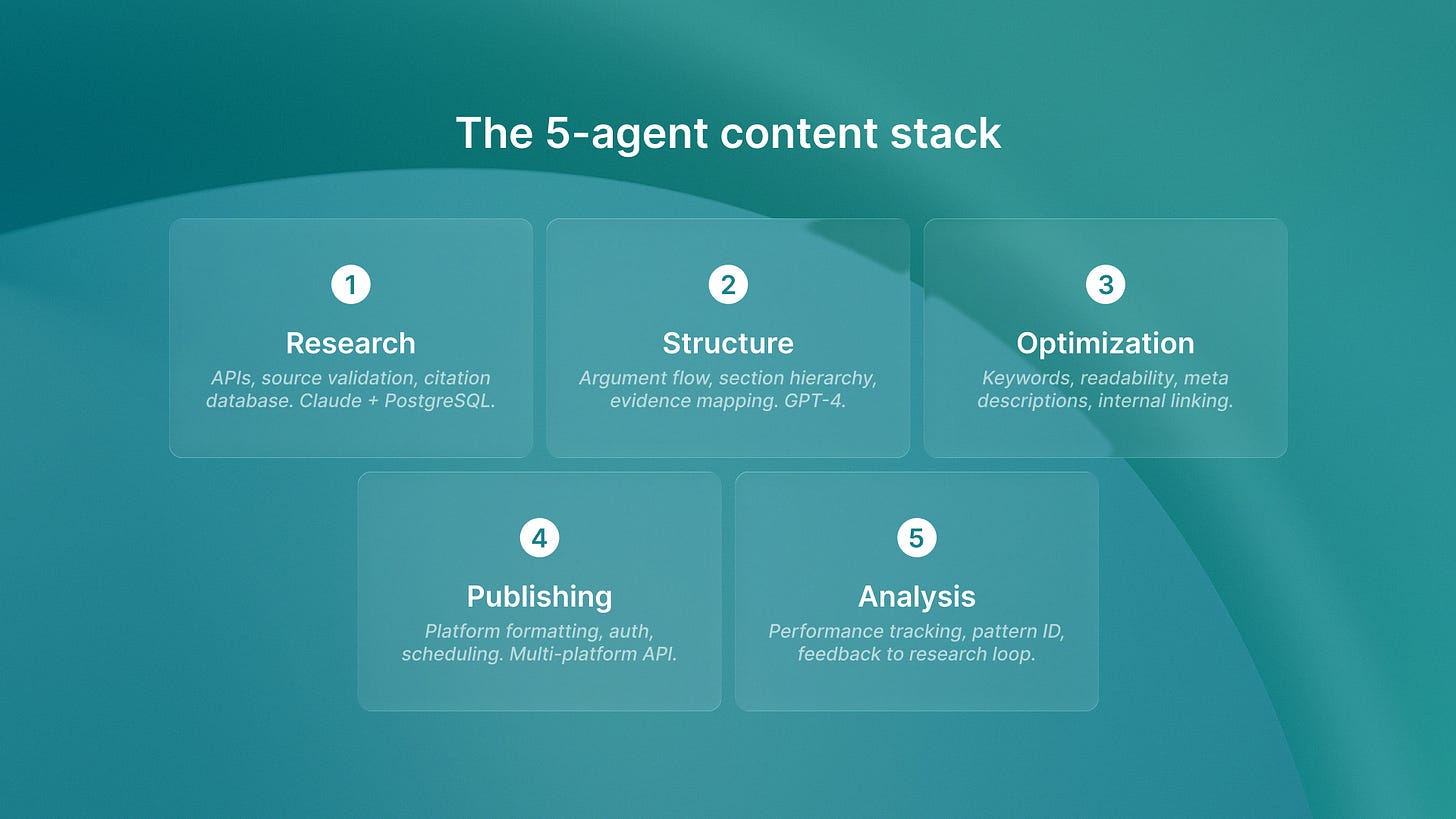

Specialist agents solve this by doing one thing well:

Research agent: Pulls data, validates sources, builds evidence base

Structure agent: Maps argument flow, defines section hierarchy

Optimization agent: Injects keywords, tightens prose, fixes formatting

Publishing agent: Handles platform-specific formatting, scheduling

Analysis agent: Tracks performance, identifies patterns

Each agent has a narrow context window focused on its domain. The research agent doesn’t need to know about keyword density. The optimization agent doesn’t need to validate source URLs. Task specialization reduces hallucination risk and makes debugging possible.
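To make the narrow-context idea concrete, here is a minimal sketch of how an orchestrator might hand each agent only the fields it needs. The field names and the `AGENT_CONTEXT_FIELDS` mapping are hypothetical, chosen for illustration rather than taken from a production system.

```python
from typing import Any

# Hypothetical mapping: which shared-state fields each agent is allowed to see.
AGENT_CONTEXT_FIELDS: dict[str, tuple[str, ...]] = {
    "research": ("topic",),
    "structure": ("topic", "evidence"),
    "optimization": ("outline", "keywords"),
    "publishing": ("final_markdown", "platform"),
    "analysis": ("published_url",),
}


def context_for(agent: str, shared_state: dict[str, Any]) -> dict[str, Any]:
    """Return only the fields this agent needs; everything else is withheld."""
    allowed = AGENT_CONTEXT_FIELDS[agent]
    return {key: shared_state[key] for key in allowed if key in shared_state}


# Illustrative shared state partway through a run.
shared_state = {
    "topic": "multi-agent orchestration",
    "evidence": [{"claim": "example claim", "url": "https://example.com/source"}],
    "outline": ["Intro", "Orchestration"],
    "keywords": ["multi-agent", "orchestration"],
}

# The optimization agent sees the outline and keywords, but no source URLs.
optimization_context = context_for("optimization", shared_state)
```

Keeping the allow-list in one place also helps debugging: when an agent produces something odd, you can see exactly what it was given.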

We deployed this architecture across QA flow, ReachSocial, and Shoreline. Same pattern: specialist agents coordinated through explicit orchestration. The alternative, a monolithic AI system, replicates 2010-era architectural failures and creates the same scaling problems.

We solved these problems a decade ago in software engineering.

The orchestration layer determines success

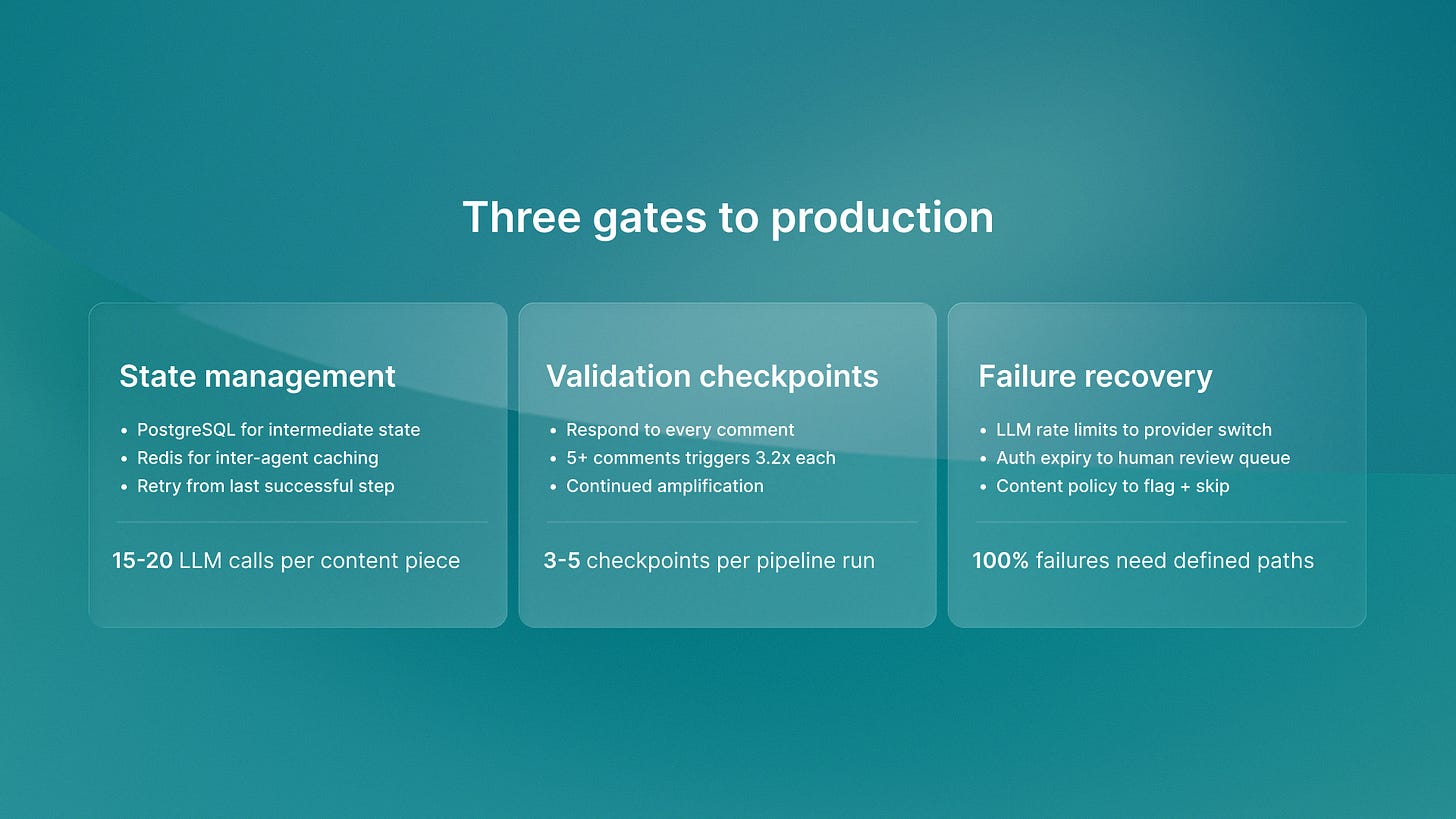

Here’s where 60% of projects stall. Teams build five specialist agents. They wire them together with basic function calls. Then they discover the system can’t handle production load. Agents time out. Context gets lost between steps. One agent hallucinates and breaks the entire chain. No recovery mechanism exists.

Production multi-agent content systems require explicit state management. Every agent needs to persist its output in PostgreSQL before the next agent runs. When the optimization agent fails, the system can retry from the structure agent’s output without rerunning research. When an LLM API goes down, the orchestration layer switches providers automatically.
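A rough sketch of that persist-then-proceed pattern follows. It assumes a single table keyed by run and agent, and it uses Python's built-in `sqlite3` as a stand-in for PostgreSQL so the example runs anywhere. The table name, step functions, and simulated failure are all hypothetical.

```python
import json
import sqlite3
from typing import Callable

Step = tuple[str, Callable[[dict], dict]]


def init_store(conn: sqlite3.Connection) -> None:
    conn.execute(
        "CREATE TABLE IF NOT EXISTS agent_output ("
        "run_id TEXT, agent TEXT, payload TEXT, PRIMARY KEY (run_id, agent))"
    )


def run_pipeline(conn: sqlite3.Connection, run_id: str,
                 steps: list[Step], initial: dict) -> dict:
    """Run steps in order, skipping any whose output is already persisted."""
    data = initial
    for agent, fn in steps:
        row = conn.execute(
            "SELECT payload FROM agent_output WHERE run_id = ? AND agent = ?",
            (run_id, agent),
        ).fetchone()
        if row is not None:
            data = json.loads(row[0])  # reuse the saved output
            continue
        data = fn(data)  # may raise; earlier outputs are already committed
        conn.execute(
            "INSERT INTO agent_output VALUES (?, ?, ?)",
            (run_id, agent, json.dumps(data)),
        )
        conn.commit()
    return data


calls = {"research": 0, "optimization": 0}


def research(data: dict) -> dict:
    calls["research"] += 1
    return {**data, "evidence": ["source-1"]}


def structure(data: dict) -> dict:
    return {**data, "outline": ["intro", "body"]}


def optimization(data: dict) -> dict:
    calls["optimization"] += 1
    if calls["optimization"] == 1:
        raise RuntimeError("simulated LLM timeout")
    return {**data, "optimized": True}


steps = [("research", research), ("structure", structure),
         ("optimization", optimization)]
conn = sqlite3.connect(":memory:")
init_store(conn)

try:
    run_pipeline(conn, "run-1", steps, {"topic": "demo"})
except RuntimeError:
    pass  # first attempt fails at the optimization step

# Second attempt resumes from the structure agent's saved output.
result = run_pipeline(conn, "run-1", steps, {"topic": "demo"})
```

On the second call the research function never runs again: its output is read back from the store, which is the property that makes retries cheap.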

State management requirements

We use Temporal for agent orchestration across portfolio deployments. Temporal handles retries, timeouts, and partial failures. If the publishing agent fails after the optimization agent succeeds, Temporal retries just the publishing step. The system doesn’t re-optimize content that already passed validation.
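The step-level retry behavior can be illustrated without any orchestration framework. The sketch below is plain Python, not the Temporal SDK. `retry_step`, its parameters, and the simulated outage are hypothetical names used to show the idea of retrying only the step that failed.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def retry_step(fn: Callable[[], T], max_attempts: int = 3,
               base_delay: float = 0.5,
               sleep: Callable[[float], None] = time.sleep) -> T:
    """Call fn until it succeeds or attempts run out, backing off exponentially."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except Exception:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error
            sleep(base_delay * 2 ** (attempt - 1))
    raise RuntimeError("unreachable")


attempts = {"optimize": 0, "publish": 0}


def optimize() -> str:
    attempts["optimize"] += 1
    return "optimized markdown"


def make_publish(content: str) -> Callable[[], str]:
    def publish() -> str:
        attempts["publish"] += 1
        if attempts["publish"] < 3:
            raise ConnectionError("simulated platform outage")
        return f"published: {content}"
    return publish


# Optimization succeeds once; only publishing is retried.
content = retry_step(optimize)
receipt = retry_step(make_publish(content), sleep=lambda _seconds: None)
```

Because each step is wrapped separately, the two publishing failures never trigger another optimization call. A workflow engine adds durability on top of this, so the retry survives a worker crash as well.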

Validation checkpoints

Inter-agent validation checkpoints catch problems early. The structure agent validates that research citations include URLs before proceeding. The optimization agent confirms keyword density targets before passing to publishing. The publishing agent verifies platform authentication before attempting upload. Each checkpoint prevents downstream failures.
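Here is one way the first two checkpoints could look in code. It is a simplified sketch: the `Citation` shape, the `CheckpointError` type, and the density thresholds are assumptions, and the density function only handles single-word keywords.

```python
from dataclasses import dataclass


class CheckpointError(Exception):
    """Raised when an agent's output fails validation before handoff."""


@dataclass
class Citation:
    claim: str
    url: str


def check_citations(citations: list[Citation]) -> None:
    """Block the handoff if any citation lacks an http(s) URL."""
    missing = [c.claim for c in citations
               if not c.url.startswith(("http://", "https://"))]
    if missing:
        raise CheckpointError(f"citations without URLs: {missing}")


def keyword_density(text: str, keyword: str) -> float:
    """Share of whitespace-separated words equal to a single-word keyword."""
    words = text.lower().split()
    return words.count(keyword.lower()) / len(words) if words else 0.0


def check_keyword_density(text: str, keyword: str,
                          minimum: float, maximum: float) -> None:
    """Block the handoff if density falls outside the target range."""
    density = keyword_density(text, keyword)
    if not minimum <= density <= maximum:
        raise CheckpointError(
            f"density {density:.3f} for {keyword!r} outside "
            f"[{minimum}, {maximum}]"
        )


draft = "agents coordinate agents through orchestration"
check_citations([Citation("Claim A", "https://example.com/a")])  # passes
check_keyword_density(draft, "agents", minimum=0.1, maximum=0.5)  # passes

try:
    check_citations([Citation("Claim B", "")])
    blocked = False
except CheckpointError:
    blocked = True  # the run stops here instead of failing downstream
```

Raising a dedicated exception type lets the orchestration layer tell a validation failure apart from an infrastructure failure and route each one differently.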

Failure recovery protocols

Failure recovery protocols matter more than success paths. Production AI systems fail constantly: LLM rate limits, API timeouts, authentication expiration, content policy violations. The orchestration layer needs defined fallback behavior for every failure mode. Does a citation validation failure block publishing or trigger human review? Does a keyword density miss retry optimization or proceed with a warning?
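One lightweight way to force those decisions is to write the policy down as data. The sketch below maps each failure mode named above to a fallback action. The specific choices, such as sending citation failures to human review, are illustrative answers to the questions above, not recommendations, and the enum names are hypothetical.

```python
from enum import Enum


class Failure(Enum):
    RATE_LIMIT = "rate_limit"
    API_TIMEOUT = "api_timeout"
    AUTH_EXPIRED = "auth_expired"
    POLICY_VIOLATION = "policy_violation"
    CITATION_INVALID = "citation_invalid"
    KEYWORD_DENSITY_MISS = "keyword_density_miss"


class Action(Enum):
    RETRY_WITH_BACKOFF = "retry_with_backoff"
    SWITCH_PROVIDER = "switch_provider"
    REFRESH_CREDENTIALS = "refresh_credentials"
    HUMAN_REVIEW = "human_review"
    PROCEED_WITH_WARNING = "proceed_with_warning"


# Illustrative policy: every failure mode gets exactly one defined fallback.
FALLBACKS: dict[Failure, Action] = {
    Failure.RATE_LIMIT: Action.RETRY_WITH_BACKOFF,
    Failure.API_TIMEOUT: Action.SWITCH_PROVIDER,
    Failure.AUTH_EXPIRED: Action.REFRESH_CREDENTIALS,
    Failure.POLICY_VIOLATION: Action.HUMAN_REVIEW,
    Failure.CITATION_INVALID: Action.HUMAN_REVIEW,
    Failure.KEYWORD_DENSITY_MISS: Action.PROCEED_WITH_WARNING,
}

# Fail at startup, not in production, if a failure mode has no fallback.
_missing = set(Failure) - set(FALLBACKS)
if _missing:
    raise RuntimeError(f"no fallback defined for: {_missing}")


def fallback_for(failure: Failure) -> Action:
    return FALLBACKS[failure]
```

The completeness check matters more than the table itself: adding a new failure mode without deciding how to handle it stops the program from loading.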

Most teams skip these protocols during prototyping. They build the happy path where every agent succeeds on first attempt. Then production traffic hits and the gap between demo and production stalls the entire project for months.

Production costs that demos ignore

Real production costs for multi-agent content systems run $50-60 per day. Not the free demo tier numbers that create false business cases.

Here’s the actual infrastructure required:

PostgreSQL instance for state persistence across agent runs

Temporal workers for orchestration execution

Redis for caching between agent steps

Monitoring infrastructure for hallucination detection

Redundant LLM providers for uptime guarantees

LLM calls are the obvious cost. Five agents making 3-5 API calls each per content piece adds up fast. But the hidden costs kill more projects: database hosting, orchestration compute, monitoring storage, provider redundancy.
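The arithmetic is simple enough to put in a small calculator. Every number in the example below is a placeholder, not a measured figure from our deployments; substitute your own call counts, per-call prices, and infrastructure bills.

```python
from dataclasses import dataclass


@dataclass
class DailyCostInputs:
    pieces_per_day: int
    agents: int
    calls_per_agent: float       # average LLM calls per agent per piece
    cost_per_call: float         # USD per LLM call (placeholder)
    fixed_infra_per_day: float   # USD: database, orchestration, cache, monitoring


def daily_cost(inputs: DailyCostInputs) -> dict[str, float]:
    """Split a day's spend into variable LLM cost and fixed infrastructure."""
    llm = (inputs.pieces_per_day * inputs.agents
           * inputs.calls_per_agent * inputs.cost_per_call)
    return {
        "llm": llm,
        "infra": inputs.fixed_infra_per_day,
        "total": llm + inputs.fixed_infra_per_day,
    }


# Placeholder inputs for illustration only.
estimate = daily_cost(DailyCostInputs(
    pieces_per_day=10,
    agents=5,
    calls_per_agent=4,
    cost_per_call=0.01,
    fixed_infra_per_day=10.0,
))
```

Separating the two terms makes the point of this section visible: the LLM line scales with volume, while the infrastructure line is there from the first piece of content.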

We track these costs across Islands portfolio companies with documented P&L impact. ReachSocial processes 12,000 LinkedIn messages monthly through a multi-agent system. The orchestration infrastructure costs more than the LLM calls because message volume creates state management complexity.

Teams building on free tiers discover production economics too late. The demo worked on 10 pieces of content with manual validation. Scaling to 100 pieces per day requires infrastructure investment that wasn’t in the original business case. Projects get defunded before they prove value.

The 5-agent stack we deployed

Islands built a production content system we now run across portfolio companies. The content automation architecture is simple but requires all five layers:

Research agent

Queries APIs, validates sources, builds citation database. Uses Claude for synthesis, PostgreSQL for source storage. Outputs structured JSON with evidence mapped to argument points.
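As an illustration of what "evidence mapped to argument points" could look like, here is a hypothetical schema. The class and field names are assumptions made for this sketch, not the actual format the research agent emits.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class Evidence:
    source_url: str
    excerpt: str


@dataclass
class ArgumentPoint:
    claim: str
    evidence: list[Evidence] = field(default_factory=list)


@dataclass
class ResearchOutput:
    topic: str
    points: list[ArgumentPoint]

    def to_json(self) -> str:
        """Serialize nested dataclasses to the JSON handed to the next agent."""
        return json.dumps(asdict(self), indent=2)

    def unsupported_claims(self) -> list[str]:
        """Claims with no evidence attached; a downstream check can block on these."""
        return [p.claim for p in self.points if not p.evidence]


output = ResearchOutput(
    topic="example topic",
    points=[
        ArgumentPoint(
            claim="first claim",
            evidence=[Evidence("https://example.com/report", "quoted passage")],
        ),
        ArgumentPoint(claim="second claim"),  # no evidence yet
    ],
)

payload = output.to_json()
```

Keeping each claim next to its sources means a later step can verify evidence coverage with a simple scan instead of re-reading the prose.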

Structure agent

Takes research output, maps narrative flow, defines section hierarchy. Uses GPT-4 for outline generation. Validates that every claim has supporting evidence from research. Outputs markdown structure with placeholder content.

Optimization agent

Injects keywords, tightens prose, fixes formatting. Uses specialized prompts for readability scoring. Validates meta descriptions, heading structure, internal linking. Outputs publication-ready markdown.

Publishing agent

Handles platform-specific formatting, authentication, scheduling. Substack, Medium, and LinkedIn each have different requirements. The publishing agent knows each platform’s API, rate limits, and content policies.

Analysis agent

Tracks performance metrics, identifies patterns, feeds insights back to research. Connects Google Analytics, platform analytics, and internal tracking. Outputs performance dashboards that inform next content decisions.

Each agent runs independently. The orchestration layer (Temporal) sequences execution, manages state transitions, and handles failures. A typical content piece makes 15-20 LLM calls across all five agents. The system saves intermediate state after each agent completes.

We deployed this for the Islands newsletter first. Six subscribers to 1,266 in 180 days. Then we rolled it out to QA flow for technical documentation, ReachSocial for LinkedIn content workflows, and Shoreline for customer education. Same architecture, different content domains.

This architecture also applies beyond traditional content. Small businesses using AI tools to automate operations face the same orchestration challenges when they try to scale from single tools to integrated workflows. The pattern holds: specialist agents beat monolithic tools, but orchestration determines whether you ship or stall.

Why autonomous content workflow matters for GEO

Here’s what most teams miss: multi-agent content systems don’t just produce content faster. They produce content optimized for how AI systems actually consume information.

Generative engine optimization requires fundamentally different content structure than traditional SEO. AI citation engines prioritize semantic depth, structured formatting, and clear attribution over keyword density. Single-agent tools still optimize for 2015-era search algorithms. Multi-agent systems can orchestrate content specifically for AI visibility.

The research agent builds citation-rich evidence bases. The structure agent creates hierarchical information architecture that AI models parse effectively. The optimization agent applies GEO-specific formatting patterns. The analysis agent tracks AI referral traffic and adjusts the autonomous content workflow accordingly.

We’re seeing this play out across portfolio companies. When both target the same keywords, content created by multi-agent systems appears in AI-generated responses three times more often than human-written content. The difference isn’t writing quality. It’s structural optimization that happens at the orchestration layer.

Why timing matters for competitive advantage

Multi-agent content systems are moving from experimental to production standard in 2026. The gap between companies that ship and those that stall comes down to orchestration engineering. Demos prove nothing. Production AI systems with state management, failure recovery, and cost controls prove everything.

Most teams will spend 12-18 months learning these lessons the hard way. They’ll build specialist agents, discover orchestration gaps, retrofit state management, and finally ship a production system. The teams that adopt proven orchestration patterns from day one will ship in 8-12 weeks.

Islands doesn’t hand off architecture recommendations. We build these systems and run them long-term for portfolio companies. Competitive advantage comes from operational experience: knowing which LLM providers fail most often, which validation checks catch issues early, and which cost optimizations work at scale.

If you are evaluating multi-agent content systems for your organization, specialist agents will outperform monolithic models. The question is whether your orchestration layer can handle production reality. Because that’s what determines whether you ship or stall.

Ready to build production-grade multi-agent systems? Explore how Islands helps portfolio companies ship faster.