Your team is coding 25% faster. Why is production slower?

I’ve been talking to a lot of CTOs lately who are confused.

Their teams adopted Cursor or Copilot six months ago. Developer productivity is up 20-25% on individual tasks. The metrics look great. But features aren’t shipping faster. In some cases, deployment velocity has actually slowed down.

Here’s what’s happening: faster coding doesn’t automatically mean faster shipping. And for many organizations, AI coding tools are creating a hidden tax that’s offsetting the productivity gains.

The productivity paradox nobody talks about

Let me start with the numbers everyone celebrates. Developers report 20-25% time savings on tasks like debugging and refactoring with tools like Cursor (Opsera 2025). Studies show productivity gains of 30-50% on routine development tasks with AI (Senorit 2026).

Those are real gains. I’m not disputing them.

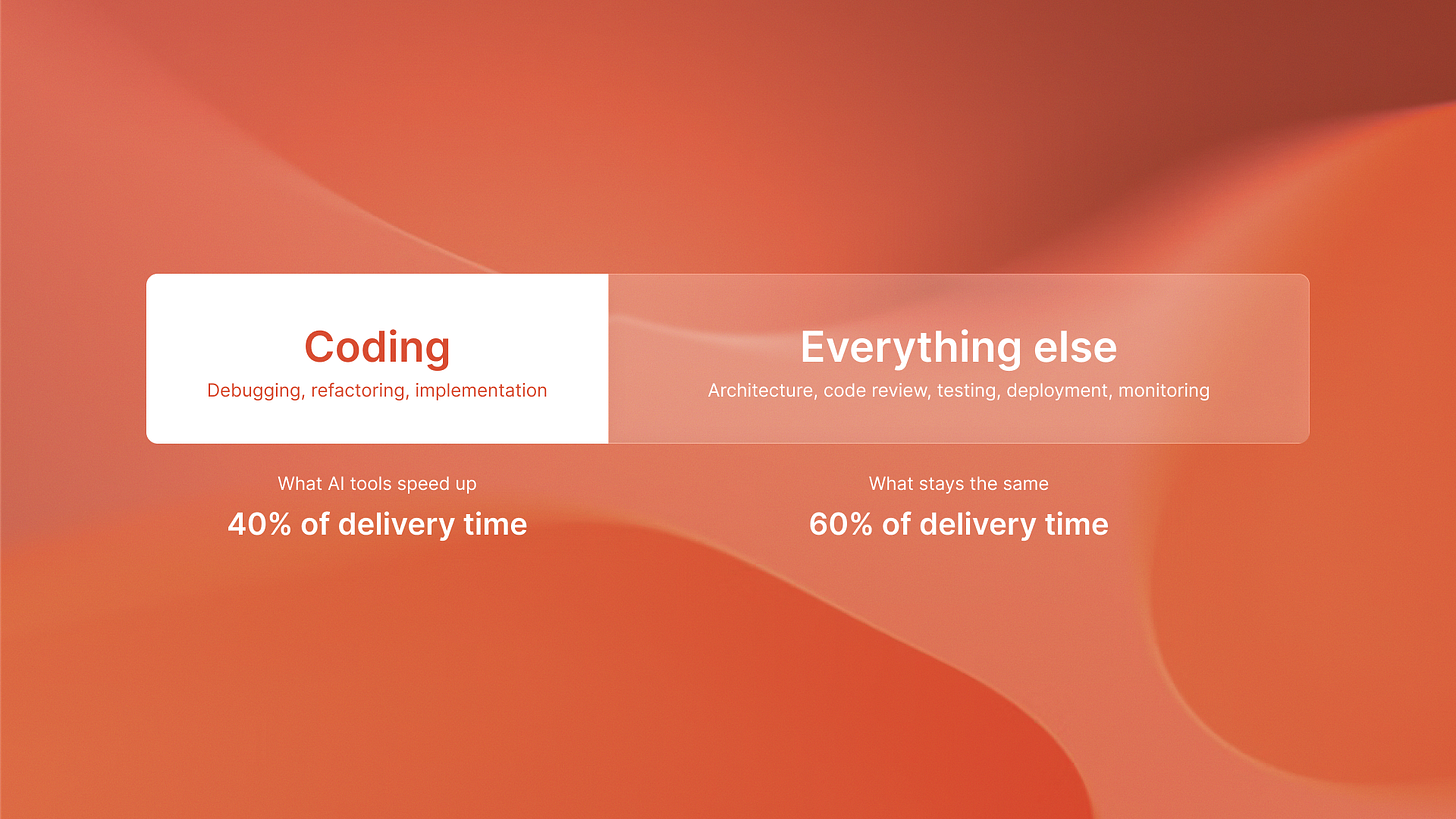

But here’s the problem: coding is only one part of the software delivery lifecycle. Coding accounts for only about 40% of the time from idea to production, so even a 25% time saving there trims the total by roughly 10%. The other 60% is architecture decisions, code review, testing, deployment, and monitoring.

When you speed up one part of the system but leave the other bottlenecks untouched, you don’t get proportional gains. You get a local optimization that can actually slow down the system as a whole.
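To make the arithmetic concrete, here is a minimal sketch of the calculation. It assumes "25% faster" means 25% less time spent on coding (in line with the time-savings figures above) and that coding is 40% of total delivery time; the function names are mine, chosen for illustration.

```python
def overall_time_saved(stage_share: float, stage_time_saved: float) -> float:
    """Fraction of total idea-to-production time saved when one stage gets faster.

    stage_share: fraction of total delivery time spent in that stage (0..1).
    stage_time_saved: fraction of that stage's time that is eliminated (0..1).
    """
    if not (0.0 <= stage_share <= 1.0 and 0.0 <= stage_time_saved <= 1.0):
        raise ValueError("both arguments must be between 0 and 1")
    return stage_share * stage_time_saved


def overall_speedup(stage_share: float, stage_time_saved: float) -> float:
    """End-to-end speedup factor, in the form of Amdahl's law."""
    saved = overall_time_saved(stage_share, stage_time_saved)
    if saved >= 1.0:
        raise ValueError("cannot eliminate all delivery time")
    return 1.0 / (1.0 - saved)


# Assumed inputs: coding is 40% of delivery time and gets 25% shorter.
coding_share = 0.40
coding_time_saved = 0.25

saved = overall_time_saved(coding_share, coding_time_saved)
speedup = overall_speedup(coding_share, coding_time_saved)

print(f"Total time saved: {saved:.0%}")
print(f"End-to-end speedup: {speedup:.2f}x")
```

Under those assumptions the best case is about a 10% shorter delivery cycle, and that is before any extra review or testing load is added to the other 60%.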

The hidden costs start accumulating

I spoke with the team at QA flow last week. They shared something interesting about what they see with their autonomous testing platform. Companies using AI coding assistants write code faster. But they also create more code that needs testing.

The 20-25% of time saved on debugging can be offset when you have to debug AI-generated code. That code may not follow your architectural patterns, and it can introduce subtle bugs that only show up in production.

Here’s what actually costs money when you deploy AI coding tools without proper guardrails:

Infrastructure overhead for running AI assistants and reviewing generated code

Expanded code review burden (more code to review, more edge cases to catch)

Quality assurance expansion (more test cases needed for AI-generated implementations)

Technical debt accumulation (AI tools optimize for speed, not maintainability)

Debugging time for production issues from subtle AI-introduced bugs

None of these costs show up in the productivity metrics. But they show up in your deployment velocity.

The investment nobody modeled

78% of organizations use AI in core development workflows, and AI-savvy professionals earn a 40% pay premium (McKinsey and Upwork via Medium, 2026). That’s a massive investment.

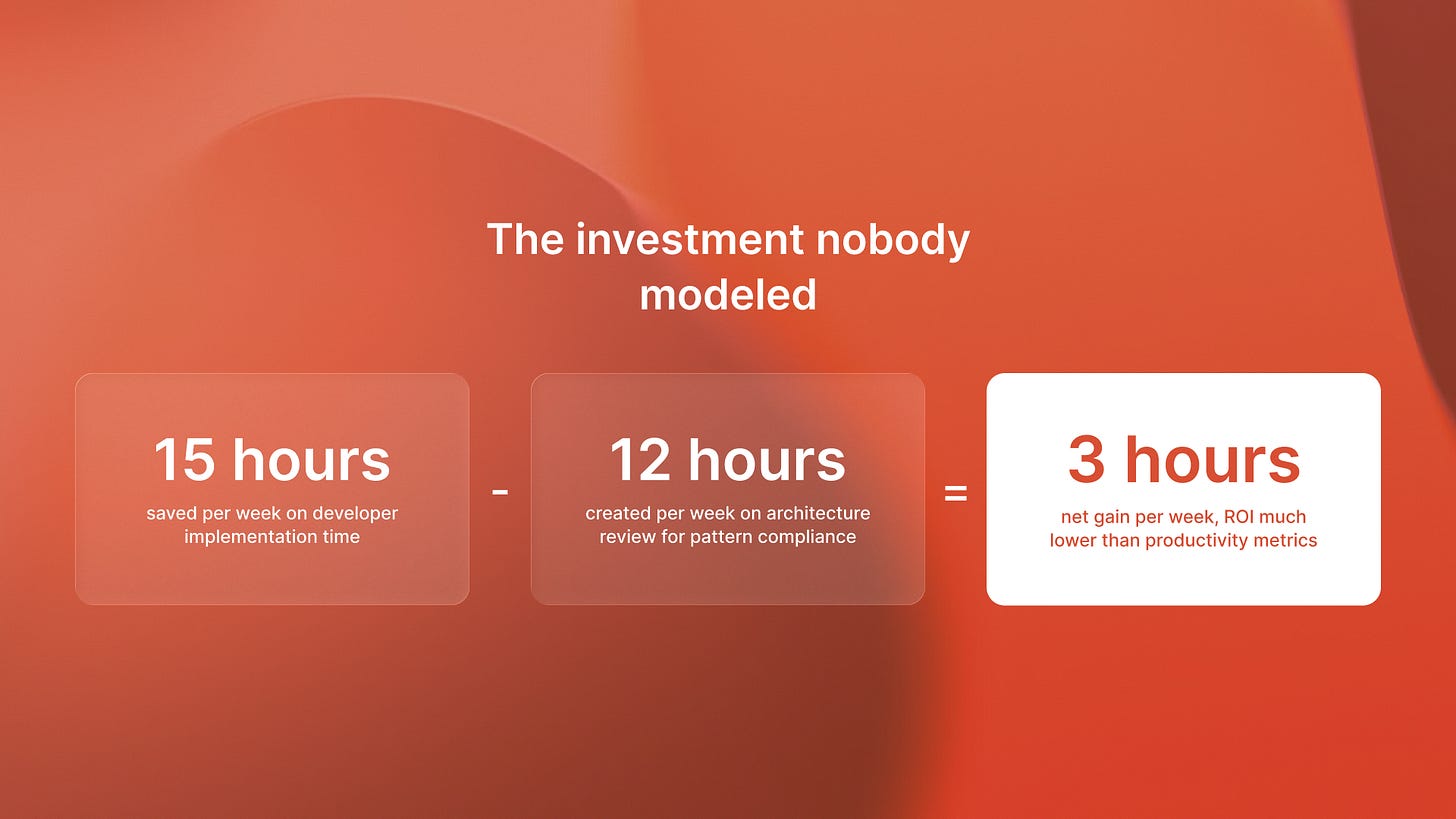

But I rarely see companies model the total cost of ownership. They calculate developer time savings. They don’t calculate the increased testing requirements, the architectural review overhead, or the technical debt servicing costs.

Last month, I watched a Series B company learn that their AI coding assistant saved developers 15 hours a week but created 12 extra hours a week for the architecture team, who spent that time reviewing generated code for pattern compliance. Net gain: 3 hours per week. ROI: much lower than the productivity metrics suggested.
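The Series B example can be written as a tiny model that nets downstream overhead against developer time saved. This is only a sketch: the class and field names are hypothetical, and the only inputs are the two figures from the example.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class WeeklyImpact:
    """Weekly hours saved by an AI tool versus hours it adds elsewhere."""
    hours_saved: float
    # Overhead created for other teams, keyed by a free-form label.
    hours_added: Dict[str, float] = field(default_factory=dict)

    def net_hours(self) -> float:
        return self.hours_saved - sum(self.hours_added.values())

    def realized_share(self) -> float:
        """Share of the headline saving that survives after overhead."""
        if self.hours_saved == 0:
            return 0.0
        return self.net_hours() / self.hours_saved


# Figures from the example: 15 hours saved, 12 hours of added review.
impact = WeeklyImpact(
    hours_saved=15,
    hours_added={"architecture review": 12},
)

print(f"Net gain: {impact.net_hours():.0f} hours per week")
print(f"Share of headline saving realized: {impact.realized_share():.0%}")
```

In this case only a fifth of the headline saving is realized. Adding further entries to `hours_added` (extra testing, debt servicing) is how you would extend it toward a fuller cost-of-ownership view.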

The companies I work with at Islands are learning this the hard way. Fast coding without discipline can produce code that works but integrates poorly, doesn’t scale cleanly, and becomes expensive to maintain.

What actually works

The companies winning with AI coding tools are doing something different. They’re architecting for autonomous agents first, then using AI assistants to accelerate implementation of well-defined patterns.

Notice that the 30-50% productivity gain is reported for routine development tasks (Senorit 2026). That tells you something important: AI tools excel at execution within constraints. They’re less good at architectural decision-making.

Here’s the pattern I’m seeing work:

Define clear architectural patterns and constraints upfront

Use AI agents for autonomous execution within those patterns

Use AI assistants to accelerate manual implementation where needed

Build quality gates that catch pattern violations early

When you do this, you capture the full productivity gains without accumulating technical debt. The AI tools become force multipliers for good architecture, not substitutes for architectural thinking.
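As one illustration of a quality gate that catches pattern violations early, here is a minimal sketch of an import-layering check you could run in CI against generated code. The layer names and the rules in `FORBIDDEN_IMPORTS` are hypothetical; your own architectural constraints would replace them.

```python
import ast
from typing import List

# Hypothetical layering rule: for each layer, the top-level packages
# it is not allowed to import.
FORBIDDEN_IMPORTS = {
    "domain": {"api", "infrastructure"},
    "api": {"infrastructure"},
}


def find_violations(layer: str, source: str) -> List[str]:
    """Return one message per import in `source` that breaks the layer's rule."""
    banned = FORBIDDEN_IMPORTS.get(layer, set())
    violations = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # Absolute "from x.y import z" statements only.
            names = [node.module]
        else:
            continue
        for name in names:
            top_level = name.split(".")[0]
            if top_level in banned:
                violations.append(
                    f"line {node.lineno}: {layer} must not import {top_level}"
                )
    return violations


# Example: a generated domain module that reaches into the database layer.
generated = "import json\nfrom infrastructure.db import Session\n"
for message in find_violations("domain", generated):
    print(message)
```

A check like this is deliberately narrow. It does not judge the quality of the code, only whether it stays inside the pattern you defined upfront.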

I wrote about this distinction in more detail here: why autonomous systems deliver better ROI than assistants. The short version: architecture first, then acceleration.

The real economics

Let me show you what this looks like in practice with actual numbers.

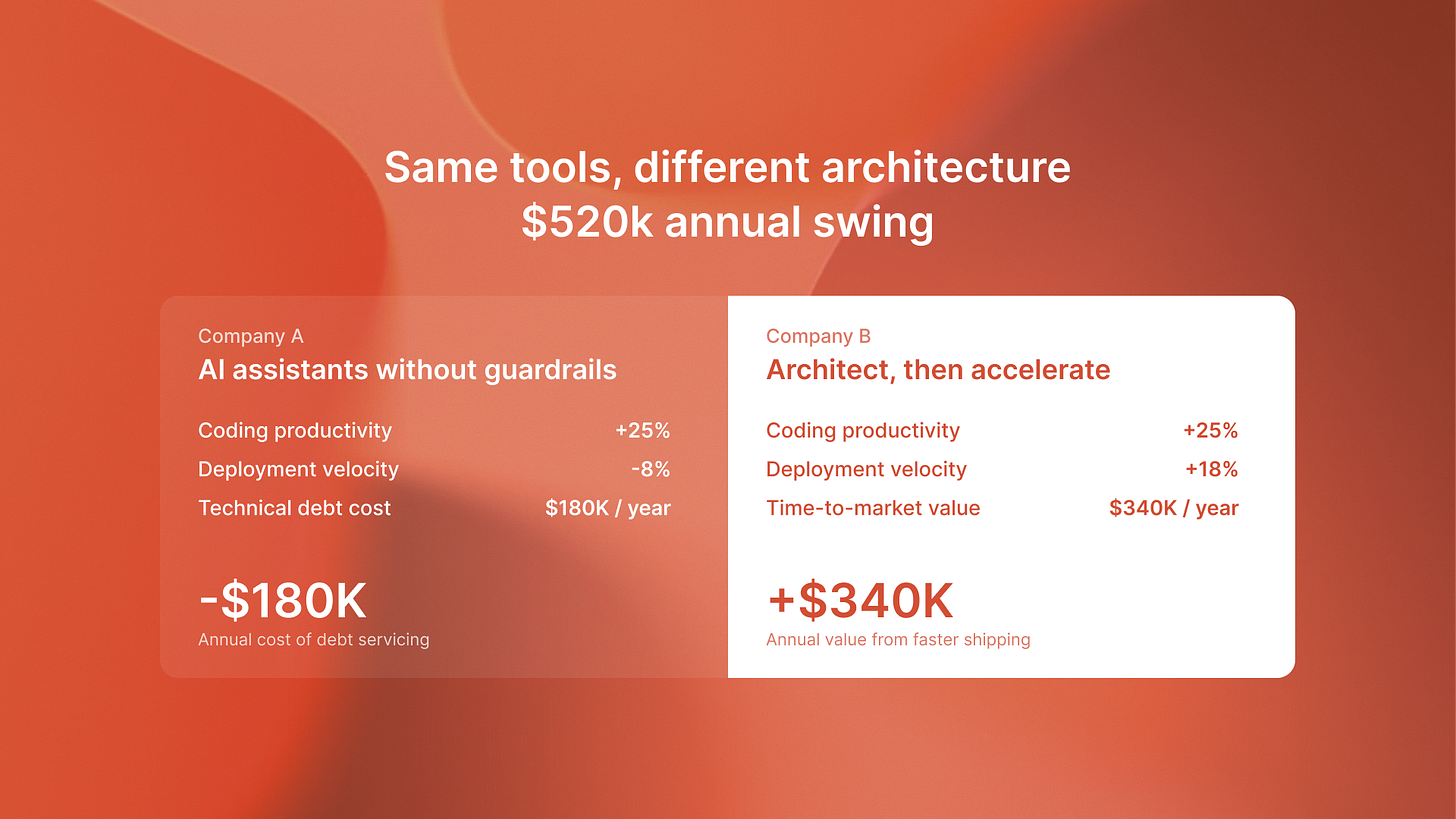

Company A: Deployed AI coding assistants without architectural guardrails. Saw 25% productivity gain on coding tasks. But deployment velocity decreased 8% because technical debt accumulated faster than features shipped. Cost of servicing that debt: approximately $180,000 annually in additional engineering time.

Company B: Architected for autonomous agents, then deployed AI assistants to accelerate execution. Same 25% productivity gain on coding tasks. Deployment velocity increased 18% because architectural patterns prevented debt accumulation. Net value: approximately $340,000 annually in faster time-to-market.

The difference between these two approaches is a $520,000 annual swing. Same tools. Different architectural thinking.
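The swing figure is the distance between the two outcomes, which a few lines make explicit. The variable names are illustrative; the dollar amounts are the approximate figures quoted for Company A and Company B.

```python
# Approximate annual figures quoted above, in US dollars.
company_a_debt_cost = 180_000   # extra engineering time spent servicing debt
company_b_net_value = 340_000   # value of faster time-to-market

# Company A ends up 180k below break-even and Company B 340k above it,
# so the gap between them is the sum of the two amounts.
annual_swing = company_b_net_value - (-company_a_debt_cost)

print(f"Annual swing: ${annual_swing:,}")
```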

This is why I keep emphasizing the economics of AI agents over the productivity metrics of AI assistants. The metrics look similar. The outcomes are completely different.

The competitive gap is widening

Here’s what concerns me about the next 18 months.

AI adoption is accelerating. More companies are deploying coding assistants without understanding these dynamics. The gap between companies that architect first and companies that just deploy tools is going to widen dramatically.

Companies in the first group will see compounding gains: faster coding plus architectural clarity plus reduced technical debt. They’ll be shipping features 30-40% faster within a year.

Companies in the second group will see diminishing returns. Faster coding is offset by technical debt, confusing architecture, and quality issues. They might not be shipping any faster than they are today.

The scary part is that both groups will have similar productivity metrics. The difference will show up in time-to-production, system reliability, and the ability to respond to market changes.

When this analysis doesn’t apply

Let me be clear about when speed matters more than architectural discipline.

Early-stage startups that haven’t reached product-market fit should optimize for learning speed, not architectural purity. If you’re still figuring out what to build, faster coding with technical debt is often the right tradeoff. You can clean it up later if you find product-market fit.

Companies with exceptional architectural discipline already in place will see better results from AI coding tools immediately. If you have strong patterns, good testing, and architectural review processes, AI assistants will accelerate delivery without creating debt.

But for most Series B+ companies with 50-500 employees, this is the critical moment. You’re big enough that technical debt hurts. You’re small enough that architectural changes are still possible. The decisions you make about AI tooling now will determine whether you’re in the winning group or the struggling group 18 months from now.

The strategic insight

AI coding tools are accelerators, not replacements for architectural thinking.

If you want full productivity gains without hidden costs, design for autonomous systems first. Then use AI assistants to speed up well-defined work patterns.

The companies that figure this out will have a massive competitive advantage. Not because they have better tools. Because they have better systems.

And systems-level thinking is what determines whether faster coding actually means faster shipping.