Your team adopted AI tools. Your workflows didn't.

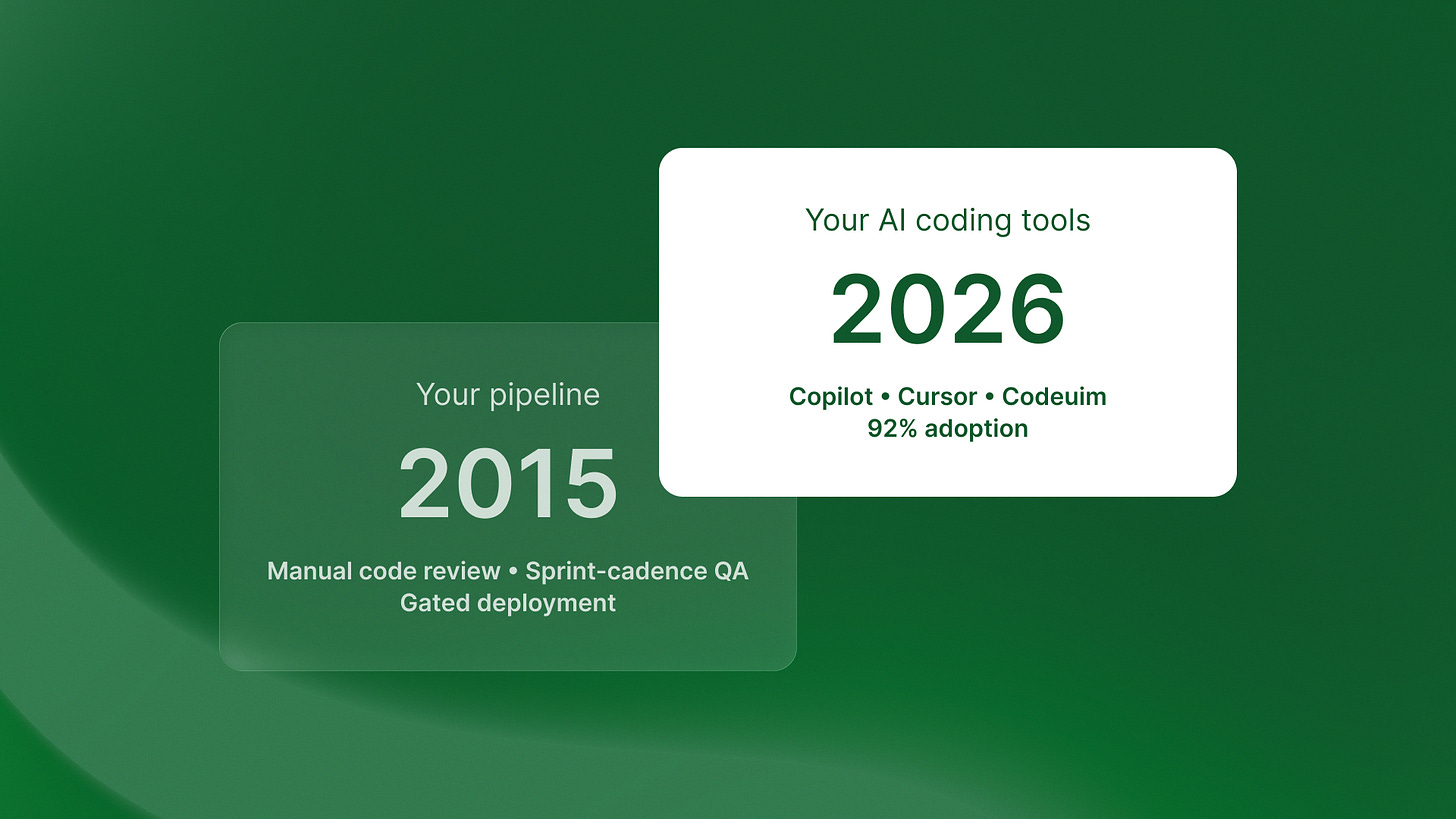

I’ve been watching something unfold across our portfolio that nobody’s talking about publicly. Your engineering teams jumped on AI coding tools. GitHub Copilot, Cursor, Codeium. The adoption numbers are staggering: 92% of US developers now use these tools. Globally, we’re at 84%, up from 76% just last year.

But here’s what I’m seeing in production: most teams are still running 2015-era workflows. Same code review processes. Same testing pipelines. Same deployment gates. They bolted AI onto legacy processes instead of rebuilding for the new reality.

The gap between tool adoption and workflow evolution is creating technical debt at unprecedented scale. Let me show you what’s actually breaking.

The volume shift nobody prepared for

41% of worldwide code is now AI-generated. Think about that number for a second. Roughly two-fifths of the code shipping to production wasn’t written by human hands. It was generated, adapted, and merged by AI assistants working alongside your developers.

This isn’t a productivity enhancement. It’s a fundamental architecture shift.

I was talking to the team at QA flow last week, and they shared something that perfectly illustrates the problem. One of their clients, a fintech with about 120 engineers, adopted AI coding tools across the entire team last year. Developer velocity went up 3x immediately. Features that used to take two weeks were shipping in four days.

But their QA pipeline didn’t change. Same manual testing cadence. Same human review process designed for the old volume. Within three months, they had a backlog of 200+ untested features and their bug escape rate doubled. The AI was writing code faster than humans could validate it.

Here’s what actually broke: their architecture assumed human-paced development. Code review took 24-48 hours because that matched how fast developers could write features. Testing ran in weekly sprints because that aligned with the old velocity. Every gate in the pipeline was calibrated for manual coding speed.

When AI multiplied the code output, every downstream process became a bottleneck.
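To make the bottleneck concrete, here is a minimal sketch of a validation queue whose capacity stays fixed while feature output triples. Only the 3x multiplier comes from the example above; the weekly feature counts and the 12-week window are illustrative assumptions, not the fintech's real figures.

```python
def backlog_over_time(arrivals_per_week: float, capacity_per_week: float, weeks: int) -> list[float]:
    """Return the size of the unvalidated backlog at the end of each week."""
    backlog = 0.0
    history = []
    for _ in range(weeks):
        # Work arrives, the team validates up to its fixed capacity,
        # and whatever is left rolls over into the next week.
        backlog = max(0.0, backlog + arrivals_per_week - capacity_per_week)
        history.append(backlog)
    return history


# Hypothetical baseline: the team used to ship and validate 10 features a week.
BASELINE = 10
before = backlog_over_time(BASELINE, capacity_per_week=BASELINE, weeks=12)
after = backlog_over_time(3 * BASELINE, capacity_per_week=BASELINE, weeks=12)

print(before[-1])  # 0.0: capacity matches output, so nothing piles up
print(after[-1])   # 240.0: 20 unvalidated features added every week for 12 weeks
```

The point of the model is that the backlog grows linearly for as long as output exceeds validation capacity. No amount of prioritization fixes that; only raising capacity does.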

The workflow mismatch problem

Most teams are treating AI tools as productivity enhancers for existing workflows. That’s the wrong mental model. AI coding tools aren’t faster typewriters. They’re a complete paradigm shift in how code gets written, reviewed, and deployed.

The old workflow: Developer writes feature → PR review → Manual testing → Staging deploy → Production.

This made sense when writing code was the bottleneck. Now validation is the bottleneck. When 41% of the code is AI-generated, you can’t manually review that much additional volume with the same team size and maintain quality.

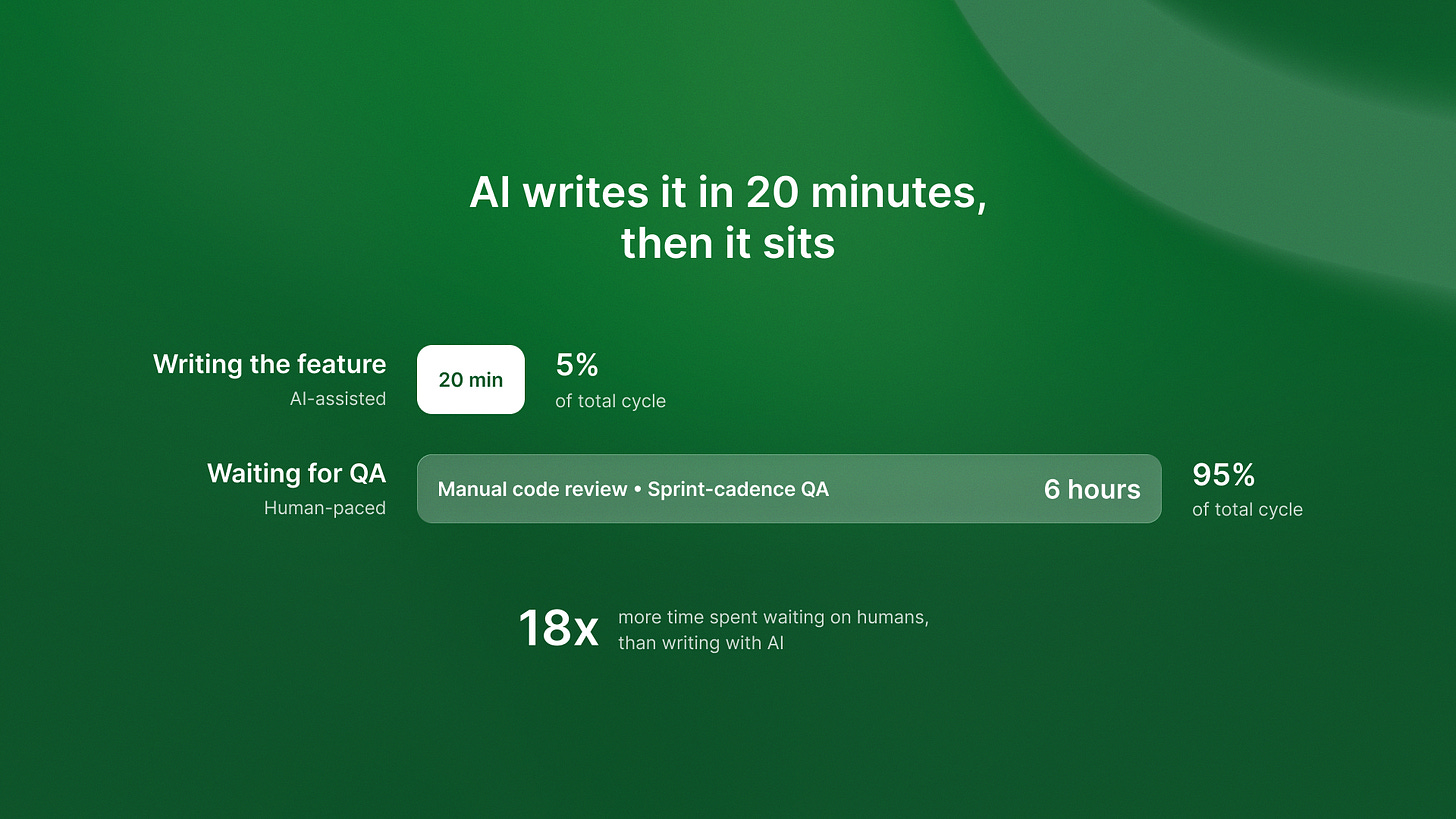

I noticed this pattern at Timecapsule recently. They track real-time profit across features, and they found something unexpected: AI-generated features cost more per hour than human-written ones, even though they shipped faster. Why? Because the review and testing overhead hadn’t shrunk along with the writing time.

Their developers spent 20 minutes writing a feature with AI help. Then they waited 6 hours for human review and manual testing. The AI eliminated the wrong bottleneck.
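The arithmetic behind that claim is worth spelling out. This short sketch uses only the two durations quoted above; the function name is mine, purely for illustration.

```python
from datetime import timedelta


def waiting_share(active: timedelta, waiting: timedelta) -> float:
    """Fraction of total lead time spent waiting rather than building."""
    total = active + waiting
    return waiting / total  # dividing two timedeltas yields a float


writing = timedelta(minutes=20)
review_and_testing = timedelta(hours=6)

share = waiting_share(writing, review_and_testing)
print(f"{share:.1%}")  # 94.7%
```

Put differently, even if writing time dropped to zero, total lead time would shrink by only about 5%. The remaining 95% sits in review and testing.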

Here’s what they changed: they rebuilt their testing pipeline around autonomous validation. Instead of waiting for human QA, they implemented continuous automated testing that validates AI-generated code in real time. Features now go from AI generation to production-ready in under 2 hours, and test coverage is better than it was with the manual process.

The competitive advantage went to redesigning the entire development lifecycle, not just adding AI to the front end.

What needs rebuilding

Three core areas break when you layer high-volume AI generation onto legacy workflows: architecture, testing, and deployment.

Architecture: from review-heavy to validation-native

Traditional architecture assumes expensive-to-write, cheap-to-review code. AI flips this. Code is now cheap to write, expensive to validate.

Your architecture needs autonomous testing at every layer. Not after code is written. During generation. Real-time validation that catches issues while the AI is still in context.

Example: Our work with Islands, managing fractional CTO services across multiple clients, showed this clearly. One client was using AI to generate API integrations across 15 different services. Traditional approach: write all the code, then test integration points manually. New approach: AI generates code with embedded validation hooks that test integration contracts in real time during generation.

Result: Integration bugs dropped 67% and deployment time went from 5 days to 8 hours.
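I can’t share how that client’s hooks were built, so treat the following as a rough sketch of the idea only. It assumes a contract is just a mapping of required field names to types; the `PAYMENT_CONTRACT` fields and the sample payload are hypothetical.

```python
from typing import Any

# Hypothetical contract for one downstream service: field name -> expected type.
PAYMENT_CONTRACT: dict[str, type] = {
    "transaction_id": str,
    "amount_cents": int,
    "currency": str,
}


def contract_violations(payload: dict[str, Any], contract: dict[str, type]) -> list[str]:
    """Return human-readable violations; an empty list means the payload conforms."""
    problems = []
    for field, expected in contract.items():
        if field not in payload:
            problems.append(f"missing field: {field}")
        elif not isinstance(payload[field], expected):
            actual = type(payload[field]).__name__
            problems.append(f"{field}: expected {expected.__name__}, got {actual}")
    return problems


# Output of a generated integration, checked as soon as it is produced.
generated_payload = {"transaction_id": "tx_123", "amount_cents": "1999"}
print(contract_violations(generated_payload, PAYMENT_CONTRACT))
# ['amount_cents: expected int, got str', 'missing field: currency']
```

Because the check returns a list of messages rather than raising, the violations can be fed straight back to the generator while it still has the integration in context. A real setup would more likely use a schema standard than hand-rolled type checks.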

Testing: from periodic to continuous

When 41% of your codebase is AI-generated, you can’t test in sprints anymore. The volume is too high. You need continuous autonomous testing that keeps pace with AI code generation.

This means rebuilding your testing infrastructure around automation-first workflows. Not manual testing with some automation. Pure autonomous testing with human oversight.

We wrote about the economics of this shift in our AI agent ROI breakdown. The key insight: autonomous testing systems deliver compound returns because they scale with code volume without linear cost increases. Manual testing creates a ceiling on how fast you can ship.
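A simple cost model shows why the returns compound. This is a sketch under stated assumptions: manual validation costs about the same per feature, while automation has an upfront cost plus a small per-feature cost. Every dollar figure below is made up for illustration.

```python
def manual_cost(features: int, cost_per_feature: float) -> float:
    """Manual validation: every feature costs roughly the same human effort."""
    return features * cost_per_feature


def automated_cost(features: int, fixed_cost: float, marginal_cost: float) -> float:
    """Automated validation: upfront build cost plus a small per-feature cost."""
    return fixed_cost + features * marginal_cost


def break_even_features(cost_per_feature: float, fixed_cost: float, marginal_cost: float) -> float:
    """Feature count at which automation becomes cheaper than manual testing."""
    if cost_per_feature <= marginal_cost:
        raise ValueError("automation never pays off if it costs more per feature")
    return fixed_cost / (cost_per_feature - marginal_cost)


# Hypothetical figures, chosen only to make the arithmetic easy to follow.
MANUAL_PER_FEATURE = 500.0
AUTOMATION_FIXED = 48_000.0
AUTOMATION_PER_FEATURE = 20.0

print(break_even_features(MANUAL_PER_FEATURE, AUTOMATION_FIXED, AUTOMATION_PER_FEATURE))  # 100.0
print(manual_cost(300, MANUAL_PER_FEATURE))  # 150000.0
print(automated_cost(300, AUTOMATION_FIXED, AUTOMATION_PER_FEATURE))  # 54000.0
```

Under these assumptions the two approaches cost the same at 100 features. Past that point the gap widens with every feature shipped, and higher AI output means you reach that point sooner.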

Deployment: from gated to continuous

Your deployment gates were designed for human-paced releases. Weekly or bi-weekly cycles made sense when writing and testing features took weeks. With AI generation, that cadence becomes artificial friction.

The teams winning right now are moving to continuous deployment with automated rollback: ship small changes constantly, monitor in real time, and roll back automatically if issues emerge. This requires rethinking your entire deployment architecture, but it’s the only way to match the new velocity.
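The rollback decision itself can be very small. Here is a minimal sketch, assuming the deploy system collects request and error counts after each release. The `HealthSample` type, the 2% error threshold, and the 100-request minimum are all illustrative choices, not recommendations.

```python
from dataclasses import dataclass


@dataclass
class HealthSample:
    requests: int
    errors: int


def should_roll_back(samples: list[HealthSample], max_error_rate: float = 0.02,
                     min_requests: int = 100) -> bool:
    """Decide whether a fresh release should be rolled back.

    Waits until there is enough traffic to judge, then compares the
    observed error rate against the threshold.
    """
    total_requests = sum(s.requests for s in samples)
    total_errors = sum(s.errors for s in samples)
    if total_requests < min_requests:
        return False  # not enough data yet; keep monitoring
    return total_errors / total_requests > max_error_rate


healthy = [HealthSample(500, 3), HealthSample(480, 2)]
failing = [HealthSample(500, 30), HealthSample(450, 41)]
print(should_roll_back(healthy))  # False
print(should_roll_back(failing))  # True
```

In practice you would compare against the previous release’s baseline instead of a fixed threshold, but the shape is the same: a machine-checkable condition replaces the human sign-off gate.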

The compounding advantage timeline

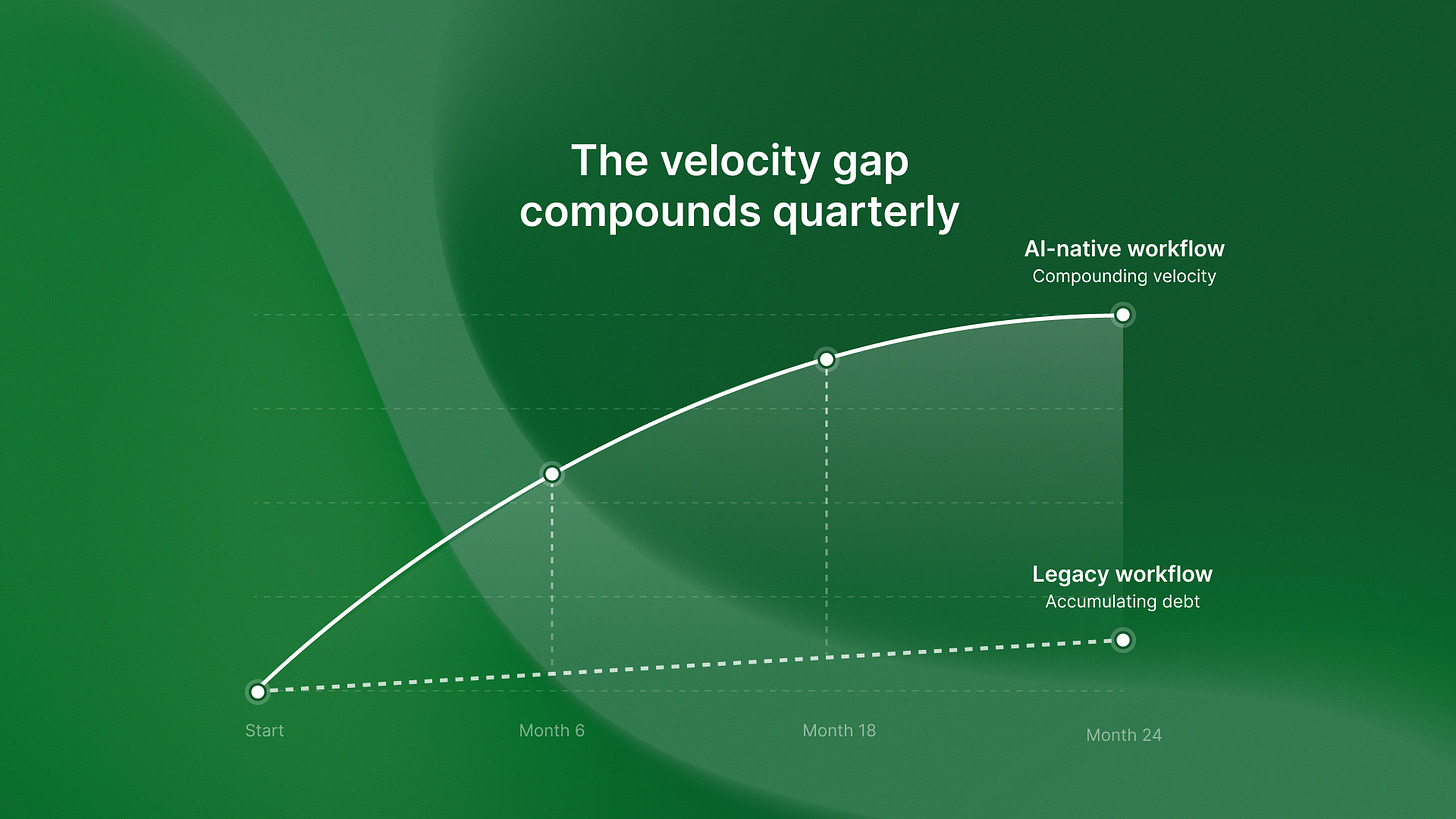

Here is the strategic reality. Teams that rebuild their development lifecycle around AI-native workflows in 2026 will gain an advantage, and they will keep it for 18 to 24 months before those workflows become table stakes.

The math is simple. If your competitors still use old workflows with AI added on, they create technical debt with every AI-generated feature. Meanwhile, you’re building validation velocity that compounds quarter over quarter. After 18 months, the gap becomes nearly impossible to close without a complete rebuild.

I saw this play out with a client who rebuilt their testing infrastructure around autonomous validation early. Six months later, they were shipping features 4x faster than competitors with similar engineering headcount. A year after that, the velocity gap was 7x. The competitors finally started rebuilding, but they were now 18 months behind. They also had technical debt to pay down first.

That said, I need to acknowledge when traditional workflows still make sense. Highly regulated industries with 20+ year system lifespans can’t move to continuous deployment. Teams under 10 engineers might not have the complexity to justify autonomous testing infrastructure. Some systems require human judgment that AI can’t replicate yet.

But for most Series B+ startups with 50 to 500 engineers shipping software at scale, the workflow mismatch is real. It gets worse every quarter.

If you want to learn how to build these systems, we made a detailed playbook.

See our 30-day AI agent guide. It covers the perception, reasoning, action, and learning layers you need to build autonomous validation that scales with AI code generation.

The fundamental question isn’t whether to adopt AI coding tools. You’ve already done that. The question is whether you’ll rebuild your workflows to match, or keep adding technical debt until the gap forces a painful migration later.

The teams redesigning their development lifecycle right now are building the infrastructure for the next decade of software development. The ones waiting are betting that human-paced workflows can somehow absorb AI-paced code generation.

That bet hasn’t worked out well so far.