Why you're building assistants when you need agents

I’ve been talking to a lot of engineering leaders lately who are making the same mistake.

They have budget. They have mandate. They know AI transformation is the strategic priority for 2026. But when I ask what they’re building, they describe assistants when their business actually needs agents.

This isn’t a semantic distinction. It’s the difference between 100%+ ROI and marginal productivity gains.

Here’s the reality: 62% of organizations expect over 100% ROI from agentic AI investments, according to the Enterprise AI Survey 2025. Companies using agentic workflows see 1.7x ROI on average compared to assistant implementations (Second Talent AI Agents Statistics 2026).

But most engineering teams don’t have a clear framework for the foundational architecture decision. They’re defaulting to assistant builds because that’s what they see in the market. GitHub Copilot suggests code. ChatGPT drafts emails. Salesforce Einstein surfaces insights.

Assistants enhance. Agents replace.

That distinction shapes everything that follows: your infrastructure needs, your production timeline, and your business value. It determines whether you are replacing a workflow or just speeding up current processes.

The choice you make in the next 90 days will decide whether you build competitive moats or chase small gains while competitors automate entire workflows.

The architecture decision nobody’s talking about

Let me frame this properly.

Assistants augment human workflows. They suggest, recommend, and accelerate. You still make the decisions. You still execute the critical steps. The human remains in the loop at every decision point.

Agents replace entire workflows through autonomous execution. They perceive context, make decisions, take actions, and handle exceptions without human oversight. The workflow runs end-to-end without you.
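To make the distinction concrete, here is a minimal sketch of the two control flows. Everything in it is hypothetical and for illustration only: `Ticket`, `draft_reply`, `assistant_flow`, and `agent_flow` are names I made up, and `draft_reply` stands in for whatever model call a real system would make. The point is only where the human decision sits.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Ticket:
    id: int
    text: str


def draft_reply(ticket: Ticket) -> str:
    # Hypothetical stand-in for a model call.
    return f"Re #{ticket.id}: thanks for reporting '{ticket.text}'."


def assistant_flow(
    ticket: Ticket,
    review: Callable[[str], Optional[str]],
    send: Callable[[str], None],
) -> bool:
    """Assistant: nothing is sent unless a human approves (or edits) the draft."""
    draft = draft_reply(ticket)
    approved = review(draft)  # human decision point; None means rejected
    if approved is None:
        return False
    send(approved)
    return True


def agent_flow(
    ticket: Ticket,
    send: Callable[[str], None],
    handle_failure: Callable[[Ticket, Exception], None],
) -> bool:
    """Agent: acts directly and routes failures to its own exception handler."""
    try:
        send(draft_reply(ticket))
        return True
    except Exception as exc:
        handle_failure(ticket, exc)  # no approval step anywhere in this path
        return False
```

If your system has a `review` callback on the critical path, it is an assistant, whatever the product page says.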

I was talking to a team last week building what they called an “AI agent” for customer support. When I dug into the architecture, it was suggesting responses that humans reviewed and edited before sending. That’s an assistant. A very good one, but still fundamentally human-in-the-loop.

Contrast that with what we built at QA flow. The system takes in Figma designs, creates test cases, and runs them on its own. It files GitHub issues when it finds bugs. It also runs regression suites on every deploy. No human reviews test cases. No approval workflow before filing issues. It’s true workflow replacement.

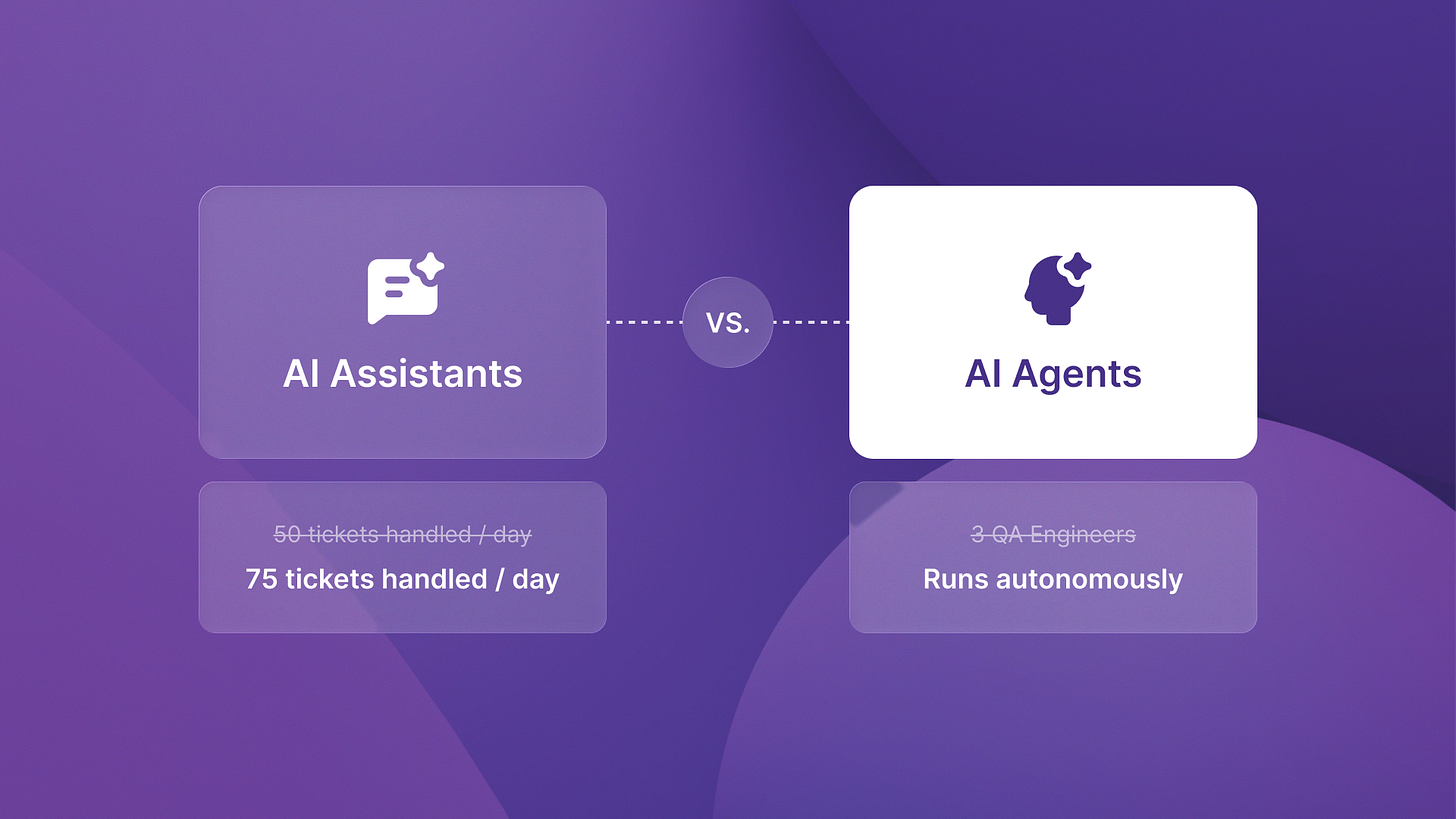

The economic difference is structural. Assistants deliver productivity multipliers. If your support team handles 50 tickets per day today, maybe they handle 75 with an assistant. That’s a 50% productivity gain. Valuable, but you still need the same headcount.

Agents eliminate labor costs entirely. The workflow that required three QA engineers now runs autonomously. That’s not a multiplier. That’s replacement economics.

Here’s what caught my attention in the data. 88% of senior executives plan to increase AI budgets in the next 12 months, citing agentic AI opportunities (PwC 2025). The money is flowing toward workflow replacement, not workflow enhancement.

Companies that build the right architecture now will capture those budgets. Companies that spend 2026 building assistants will be rebuilding from scratch in 2027.

The three-part evaluation framework

So how do you actually decide? I’ve been using a three-part framework with portfolio companies that maps directly to architecture choice.

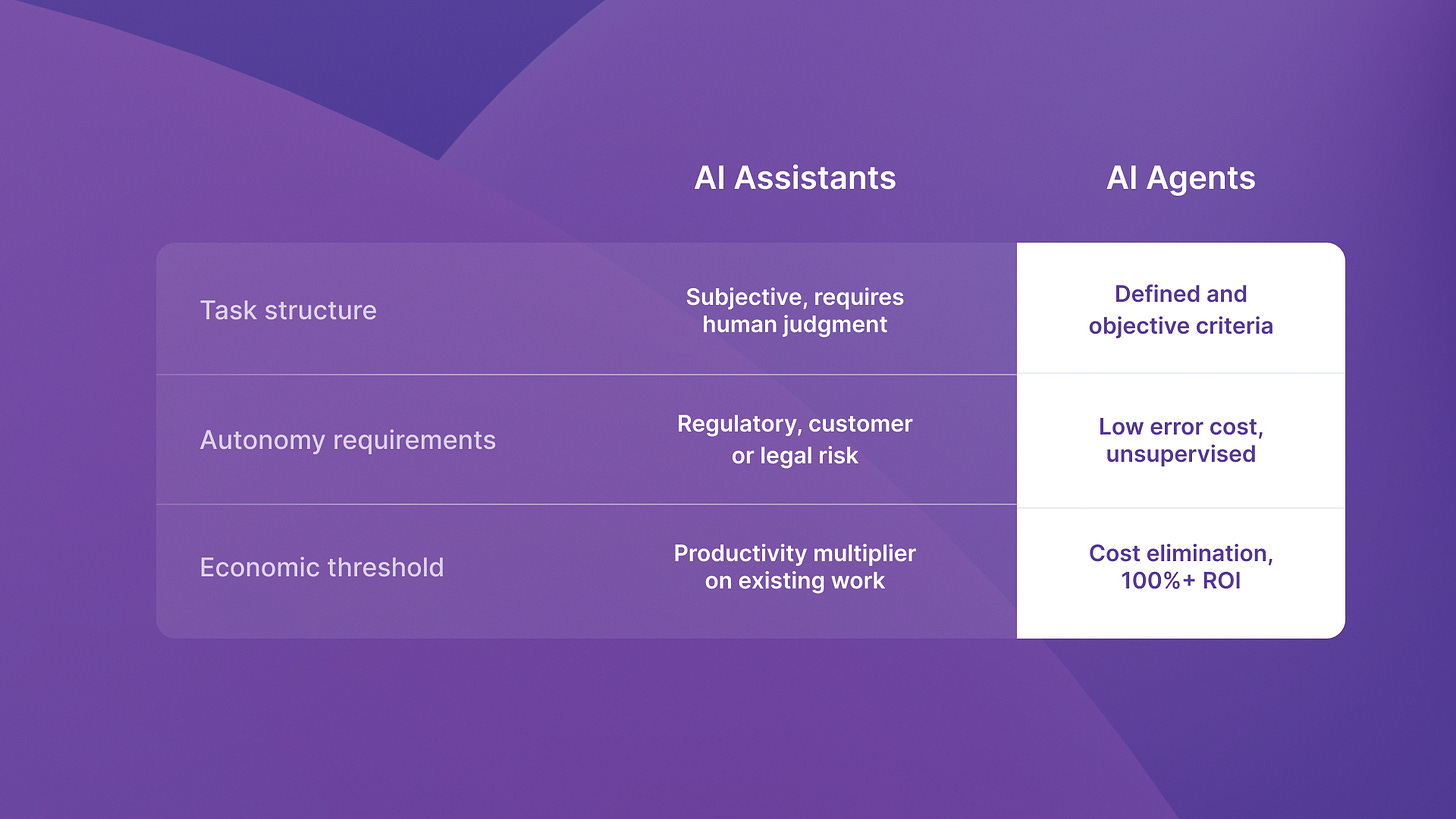

Task structure: defined vs. ambiguous

Agents need well-defined task boundaries. The workflow must have clear inputs, deterministic steps, and measurable outputs. If the task requires creative judgment, contextual interpretation, or subjective evaluation at multiple decision points, you’re looking at assistant architecture.

Example: Bug detection from visual designs is well-defined. The input is a Figma file. The output is a list of UI inconsistencies with screenshots. The evaluation criteria (spacing, alignment, color matching) are objective and measurable.

Content strategy is ambiguous. What makes a good headline? When is a metaphor effective? Those require human judgment at every step. Assistant architecture fits better.

Last month I was reviewing a project at Islands where a client wanted to automate technical documentation. We mapped the workflow and found that 40% of decisions required judging audience sophistication or making strategic trade-offs between completeness and readability. That’s assistant territory.

But that same client used a deployment process with 23 manual steps. Each step had clear success criteria and rollback procedures. That’s agent territory. We’re building autonomous deployment orchestration that eliminates the entire manual workflow.

Autonomy requirements: supervised vs. unsupervised

This is about error tolerance and decision authority.

If mistakes create immediate customer impact or regulatory risk, or require expensive rollback, you need human oversight. That’s assistant architecture by definition. The human reviews before action.

If the system can make decisions, take actions, and handle exceptions within acceptable risk, you can build agent architecture. The key question: Can you tolerate autonomous decision-making without approval workflows?

We built ReachSocial as an agent specifically because LinkedIn engagement has low error cost. If the system engages with a post that’s tangentially relevant rather than perfectly relevant, the downside is minimal. The workflow can run unsupervised.

Contract review for legal compliance? That needs human sign-off at multiple points. Assistant architecture makes sense even if you automate large portions of the analysis.

Economic thresholds: productivity gain vs. cost elimination

This is where the architecture choice becomes a financial decision.

If your goal is helping existing teams work faster, assistant architecture delivers that. But to justify the infrastructure costs, timeline, and engineering resources that agents need, you need workflow replacement economics.

Real-world agent deployments use tools like Temporal for workflow orchestration, PostgreSQL for state management, and monitoring systems like Datadog. That’s significant infrastructure overhead. It only makes economic sense when you’re targeting cost elimination, not productivity multiplication.

I saw this play out recently with a team building autonomous testing. They spent eight weeks on infrastructure: state management, error recovery, retry logic, monitoring. That investment only made sense because they were eliminating manual QA costs entirely. If the goal was just helping QA teams work faster, the ROI wouldn’t have justified the infrastructure overhead.

Here’s the calculation:

If you spend six months and major engineering resources, you need to cut whole workflows to justify it. If you’re delivering productivity multipliers, simpler assistant architecture gets you to production faster with better ROI.
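If you want to run that calculation with your own numbers, a rough sketch might look like this. The model is my simplification, not a standard formula: it assumes an assistant's value is the team's cost times the productivity gain, and an agent's value is the labor cost it removes minus what it costs to run. Every figure in the example is a placeholder, not data from a real deployment.

```python
def assistant_annual_value(team_cost: float, productivity_gain: float) -> float:
    """Assumed model: same headcount, more output, valued at team cost x gain."""
    return team_cost * productivity_gain


def agent_annual_value(workflow_labor_cost: float, annual_run_cost: float) -> float:
    """Assumed model: labor cost removed minus infrastructure and model spend."""
    return workflow_labor_cost - annual_run_cost


def payback_months(build_cost: float, annual_value: float) -> float:
    """Months until cumulative value covers the build cost."""
    if annual_value <= 0:
        return float("inf")  # never pays back
    return 12 * build_cost / annual_value


if __name__ == "__main__":
    # Placeholder inputs: a three-person team at 120k each.
    team_cost = 3 * 120_000
    assistant = payback_months(40_000, assistant_annual_value(team_cost, 0.5))
    agent = payback_months(180_000, agent_annual_value(team_cost, 60_000))
    print(f"assistant payback: {assistant:.1f} months")
    print(f"agent payback: {agent:.1f} months")
```

The comparison is most useful when you vary `productivity_gain` and `annual_run_cost` and see where each architecture stops paying for itself.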

Production deployment: what actually changes

The architecture choice determines your infrastructure reality.

Assistants integrate into existing workflows. They plug into Slack, surface suggestions in your IDE, and add intelligence layers to current tools. Deployment complexity is relatively contained because humans remain in control.

Agents require orchestration infrastructure. You need state management so the system knows what it’s doing across sessions. Error recovery so failures don’t cascade. Monitoring so you catch issues before they compound. Webhook handling so agents can respond to external events.
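Here is a small sketch of what two of those pieces, state management and error recovery, look like in code. It is an illustration under assumptions, not a production design: SQLite stands in for a real database so the example is self-contained, and the table layout and the `run_step` helper are hypothetical. A dedicated orchestrator gives you this behavior, and much more, out of the box.

```python
import sqlite3
import time
from typing import Callable


def init_state(conn: sqlite3.Connection) -> None:
    # One row per (run, step) so a restarted process can see what already finished.
    conn.execute(
        "CREATE TABLE IF NOT EXISTS steps ("
        "run_id TEXT, step TEXT, status TEXT, PRIMARY KEY (run_id, step))"
    )
    conn.commit()


def is_done(conn: sqlite3.Connection, run_id: str, step: str) -> bool:
    row = conn.execute(
        "SELECT status FROM steps WHERE run_id = ? AND step = ?", (run_id, step)
    ).fetchone()
    return row is not None and row[0] == "done"


def record(conn: sqlite3.Connection, run_id: str, step: str, status: str) -> None:
    conn.execute(
        "INSERT OR REPLACE INTO steps VALUES (?, ?, ?)", (run_id, step, status)
    )
    conn.commit()


def run_step(
    conn: sqlite3.Connection,
    run_id: str,
    step: str,
    action: Callable[[], None],
    max_attempts: int = 3,
    base_delay: float = 0.0,
) -> str:
    """Run one step with retries; skip it if an earlier run already completed it."""
    if is_done(conn, run_id, step):
        return "skipped"
    for attempt in range(1, max_attempts + 1):
        try:
            action()
            record(conn, run_id, step, "done")
            return "done"
        except Exception:
            if attempt == max_attempts:
                record(conn, run_id, step, "failed")
                raise  # surface the failure instead of letting it cascade silently
            time.sleep(base_delay * 2 ** (attempt - 1))  # exponential backoff
    return "failed"  # only reached if max_attempts < 1
```

Recording each step's status is what lets a crashed run resume where it left off instead of repeating side effects, which is the property an assistant rarely needs because a human is watching.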

When we built the autonomous testing platform for QA flow, we used Temporal for workflow orchestration. Every test run is a durable workflow. It survives process restarts, handles failures well, and keeps state throughout the test lifecycle. PostgreSQL tracks test history, flakiness patterns, and regression coverage. Datadog monitors success rates, execution times, and error patterns.

That infrastructure lets us deploy autonomous website audits that run end-to-end without human oversight. But it took months to build correctly.

Assistant architecture skips most of that complexity. You’re not replacing workflows, so you don’t need the same level of reliability and autonomous error handling.

The infrastructure choice follows from the architecture choice, which follows from your evaluation framework.

The competitive window is right now

Here’s what I’m watching in Q1 2026.

88% of senior executives are increasing AI budgets this year. That money is flowing toward companies that demonstrate workflow replacement economics, not incremental productivity gains.

The companies moving fast with agent architecture will capture those budgets and build competitive moats. The companies spending 2026 building assistants will face a painful reality in 12 to 18 months. They will need to rebuild from scratch when the market demands autonomous workflows.

I wrote about this pattern in detail when comparing autonomous agents to assistants. The ROI gap isn’t marginal. It’s structural.

The architecture decision happens once. Get it wrong and you’re not iterating. You’re rebuilding.

Think through your task structure. Evaluate your autonomy requirements honestly. Calculate whether you need productivity multiplication or cost elimination. Those three criteria determine your architecture. Everything else follows.
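As a rough illustration, the three criteria can be written down as a checklist in code. This is a deliberately simplistic sketch: `WorkflowProfile` and `recommend_architecture` are hypothetical names, and real decisions involve degrees rather than booleans. It assumes an agent is only warranted when all three criteria point the same way.

```python
from dataclasses import dataclass


@dataclass
class WorkflowProfile:
    # Clear inputs, deterministic steps, measurable outputs.
    well_defined_task: bool
    # Mistakes are cheap enough to allow action without approval.
    tolerates_unsupervised_errors: bool
    # The target is replacing the workflow, not speeding it up.
    goal_is_cost_elimination: bool


def recommend_architecture(profile: WorkflowProfile) -> str:
    """Return 'agent' only when all three criteria hold; otherwise 'assistant'."""
    if (
        profile.well_defined_task
        and profile.tolerates_unsupervised_errors
        and profile.goal_is_cost_elimination
    ):
        return "agent"
    return "assistant"


if __name__ == "__main__":
    content_strategy = WorkflowProfile(False, True, False)
    print(recommend_architecture(content_strategy))
```

Failing any single criterion falls back to an assistant, which mirrors the argument above: ambiguous tasks, high error costs, or productivity-only goals each rule out autonomous execution on their own.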

Companies that make the right choice now will benefit in 2026 by capturing the gains from replacing workflows. Meanwhile, competitors will waste engineering time on assistants that cannot scale and will not meet the ROI the business needs.

The window to establish competitive advantage through autonomous agents is narrowing. Not because the technology is getting harder. Because the architecture choice is becoming obvious to everyone.

Choose accordingly.