Why your AI agent works in demos but fails in production

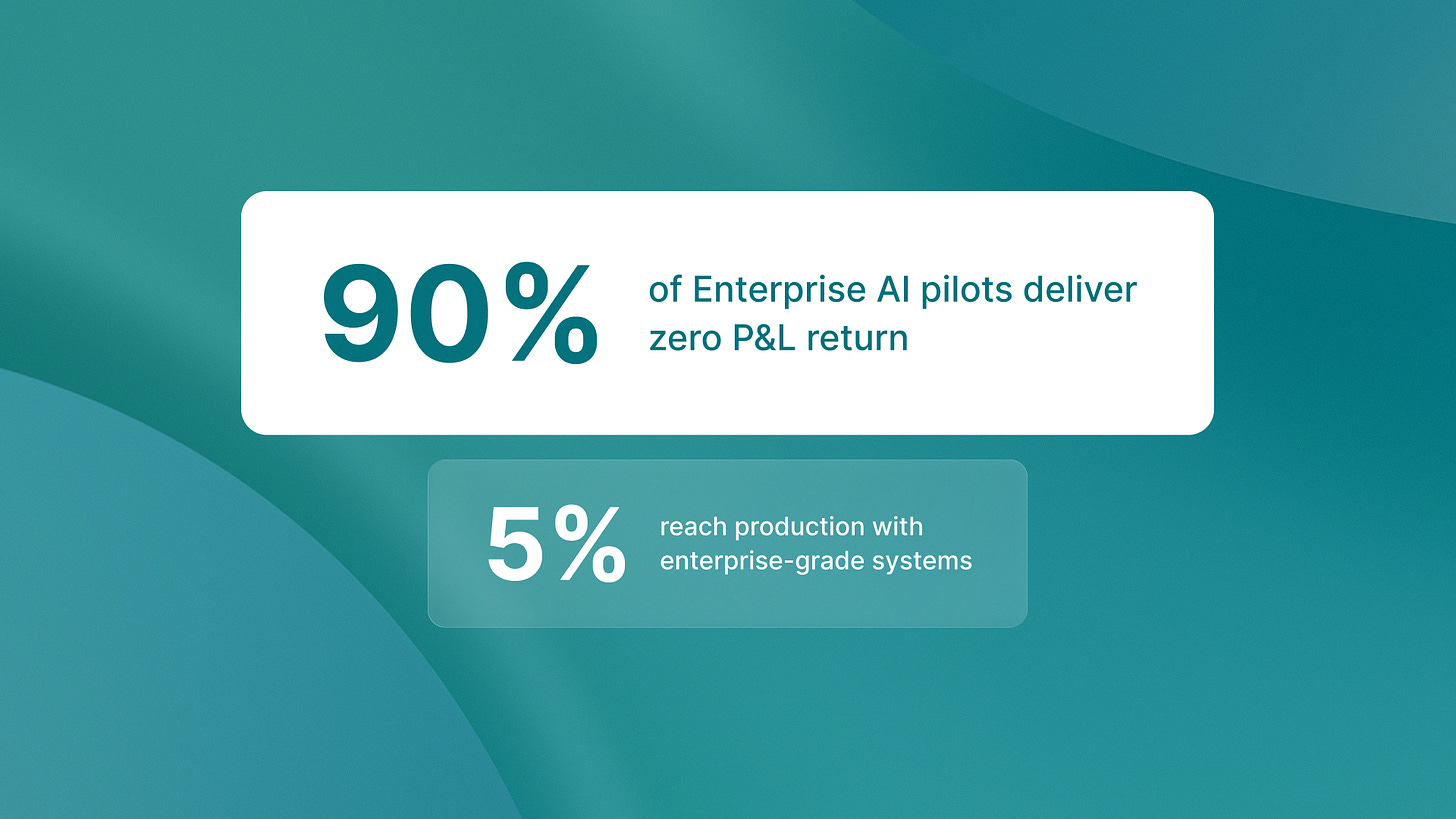

I need to tell you something most AI consultants won’t: 95% of enterprise AI pilots show no measurable impact on P&L.

That statistic comes from MIT NANDA’s 2025 State of AI in Business report. It’s not a capability problem. It’s an architecture problem.

Most companies are building AI agents with demo-grade architecture that collapses under production loads. The systems handle happy paths beautifully. They impress stakeholders in controlled environments. Then they hit real business complexity and require constant human intervention to function.

Gartner predicts over 40% of agentic AI projects will be canceled by the end of 2027. The reasons: escalating costs, unclear business value, and inadequate risk controls. All symptoms of the same architectural mistake.

The gap between pilot and production

Here’s what the data shows. Only 5% of organizations reach production with enterprise-grade AI systems. 60% get stuck evaluating tools. 20% reach pilot stage before hitting architectural walls they can’t overcome.

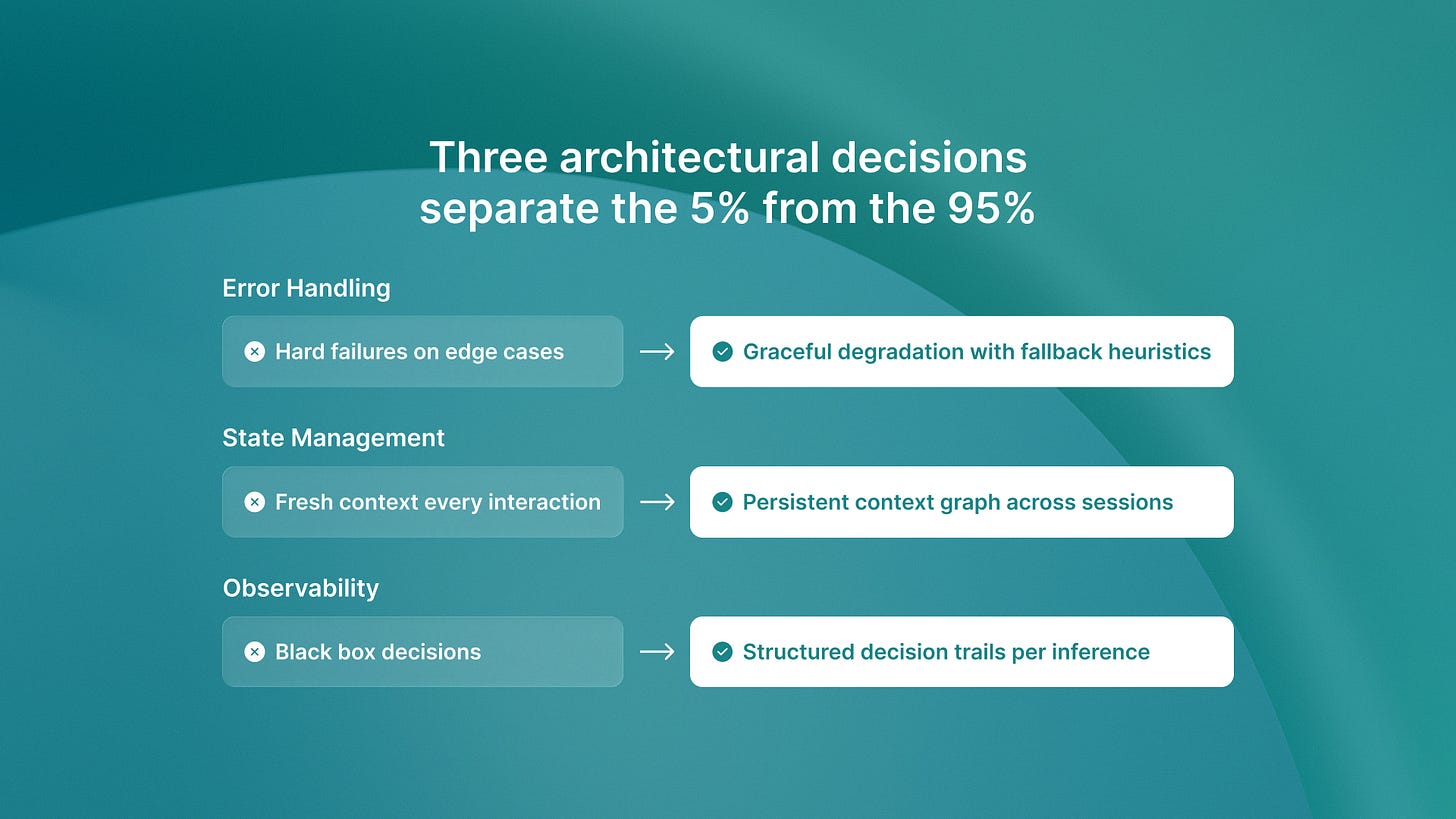

The gap isn’t about scaling compute. It’s about fundamental architectural decisions around three things: error handling, state management, and observability.

Demo-grade agents operate in controlled environments. Production agents operate in chaos. That difference determines everything.

What demo-grade architecture looks like

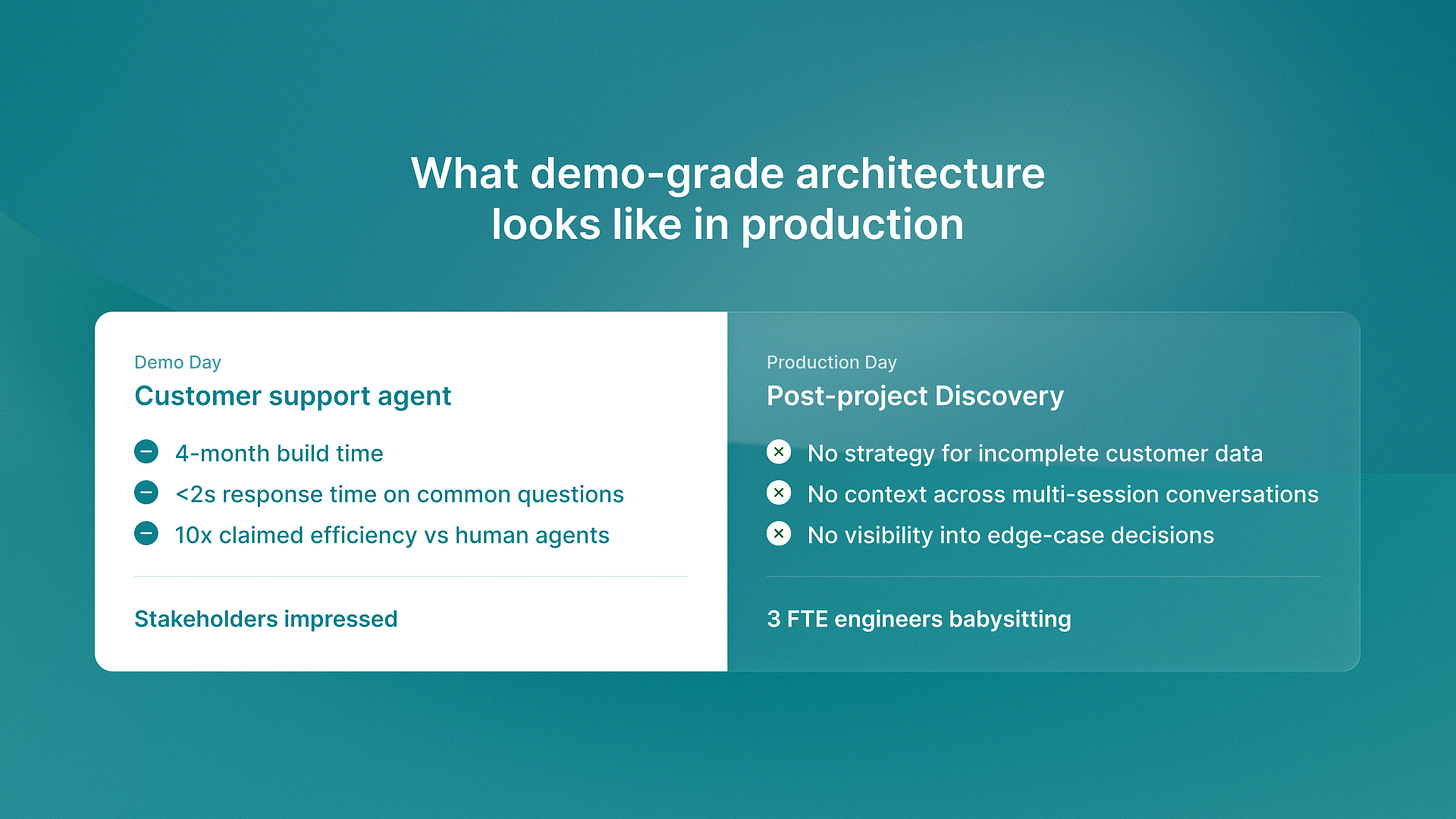

Last month I was talking to a Series B company that spent four months building an AI agent for customer support. The demo was flawless. It handled common questions perfectly. Response time under two seconds. Natural language processing that felt magical.

Then they deployed to production.

Within 48 hours they discovered the system had no strategy for handling incomplete customer data. No way to maintain context when conversations spanned multiple sessions. No visibility into why the agent made specific decisions when edge cases appeared.

The economic case evaporated. What looked like 10x efficiency in demos became a cost center. It needed three full-time engineers to babysit edge cases.

This is the pattern. Demo-grade architecture optimizes for the happy path. Production-grade architecture assumes everything will break.

The three architectural decisions that matter

I’ve shipped autonomous agents across QA flow, ReachSocial, and Timecapsule. Here’s what separates systems that work from systems that collapse.

Error handling: graceful degradation vs hard failures

Demo-grade agents fail catastrophically when inputs don’t match expected patterns. Production-grade agents degrade gracefully.

At QA flow, we handle 2,400+ test suites monthly. Our agents encounter malformed Figma designs, incomplete specifications, and edge cases we never anticipated. The system doesn’t crash. It logs the uncertainty, falls back to simpler heuristics, and flags cases for human review.

That’s architectural. We built error handling into the core decision loop from day one. Not as an afterthought. As a primary design constraint.
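To make the idea concrete, here is a minimal Python sketch of a decision step that degrades instead of crashing. It is an illustration under stated assumptions, not the QA flow implementation: the `Decision` type, the `decide` function, and the two planning strategies are hypothetical names invented for this example.

```python
import logging
from dataclasses import dataclass
from typing import Callable, Optional

logger = logging.getLogger("agent.fallback")


@dataclass
class Decision:
    value: Optional[str]   # the result, or None if no tier could produce one
    source: str            # which tier answered: "primary", "heuristic", or "none"
    needs_review: bool     # True when a human should look at this case


def decide(
    spec: dict,
    primary: Callable[[dict], str],
    heuristic: Callable[[dict], str],
) -> Decision:
    """Try the primary strategy, fall back to a simpler one, and never raise."""
    try:
        return Decision(primary(spec), "primary", needs_review=False)
    except Exception as exc:
        # Log the uncertainty instead of letting it take the agent down.
        logger.warning("primary strategy failed: %r", exc)
    try:
        # The simpler heuristic still produces output, but gets flagged.
        return Decision(heuristic(spec), "heuristic", needs_review=True)
    except Exception as exc:
        logger.error("heuristic strategy failed: %r", exc)
    # Nothing worked: return an explicit "no answer" for human review.
    return Decision(None, "none", needs_review=True)


# Hypothetical strategies, for illustration only.
def plan_from_full_spec(spec: dict) -> str:
    return f"test {spec['component']} against {spec['design_url']}"


def plan_from_name_only(spec: dict) -> str:
    return f"smoke-test {spec['component']}"


if __name__ == "__main__":
    incomplete = {"component": "login"}  # no design_url, so the primary tier fails
    print(decide(incomplete, plan_from_full_spec, plan_from_name_only))
```

The point of the design is that the caller always gets a `Decision` back, and the `source` and `needs_review` fields say how much to trust it.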

Demo systems skip this because controlled environments don’t surface the failure modes. Production systems that skip this become technical debt you can’t remediate.

State management: session persistence vs fresh context

Demo-grade agents treat every interaction as independent. Production-grade agents maintain context across sessions and time.

I noticed this at ReachSocial when we were building LinkedIn engagement orchestration. Demo agents could draft a single comment beautifully. But real engagement campaigns span weeks. The agent needs to remember previous interactions, understand relationship history, and maintain consistent voice across 20+ touchpoints.

We built state management using Temporal for workflow orchestration. Every interaction updates a persistent context graph. The agent doesn’t just respond to the current input. It understands the full relationship arc.
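As a rough illustration of what persistent context means, the sketch below stores interaction history per contact in SQLite so a later session can pick up where the last one ended. This is a deliberately simple stand-in using only the Python standard library. It is not Temporal and not the ReachSocial context graph; `ContextStore`, its methods, and the table layout are assumptions made for the example.

```python
import json
import sqlite3


class ContextStore:
    """Persists per-contact interaction history so a new session can resume."""

    def __init__(self, path: str = ":memory:") -> None:
        # Pass a file path instead of ":memory:" to survive process restarts.
        self.db = sqlite3.connect(path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS interactions ("
            "id INTEGER PRIMARY KEY AUTOINCREMENT, "
            "contact_id TEXT NOT NULL, "
            "payload TEXT NOT NULL)"
        )

    def record(self, contact_id: str, interaction: dict) -> None:
        # The connection context manager commits on success, rolls back on error.
        with self.db:
            self.db.execute(
                "INSERT INTO interactions (contact_id, payload) VALUES (?, ?)",
                (contact_id, json.dumps(interaction)),
            )

    def history(self, contact_id: str, limit: int = 20) -> list[dict]:
        """Return up to `limit` most recent interactions, oldest first."""
        rows = self.db.execute(
            "SELECT payload FROM interactions WHERE contact_id = ? "
            "ORDER BY id DESC LIMIT ?",
            (contact_id, limit),
        ).fetchall()
        return [json.loads(row[0]) for row in reversed(rows)]


if __name__ == "__main__":
    store = ContextStore()
    store.record("contact-1", {"type": "comment", "text": "Great post on testing."})
    store.record("contact-1", {"type": "reply", "text": "Thanks for the follow-up."})
    # Before drafting the next touchpoint, the agent loads the relationship so far.
    print(store.history("contact-1"))
```

Before each new touchpoint, the agent would load `history(contact_id)` into its prompt or planning step, so the response reflects the whole relationship and not just the latest message.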

This is one reason so many projects get canceled. Demo systems look economically viable until production complexity reveals you’re rebuilding state management from scratch.

Observability: black box vs transparent decision trails

Demo-grade agents are black boxes. Production-grade agents expose decision trails.

Here’s what I mean. When an autonomous agent makes a decision that affects business outcomes, you need to understand why. Not just what it decided. Why it chose option A over option B. What weights it assigned to competing factors. Where uncertainty existed in the reasoning chain.

At Timecapsule, our agents monitor project profitability in real time. When the system flags a project as at-risk, we need transparent reasoning. Did time tracking patterns change? Did scope creep exceed thresholds? Did resource allocation shift unexpectedly?

We built structured logging for every decision point. Not as a debugging tool. As a core architectural component. The observability layer is as important as the decision layer.
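A decision trail can be as simple as one structured record per decision, capturing the inputs, the rules that fired, and the outcome. The Python sketch below shows the shape of that idea. It is not the Timecapsule implementation: the thresholds, field names, and the `assess_project` rule are all hypothetical values chosen for illustration.

```python
import json
import logging
import time
import uuid
from dataclasses import asdict, dataclass, field

logger = logging.getLogger("agent.decisions")

# Hypothetical thresholds, chosen only for this example.
BURN_THRESHOLD = 0.8    # fraction of budgeted hours already used
SCOPE_THRESHOLD = 3     # number of scope changes tolerated


@dataclass
class DecisionRecord:
    decision: str                 # what was being decided
    chosen: str                   # the option the agent picked
    alternatives: list[str]       # the options it could have picked
    inputs: dict[str, float]      # the facts the decision was based on
    reasons: list[str]            # which rules fired
    decision_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    timestamp: float = field(default_factory=time.time)


def log_decision(record: DecisionRecord) -> str:
    """Emit the record as one JSON line and return it."""
    line = json.dumps(asdict(record), sort_keys=True)
    logger.info(line)
    return line


def assess_project(
    hours_logged: float, hours_budgeted: float, scope_changes: int
) -> DecisionRecord:
    """Flag a project as at-risk and record why, not just the verdict."""
    if hours_budgeted <= 0:
        raise ValueError("hours_budgeted must be positive")
    burn = hours_logged / hours_budgeted
    reasons = []
    if burn > BURN_THRESHOLD:
        reasons.append("budget_burn_exceeded")
    if scope_changes > SCOPE_THRESHOLD:
        reasons.append("scope_creep_exceeded")
    return DecisionRecord(
        decision="project_risk",
        chosen="at_risk" if reasons else "on_track",
        alternatives=["at_risk", "on_track"],
        inputs={
            "hours_logged": hours_logged,
            "hours_budgeted": hours_budgeted,
            "scope_changes": scope_changes,
        },
        reasons=reasons,
    )


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO)
    log_decision(assess_project(hours_logged=90, hours_budgeted=100, scope_changes=1))
```

Because every record carries a `decision_id`, the inputs, and the rules that fired, an at-risk flag can be traced back to a specific cause later, which is what makes the system auditable.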

Demo systems skip this because stakeholder demos don’t require decision explanations. Production systems that skip this become unauditable, untrustworthy, and ultimately unusable.

The technical debt trap

Most companies never move beyond the evaluation phase because demo-grade architecture creates technical debt that becomes impossible to remediate once it is discovered in production.

Here’s the trap. You build a proof of concept with simple architecture. It works. Stakeholders are excited. You get budget to scale. Then you discover the foundation can’t support production requirements.

At that point you have three options. Rebuild from scratch (expensive, demoralizing). Band-aid the architecture (creates more debt). Cancel the project (the outcome Gartner predicts for over 40% of agentic AI projects).

The companies reaching the 5% success tier aren’t smarter. They’re building with production patterns from day one. They assume chaos. They design for failure. They prioritize observability over feature velocity.

We wrote about the architectural difference between AI assistants and autonomous agents last month. The ROI gap isn’t about capabilities. It’s about architectural maturity that allows true autonomy.

What production-grade looks like in practice

Production AI agents are sophisticated systems that require architectural maturity from day one. Not polish. Maturity.

At Islands, we’ve shipped agents that run unsupervised across QA testing, engagement orchestration, and time tracking. The pattern is consistent:

Error handling built into core decision loops

State management treating context as a first-class concern

Observability exposing decision reasoning, not just outcomes

Graceful degradation when complexity exceeds training

Human-in-the-loop for high-stakes edge cases

This isn’t theoretical. It’s what works when agents operate autonomously under real business complexity.

If you’re evaluating AI agent deployment, the question isn’t what the agent can do in demos. The question is whether your architecture can handle production chaos. Whether your error handling degrades gracefully. Whether your state management maintains context across sessions. Whether your observability exposes decision reasoning.

Those architecture choices decide if you’re in the 95% that deliver no return. Or in the 5% that reach production with systems that work.

The consultants won’t tell you this because they’ve never shipped production agents. But the data is clear. Demo-grade architecture collapses. Production-grade architecture scales.

You can spend months discovering architectural gaps in production. Or you can learn from operators who’ve already solved these problems. We’ve documented the real costs and economics of production AI agents. This is based on what works in practice, not what looks good in demos.

The choice is whether you want to be in the 95% or the 5%. Architecture determines everything.