Why your AI agent POC will never reach production

Every CTO who reviews foundation models for AI agents makes the same mistake. They focus on benchmark scores, but they should focus on production reliability.

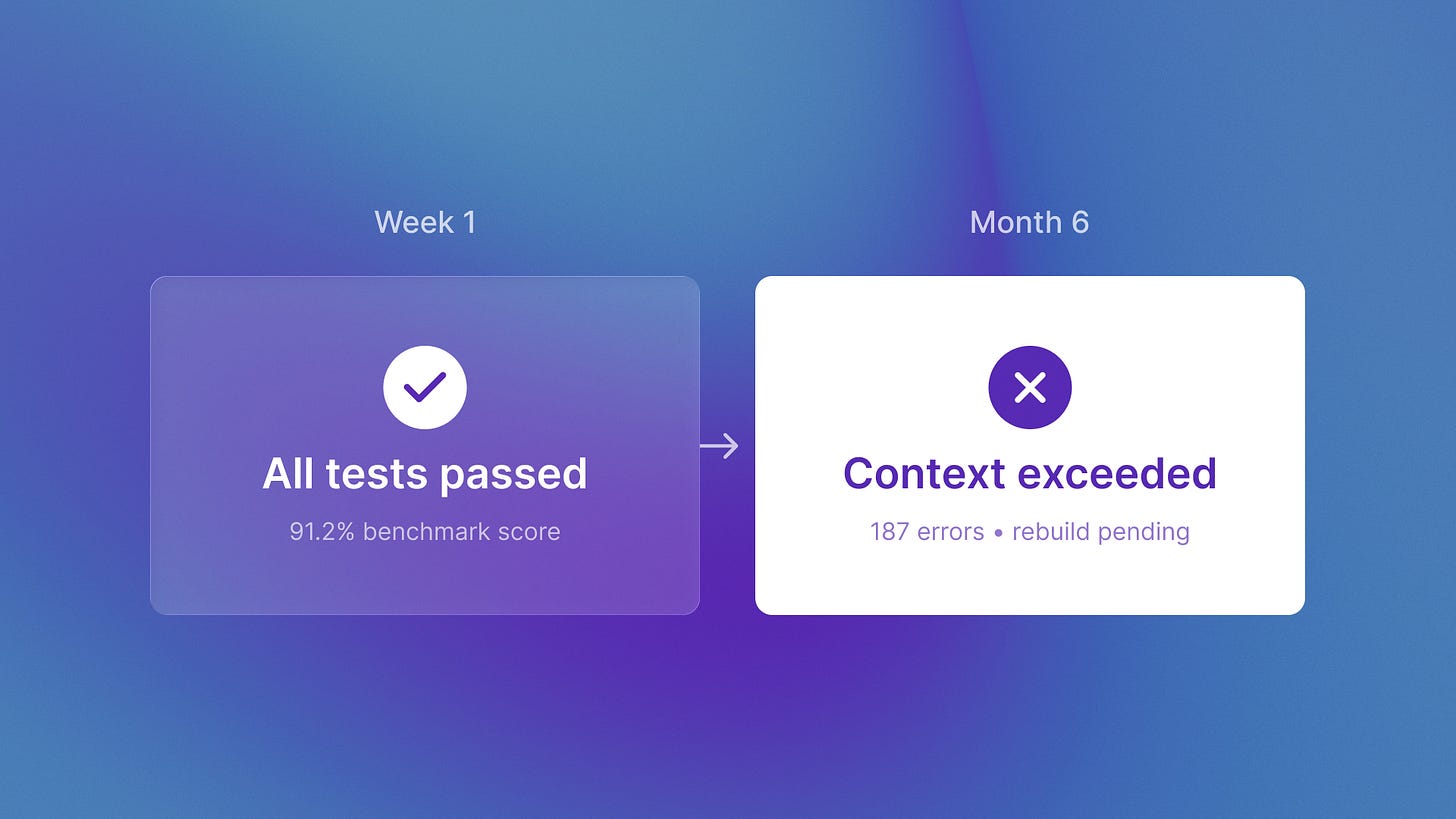

I’m writing to you about something I’ve watched happen repeatedly over the past six months. Engineering teams demo impressive AI agents in week one. By month six, they’re rebuilding from scratch or abandoning the project entirely. The pattern is clear enough that I can predict which teams will hit this wall by asking one question: how did you choose your model?

Claude Opus 4.6 just launched (February 5, 2026) with major improvements for autonomous agents. Engineering leaders are comparing specs against GPT-5.2 right now. Most will make their decision based on coding benchmarks and leaderboard rankings.

That decision will determine whether they’re rebuilding their entire agent architecture in August.

The 70% failure rate nobody talks about

Only 30% of generative AI pilots reach production. That number comes from Deloitte’s 2025 analysis, and it reflects something specific about how teams select foundation models.

The POC-to-production gap exists because model selection happens during demos when benchmarks look impressive. Not during months-long production runs when context limits, token costs, and failure modes determine viability.

Here is what matters most for production agents. Models should keep context across sessions. They should work with other agents. They should recover from errors on their own. Benchmark scores measure none of this.

I was talking to the team at QA flow last week about their autonomous testing platform. They shared something revealing about their model selection process. Initially, they evaluated models based on coding benchmark performance. Higher HumanEval scores meant better code generation, right?

What they found in production: the model that scored 3% higher on isolated coding tests could not keep context. It struggled during their multi-step test generation workflow. QA flow agents need to reason about entire application flows (Figma design to test execution to bug detection). That requires holding system architecture in working memory for hours, not generating a single function.

They rebuilt on a different model after four months. Not because the first model couldn’t code. Because it couldn’t sustain the architectural reasoning their autonomous system required.

What terminal-bench actually measures

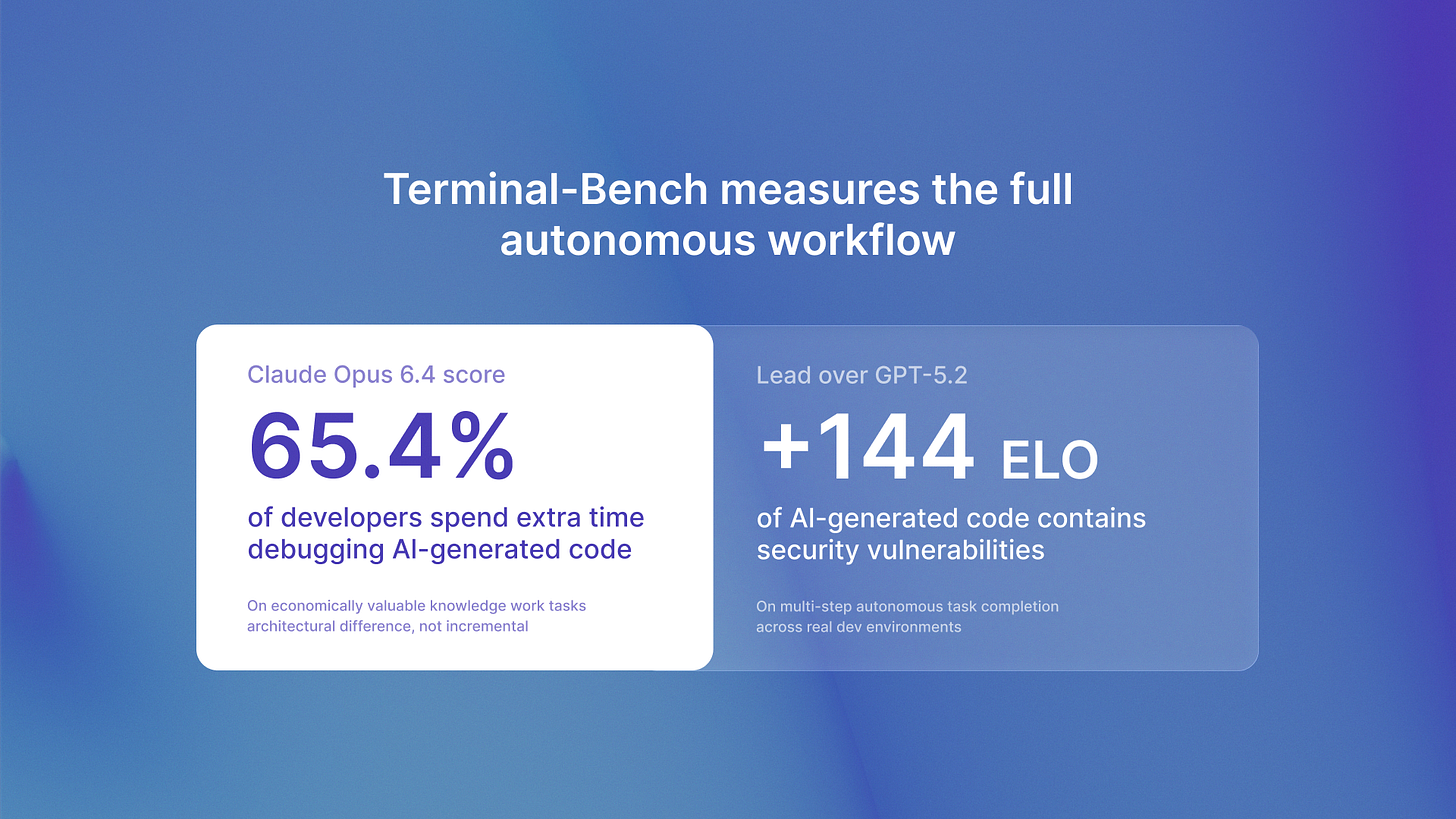

Claude Opus 4.6 scored 65.4% on Terminal-Bench 2.0. That number tells you something specific if you understand what the benchmark tests.

Terminal-Bench doesn’t measure code completion. It measures multi-step autonomous task completion in real development environments. Requirement interpretation, environment setup, execution, error recovery. The full workflow an autonomous agent actually performs.

This is the difference between demo performance and production reliability. A model that generates clean code snippets might fail completely at the orchestration layer where production agents operate.

Opus 4.6 outperformed GPT-5.2 by approximately 144 ELO points on GDPval-AA (economically valuable knowledge work tasks). That gap represents something architectural. Not incremental improvement in isolated capabilities, but qualitative difference in sustained autonomous operation.

The context problem that kills production agents

Claude Opus 4.6 scored 76% on MRCR v2, up from its predecessor’s 18.5%. This shows a clear capability shift for production agents.

MRCR v2 measures long-context retrieval. Can the model actually use its context window, or does performance degrade as context grows? Most models claim large context windows but lose coherence past certain thresholds.

76% means the model maintains reasoning quality across its full 1 million token context. That’s the difference between agents that can reason about entire codebases versus agents that modify individual functions.

Last month I noticed something at ReachSocial that illustrates this perfectly. Their LinkedIn engagement orchestration runs campaigns across weeks. Each agent interaction builds on previous context: prospect research, message history, response patterns, timing optimization.

They initially prototyped with a model that had impressive benchmark scores but struggled with context persistence. The agent would “forget” earlier campaign context mid-workflow. Not because of memory limits, but because the model couldn’t effectively retrieve and reason over accumulated context.

Production autonomous agents don’t operate in isolated tasks. They maintain state across sessions, coordinate with other agents, and build on previous work. Context handling isn’t a nice-to-have feature. It’s the foundation of sustained autonomous operation.

The architectural decision you can’t reverse

Model selection compounds over time in ways that aren’t obvious during POCs.

Choosing based on benchmarks optimizes for week-one demos. Choosing based on context handling and agent team features optimizes for month-six production reliability.

Opus 4.6 introduced agent teams: coordinated multi-agent architectures where specialist agents handle specific tasks under a coordinator agent. This isn’t available in GPT-5.2’s single-model API.

That architectural difference determines what autonomous systems you can build. Not just how well they perform, but what patterns are possible.

I wrote about this architectural choice in detail here. Agentic AI vs AI Assistants: Why Only Autonomous Systems Deliver 420% ROI

Core insight: single-model optimization has different limits than multi-agent orchestration.

At Islands, we manage dev hours across 8-15 simultaneous client projects. When we evaluate models for our portfolio companies’ agent architectures, we’re not looking at benchmark leaderboards. We’re asking: can this model support the coordination patterns we’ll need in six months?

Because by then, you’ve built months of agentic workflows on top of your model choice. Switching models means rewriting coordination logic, retesting reliability patterns, and rebuilding error handling. You don’t just swap the API endpoint.

What to optimize for instead

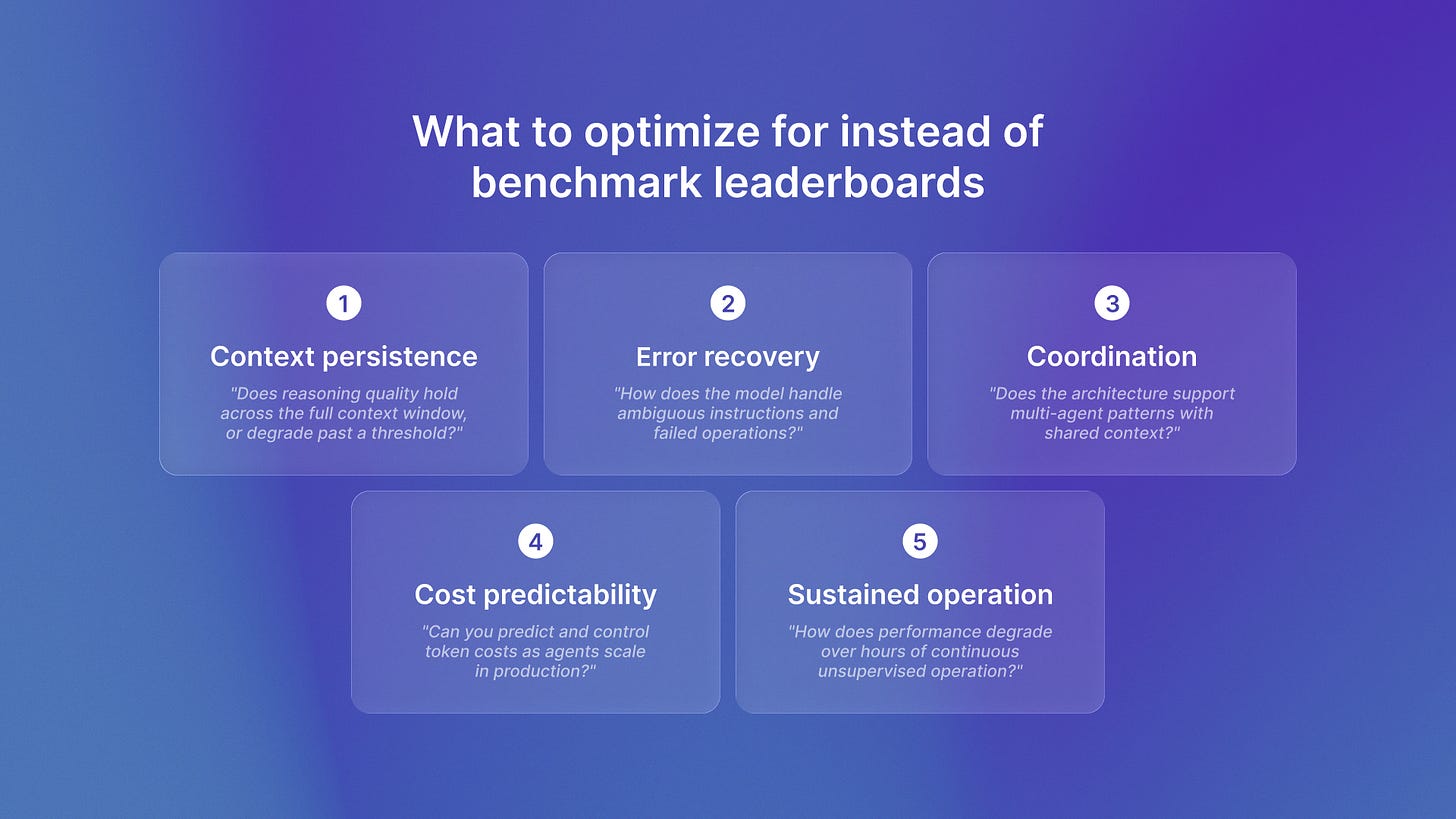

Here’s the framework we use for model selection in production agent architectures:

Context persistence: Can the model maintain reasoning quality across its full context window? Test with real workflow context, not synthetic benchmarks.

Error recovery patterns: How does the model handle ambiguous instructions, missing information, or failed operations? Production agents encounter these constantly.

Coordination capabilities: Does the architecture support multi-agent patterns? Can agents maintain shared context and coordinate tasks?

Cost predictability: Token costs matter in production. Can you predict and control costs as agents scale?

Sustained operation: How does performance degrade over hours of continuous operation? Most benchmarks test isolated tasks.

These characteristics don’t show up on leaderboards. They show up in production.

The teams that successfully scale from POC to production optimize for these factors upfront. The teams stuck rebuilding in month six optimized for demo impressiveness.

The strategic implication

The model you choose in February 2026 determines whether you’re rebuilding your agent architecture in August 2026.

Engineering teams selecting models based on coding benchmarks will hit context limits and coordination problems that require fundamental rewrites. Teams selecting based on production reliability characteristics will scale from POC to production without architectural rewrites.

Model selection isn’t a reversible decision when you’ve built six months of agentic workflows on top of it. Choose for month-six production, not week-one demos.

If you’re evaluating models now, ask yourself this. Are you optimizing for the benchmark slide in your architecture review? Or for the sustained autonomous operation your production system will require?

The 70% of teams who don’t reach production made the wrong choice on that question. The 30% who do understood that model selection is an architectural decision with compounding implications.

You rebuild, or you choose correctly the first time.