Why Your AI Agent Demo Crushed It But Production Is a Dumpster Fire

Your AI agent demo crushed it. After two weeks in production, it’s creating duplicate work. It’s also missing important context. Your engineering team is writing exception handlers faster than the agent can process requests.

I’m writing to you because this pattern is everywhere right now. 95% of enterprise AI pilots never made it to production in 2025 (MIT via Metadata Weekly). Of the ones that did launch, Gartner predicts over 40% will be scrapped by 2027. Not because the models are bad. The LLMs work fine.

The problem is architectural decisions made during pilots that create technical debt you cannot refactor your way out of.

Last month I was talking to a Series B fintech CTO who showed me their agent pilot. Beautiful demo: answered customer questions, routed tickets, suggested solutions. Flawless in staging. They launched with 500 users at the same time. It began repeating answers it had already given. It also broke during regular API calls. Additionally, it polled their CRM so much that it hit rate limits in just 90 minutes.

They’re rewriting from scratch now.

Here’s what is really hurting these projects: three specific design problems. They may look good in demos but fail under real use.

Composio’s 2025 AI Agent Report highlights three main problems.

Dumb RAG: This refers to poor memory management.

Brittle Connectors: This issue involves broken input/output connections.

Polling Tax: This is about the lack of an event-driven architecture.

For more details, check the report.

The window to fix these is narrow. Most teams realize the issues 6-12 months into deployment when rewriting becomes the only option.

Why Your AI Agent Demo Crushed It But Production Is a Dumpster Fire

Your AI agent demo crushed it. After two weeks in production, it’s creating duplicate work. It’s also missing important context. Your engineering team is writing exception handlers faster than the agent can process requests.

I’m writing to you because this pattern is everywhere right now. 95% of enterprise AI pilots never made it to production in 2025 (MIT via Metadata Weekly). Of the ones that did launch, Gartner predicts over 40% will be scrapped by 2027. Not because the models are bad. The LLMs work fine.

The problem is architectural decisions made during pilots that create technical debt you cannot refactor your way out of.

Last month I was talking to a Series B fintech CTO who showed me their agent pilot. Beautiful demo: answered customer questions, routed tickets, suggested solutions. Flawless in staging. They launched with 500 users at the same time. It began repeating answers it had already given. It also broke during regular API calls. Additionally, it polled their CRM so much that it hit rate limits in just 90 minutes.

They’re rewriting from scratch now.

Here’s what is really hurting these projects: three specific design problems. They may look good in demos but fail under real use.

Composio’s 2025 AI Agent Report highlights three main problems.

Dumb RAG: This refers to poor memory management.

Brittle Connectors: This issue involves broken input/output connections.

Polling Tax: This is about the lack of an event-driven architecture.

For more details, check the report.

The window to fix these is narrow. Most teams realize the issues 6-12 months into deployment when rewriting becomes the only option.

Why Production Kills Pilots: The Architecture Gap

Demos run in controlled environments. Production is chaos.

Demos process single-threaded requests with clean inputs on the happy path. Production handles concurrent users submitting malformed data while external APIs change their schemas and services go down mid-request.

Here’s what I’ve seen at Islands: teams build pilots that work perfectly when one person tests them sequentially. They ship to production where 50 users hit the agent simultaneously with edge cases the pilot never encountered. The agent loses track of the conversation. It breaks when it gets unexpected API responses. Then, it starts polling services in tight loops because it can’t see state changes.

The gap isn’t model capability. It’s architectural discipline.

Most teams discover this too late because there’s a 6-12 month lag between pilot success and production failure. Pressure to ship fast means skipping architecture reviews. You validate that the agent can do the task, not that it can do the task reliably at scale under real-world conditions.

The cost of this architectural debt is brutal. You can’t refactor AI systems incrementally like traditional code. The memory patterns, integration layers, and event handling are foundational. Fixing them requires complete rewrites. Worse, you lose all the institutional knowledge embedded in the failed system.

I saw a team waste nine months of work. They used Dumb RAG and could not change their memory setup without starting from scratch.

Failure Mode #1: Dumb RAG (Bad Memory Management)

Here’s what Dumb RAG looks like in production: agents that forget previous interactions within the same conversation. Make the same mistakes repeatedly across sessions. Can’t synthesize information from multiple sources. Lose critical context mid-task.

Last week I was reviewing an agent at one of our portfolio companies. Customer asks a question in message 1, agent answers. Customer references that answer in message 3, agent has no idea what they’re talking about. Retrieves the original answer from the vector database but can’t connect it to the current conversation.

The architectural mistake: treating RAG as simple document retrieval instead of persistent, structured memory.

Teams embed everything, retrieve nothing useful. They throw documents into a vector database, retrieve semantically similar chunks, and hope the LLM figures it out. No semantic understanding of what matters. No distinction between facts that inform current decisions and historical context that shapes reasoning. They conflate retrieval with reasoning.

How to identify Dumb RAG before it kills your project:

Test multi-turn conversations that require synthesizing information across messages. If the agent can’t reference something it said five exchanges ago, you have Dumb RAG. Measure context retention across sessions. If starting a new conversation means starting from zero, you have Dumb RAG. Audit what the agent remembers versus what it retrieves. If it re-retrieves the same information every time instead of maintaining state, you have Dumb RAG.

Production-grade memory architecture uses structured state management, not just retrieval. Hierarchical memory systems have different layers. Immediate context, session history, and long-term knowledge are in these layers. There are clear rules for promoting information between them. Explicit context windows that define what matters now versus what’s background. Semantic filtering before retrieval so you’re not dumping every tangentially related document into the prompt.

I helped a team at QA flow redesign their agent’s memory architecture. Before: retrieving 20 similar test results on every bug analysis. After: maintaining session state of current analysis, retrieving only net-new information when context shifts. Bug detection accuracy went from 73% to 91% because the agent stopped confusing historical bugs with current ones.

Failure Mode #2: Brittle Connectors (Broken I/O)

Brittle Connectors in production: agents that break when APIs change version. Can’t handle service outages. Fail on unexpected response formats. Require manual intervention every time an external system updates.

One of our portfolio companies built an agent that integrated with their CRM. Worked perfectly in pilot. CRM updated their API, added one optional field to the response schema. Agent crashed on every request because it expected an exact match.

The architectural mistake: hard-coding integrations and assuming external services are stable.

Teams make direct API calls without abstraction layers. No retry logic. No circuit breakers. Brittle parsing that breaks when response formats vary even slightly. They optimize for the happy path and ignore everything else.

How to identify Brittle Connectors before deployment:

Run chaos engineering tests. Introduce random API failures. See if your agent degrades gracefully or crashes. Test API versioning scenarios. What happens when a field gets renamed or a new required parameter appears? Simulate service outages. Can your agent continue functioning with degraded capability or does everything stop? Run schema change testing. Feed the agent unexpected response formats and measure failure modes.

Production-grade integration patterns use adapter layers that abstract external services behind stable interfaces. Graceful degradation where the agent continues functioning with reduced capability when services fail. Retry policies with exponential backoff and jitter so you’re not hammering failed services. Schema validation and evolution handling that adapts to changes without breaking.

We rebuilt the integration layer for ReachSocial using these patterns. Before: brittle connectors that required code changes every time LinkedIn updated their API. After: adapter pattern with schema validation and automatic retry logic. Zero production incidents from external API changes in four months.

Failure Mode #3: Polling Tax (No Event-Driven Architecture)

Polling Tax in production: high latency responses. Expensive API usage that scales linearly with load. Agents that can’t respond to real-time events. Systems where operating costs make autonomous operation economically unfeasible.

I saw this at a logistics company running an agent pilot. Agent checked shipment status by polling the tracking API every 30 seconds. Worked fine with 10 shipments in pilot. Scaled to 1,000 shipments and they were making 120,000 API calls per hour. Cost projection at full scale: $47,000/month in API fees alone.

The architectural mistake: building request-response systems when autonomous operation requires event-driven patterns.

Teams use constant polling of external services because it’s simple to implement. No webhooks. No message queues. Synchronous processing of inherently async workflows. They optimize for pilot simplicity and ignore production economics.

How to identify Polling Tax in your pilot:

Calculate API calls per agent action. If you’re polling multiple services every few seconds, you have Polling Tax. Measure response time to external events. If there’s a multi-second delay between event occurrence and agent response, you have Polling Tax. Project costs at 10x scale. If API fees grow linearly with usage, you have Polling Tax.

Production-grade event-driven patterns use webhook integration so external services push updates instead of being polled. Message queues for async processing. Event sourcing for auditability and replay capability. These patterns create systems where costs scale sub-linearly and latency stays constant as load increases.

At Timecapsule, we migrated from polling to webhooks for time entry processing. Before: polling every 60 seconds, 2-5 minute latency, scaling costs. After: webhook-driven updates, sub-second latency, 85% reduction in API calls. The system went from economically questionable to obviously scalable.

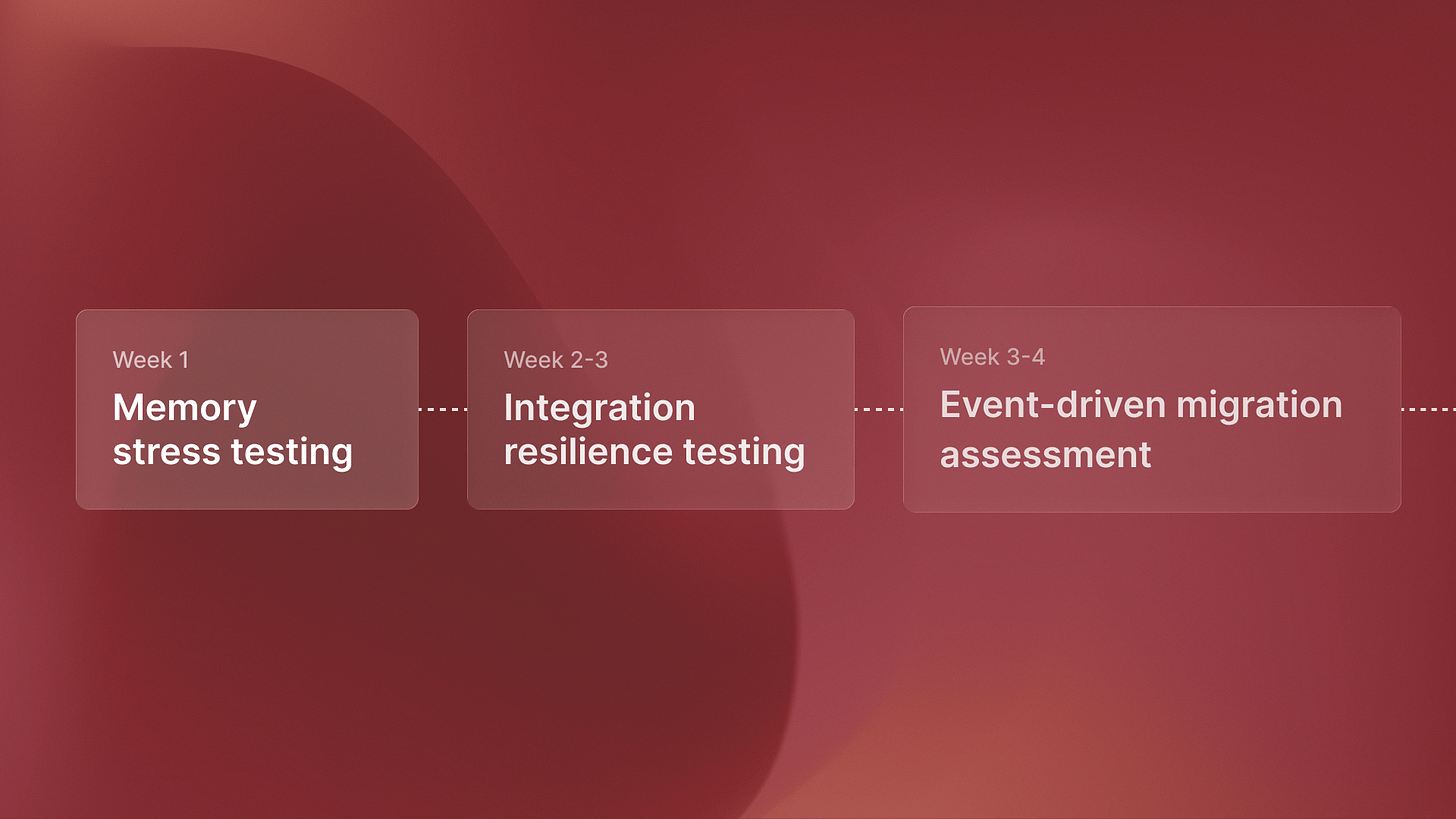

The 30-Day Architecture Audit

You don’t need to wait for production failure. Here’s how to catch these failure modes during your pilot.

Week 1-2: Memory stress testing. Run multi-session conversations that require context from previous interactions. Measure what percentage of historical context the agent retains. Audit retrieval quality: is it retrieving useful information or noise?

Week 2-3: Integration resilience testing. Simulate API version changes. Introduce random service outages. Test with unexpected response formats. Measure how many manual interventions are required.

Week 3-4: Event-driven migration assessment. Calculate current polling costs. Project those costs at 10x and 100x scale. Benchmark latency for event-driven alternatives. Map async workflows that would benefit from message queues.

Decision framework after the audit: Fix now, rewrite, or abandon.

Fix now if architectural gaps are isolated and you can refactor without touching core logic. Rewrite if the gaps are foundational but the use case is validated and valuable. If fixing the architecture costs more than expected, or if it takes too long to be ready, abandon the project.

The teams shipping production AI agents in 2026 are the ones who caught these failure modes during pilots, not after deployment. This isn’t about model capability. It’s about architectural discipline.

Companies that fix these issues now get 18 months of production learning ahead of competitors still debugging brittle pilots. For more on what production-ready AI agents actually look like in 2026, I wrote about the four capabilities that separate demos from autonomous systems.

Avoid becoming part of the 40% that scraps projects by 2027. Build production-grade architecture from day one.