Why most AI agent pilots never make it to production

I’ve been watching something fascinating unfold across our portfolio companies. Everyone’s building AI agent demos. Almost nobody’s shipping production systems.

Here’s the data that caught my attention. McKinsey reports that 39% of organizations are trying agentic AI. But only 23% are scaling these systems. That gap wouldn’t bother me if it was closing. It’s not. KPMG found that 65% of leaders cite agentic system complexity as their top barrier. This number has stayed the same for two straight quarters.

This isn’t a temporary growing pain. It’s an architectural problem.

The demo-to-production gap is structural

Most pilot projects start with what I call “assistant architecture.” Stateless interactions. Human-in-the-loop at every step. Simple orchestration that works great for demos.

Then teams try to deploy these systems into production workflows, and everything breaks.

I was talking with the QA flow team last week about their autonomous testing platform. They shared something that illustrates this perfectly. Their first prototype ran tests by calling Claude in a loop. Worked beautifully for 20-test demos. Collapsed completely when they tried processing 800+ tests per day across multiple client projects.

The difference wasn’t the AI model. It was the orchestration layer they hadn’t built.

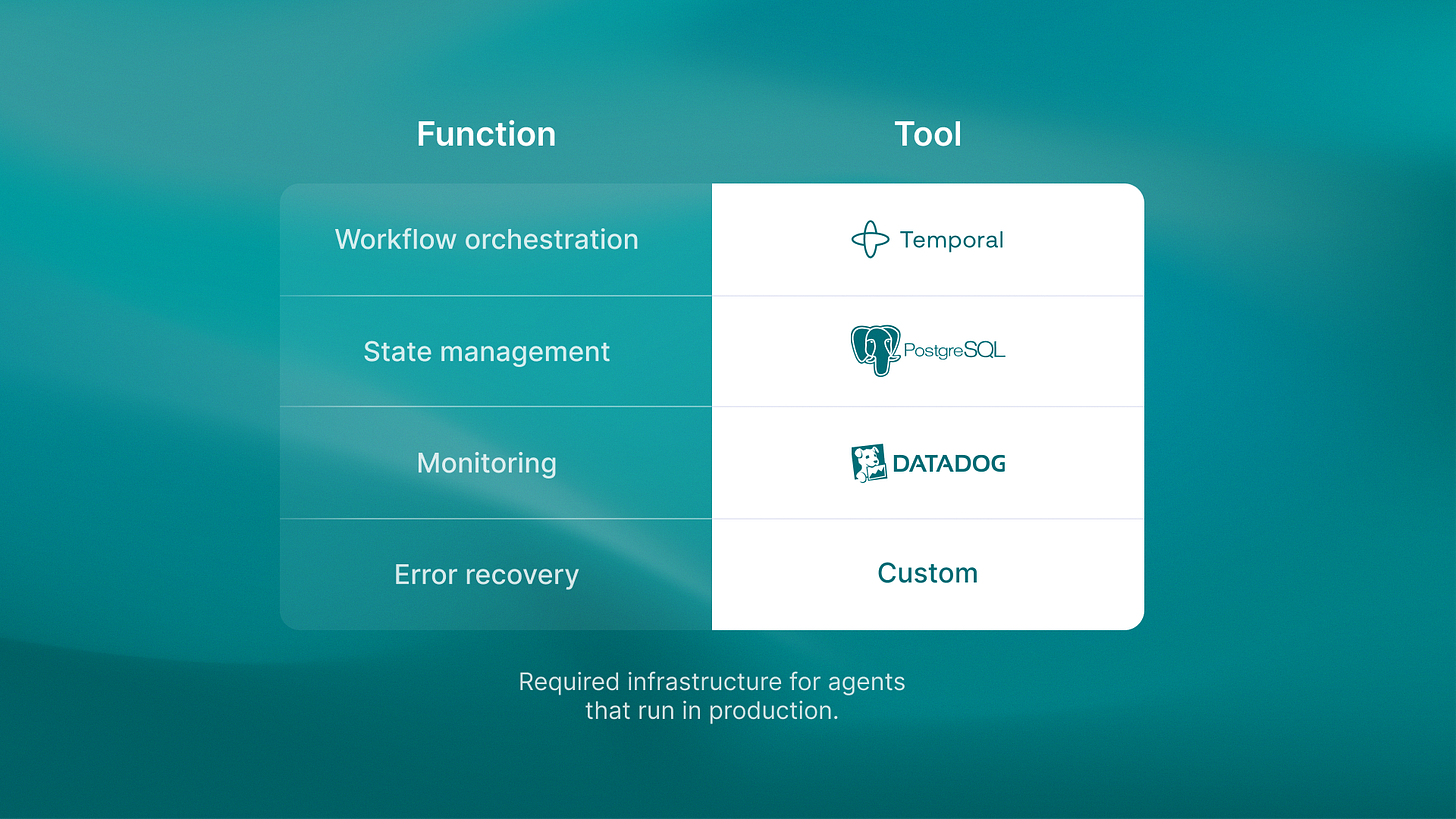

Here’s what production agents actually need: Temporal-style workflow orchestration for complex multi-step processes. PostgreSQL for persistent state management so agents remember context across sessions. Datadog monitoring infrastructure to catch failures before they cascade. Error recovery patterns that handle the 100+ edge cases that never show up in pilots.

Demo architectures skip all of this. Production systems can’t.

Quality issues kill at 3x the rate

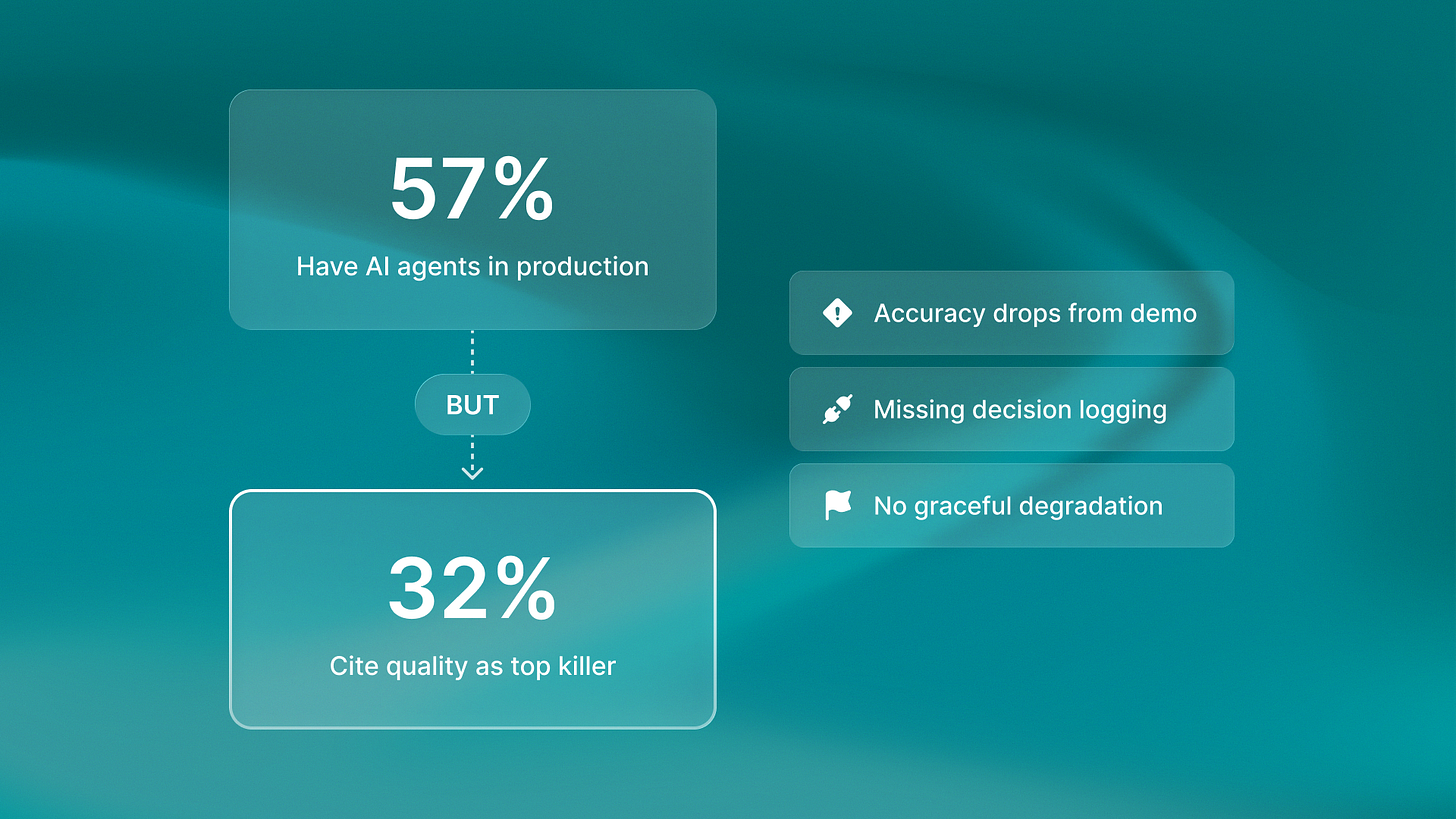

The LangChain State of AI Agents Report found 57% of companies have AI agents in production. Sounds encouraging until you see the next number: 32% cite quality as their top production killer.

That gap between “deployed” and “reliably operational” is where most pilots die.

Last month I watched a team rebuild their entire agent architecture after six months of pilot success. Their demo handled customer support tickets with 90% accuracy. Production deployment hit 60% and stayed there. The problem wasn’t the AI. It was the governance layer they never built. They lacked decision logs and clear error handling. They also lacked graceful fallback when confidence dropped below a set threshold.

They’d built an impressive prototype. They hadn’t built a system that could run autonomously for months.

I wrote about this architectural gap in our analysis of agentic AI vs AI assistants. The core issue is that most teams are building the wrong architecture from day one. They treat agents like assistants that enhance productivity, when production agents need to replace entire workflows. That requires fundamentally different infrastructure.

The assistant-agent confusion wastes 6-12 months

Here’s what I keep seeing: teams start building what they call an “AI agent” but architect it like an assistant.

Assistants are stateless. GitHub Copilot suggests code but doesn’t remember your codebase architecture. ChatGPT drafts emails but forgets context between sessions. Salesforce Einstein surfaces insights but doesn’t execute actions.

Agents are fundamentally different. They maintain state. They execute multi-step workflows autonomously. They make decisions without human approval. They need to recover from failures and keep running.

The ReachSocial platform demonstrates this distinction clearly. Their LinkedIn engagement tool does more than suggest comments.

It runs campaigns across dozens of accounts.

It keeps an engagement history.

It adjusts timing based on response patterns.

It also handles rate limits without human help.

That requires architecture you simply don’t need for assistants.

Most pilot projects build assistant architecture because it’s simpler. Then they try to add autonomy as a feature. It doesn’t work. You end up rebuilding from scratch, usually 6-12 months into the project.

Production orchestration requires planning for autonomy

I’ve tracked deployment patterns across our portfolio. Companies shipping production agents made the same early choice. They planned for autonomy from day one.

What that actually looks like in practice:

Workflow orchestration: Temporal or similar systems that manage complex, multi-step workflows. They include built-in retry logic and state persistence. Not simple API calls in a loop.

State management: PostgreSQL databases that track agent context, decision history, and workflow progress. Not ephemeral memory that disappears between sessions.

Monitoring infrastructure: Datadog or PostHog tracking every agent action, with alerts for unusual patterns or quality degradation. Not hoping you notice when things break.

Error recovery: Graceful degradation patterns that handle API failures, confidence threshold drops, and edge cases. Not crash-and-restart.

These aren’t nice-to-haves. They’re the difference between a demo that impresses investors and a system that runs production workflows for months.

I detailed this infrastructure requirement in our guide to building your first AI agent in 30 days. The playbook stresses that the perception, reasoning, action, and learning layers need production-grade orchestration. They should not rely on prototype-level glue code.

The timeline urgency nobody’s talking about

Here is the competitive reality. Companies that start building production orchestration patterns now will ship autonomous agents in Q2–Q3 2026.

Companies that wait for “better AI models” to fix orchestration problems will still be rebuilding their pilot systems. They will likely still be doing this in 12 to 18 months.

The governance gap is architectural, not technological. Better models won’t fix missing state management or non-existent error handling. They won’t magically add workflow orchestration to systems that were built as stateless assistants.

We manage dev hours across 8 to 15 client projects at once. Teams shipping production agents are not waiting for GPT-5 or Claude 4. They are building orchestration infrastructure. This will let them swap in better models when they arrive.

The companies that understand this are building their competitive moat right now. The ones that don’t are six months away from realizing their pilot architecture won’t scale.