When to Build an AI Agent (And When You Don't Need One)

A practical framework for CTOs choosing between autonomous agents and AI assistants

In late 2025, we watched a Series B company spend six months building an autonomous agent to handle customer support ticket routing. Complex architecture. Multi-step reasoning. Tool orchestration across four internal systems.

The result? Marginally better than their existing rules-based automation.

Two months later, they shut it down. The maintenance burden exceeded the value. Their engineering team went back to building product features that actually moved revenue.

The problem wasn’t the execution. The problem was the decision to build an agent in the first place.

Here’s what’s happening across the industry: 57% of companies already have AI agents in production, according to LangChain’s State of AI Agents Report 2025. Another 30% are actively developing agents with concrete deployment plans. The agent wave isn’t coming. It’s here.

But 65% of enterprise leaders cite agentic system complexity as their top adoption barrier, per KPMG’s Q4 2025 AI Pulse Survey. That stat has held steady for two consecutive quarters.

The disconnect? Most technical leaders are making architectural decisions without a framework for when autonomous systems actually justify their complexity.

The Stakes Are Higher Than You Think

Build an assistant when you need an agent and you cap your ROI at incremental productivity gains. Over-engineer an agent when an assistant would suffice and you waste months on unnecessary complexity while your competitors ship features that matter.

The expected upside is large. 62% of organizations anticipate 100% or greater ROI from their AI agent deployments, according to Warmly’s AI Agents Statistics 2026.

But those returns only materialize when agents are deployed against the right workflows.

We’ve spent the past year working across portfolio companies building and operating autonomous agents in production. Islands manages fractional CTO services across 8-15 simultaneous client projects, which means we see the full spectrum: where agents deliver transformative value and where they become expensive distractions.

What we’ve learned: the agent versus assistant decision isn’t about capability level. It’s fundamentally architectural. And that architecture only pays for itself under specific conditions.

What Actually Separates Agents from Assistants

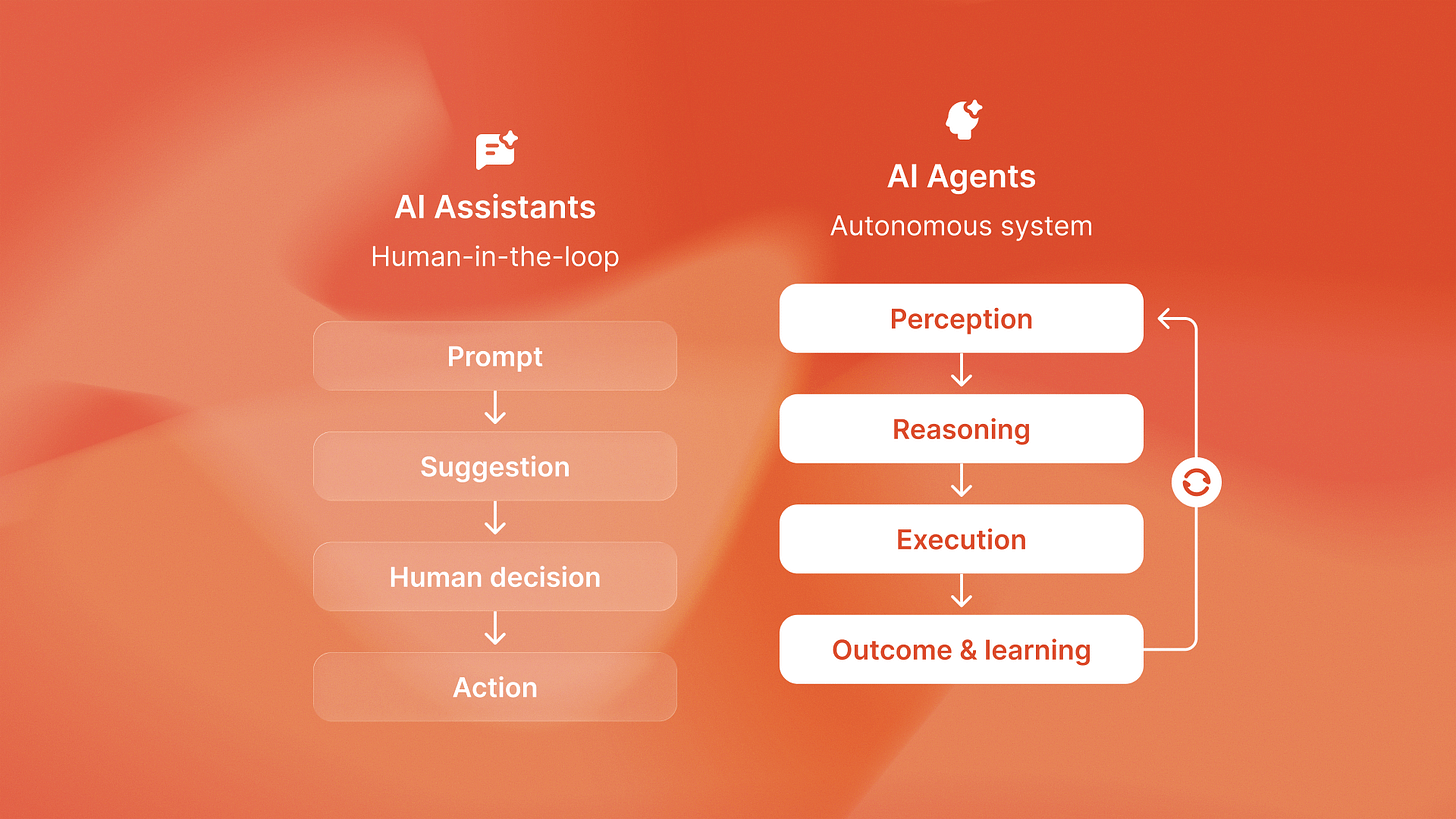

Most people think the difference is sophistication. Assistants are simple, agents are complex. That’s not quite right.

The real distinction is architectural:

Assistants enhance human workflows. They respond to prompts, surface suggestions, accelerate tasks humans are already doing. GitHub Copilot suggesting code completions. ChatGPT drafting emails. Salesforce Einstein surfacing insights.

Agents replace entire workflows through autonomous loops. They perceive environments, make decisions, use tools, and execute multi-step processes without human intervention. QA flow detecting bugs from Figma designs and running 2,400 test suites monthly. Ingage orchestrating LinkedIn campaigns across weeks. Shoreline monitoring compliance violations and triggering remediation.

The architecture difference creates fundamentally different cost structures, operational patterns, and ROI profiles.

When you build an assistant, you’re augmenting existing headcount. Productivity gains compound gradually. A developer writes code 30% faster. A sales rep handles 20% more outreach. Valuable, but incremental.

When you build an agent, you’re automating entire positions or creating capabilities that didn’t exist economically before. The gains are step-function, not incremental. A QA team that manually ran 200 test cases per month suddenly runs 2,400. A compliance function that reviewed transactions weekly now monitors them continuously.

But here’s the catch: agents only deliver that step-function ROI when applied to workflows that meet specific criteria.

The High-ROI Agent Framework

Across our portfolio deployments, we’ve identified four characteristics that predict whether autonomous architecture justifies its complexity:

High-volume repetitive workflows with clear patterns. Agents excel when the same logical process executes hundreds or thousands of times. QA flow runs the same test generation and execution loop across every design file. The volume creates data for improvement and ROI calculation. Low-volume workflows don’t amortize the architectural investment.

Clear success criteria that can be measured programmatically. You need to know when the agent succeeded or failed without human review every time. Bug detection has binary outcomes: caught or missed. Invoice processing has verification steps: amount matches, vendor validated, approval obtained. Ambiguous outcomes create supervision overhead that kills the automation value.

Structured data inputs with consistent formats. Agents need reliable perception. Figma files have structured component trees. Email threads have sender/recipient/subject fields. Database records have schemas. When inputs vary wildly in format or quality, you spend more engineering effort on input normalization than the agent logic delivers in value.

Meaningful cost of human execution. This is the economic filter. If the workflow costs $500/month in human time, an agent that saves 80% delivers $400/month in value. That doesn’t cover the engineering investment, LLM costs, and maintenance burden. You need workflows where the human cost baseline is high enough to justify the infrastructure.

Here’s a real example from our portfolio: Timecapsule tracks time with real-time profitability monitoring. We evaluated building an autonomous agent to analyze time entries and automatically flag unprofitable projects.

We decided against it.

Why? The workflow didn’t meet our criteria. Analysis volume was too low (weekly, not continuous). Success criteria were ambiguous (what counts as “flagging early enough”?). And the human cost baseline was minimal: a finance lead already reviewed profitability dashboards as part of their existing role.

An AI assistant that surfaces anomalies in the existing dashboard delivered 90% of the value for 10% of the complexity.

Contrast that with QA flow’s autonomous testing agent: thousands of test executions monthly, binary pass/fail outcomes, structured Figma file inputs, and a human cost baseline of $8,000-12,000/month for a QA team running comparable coverage. The agent architecture was economically obvious.
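The two evaluations above can be sketched as a simple checklist. This is a minimal illustration, not our internal tooling: the `WorkflowProfile` class, its field names, and the recommendation labels are hypothetical, and `None` marks a criterion the write-up above does not state (Timecapsule's input structure).

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class WorkflowProfile:
    """One candidate workflow scored against the four characteristics.

    True/False records a judgment call; None means "not stated / unknown".
    """
    name: str
    high_volume: Optional[bool]
    measurable_success: Optional[bool]
    structured_inputs: Optional[bool]
    meaningful_human_cost: Optional[bool]

    def _checks(self) -> dict:
        return {
            "high volume": self.high_volume,
            "measurable success": self.measurable_success,
            "structured inputs": self.structured_inputs,
            "meaningful human cost": self.meaningful_human_cost,
        }

    def unmet_criteria(self) -> list:
        # Only criteria explicitly judged False count as unmet.
        return [label for label, ok in self._checks().items() if ok is False]

    def recommendation(self) -> str:
        values = list(self._checks().values())
        if any(v is False for v in values):
            return "assistant or manual"
        if all(v is True for v in values):
            return "agent candidate"
        return "undetermined"  # some criteria still unknown


# Values transcribed from the two portfolio examples described above.
timecapsule = WorkflowProfile(
    "Timecapsule profitability flagging",
    high_volume=False,            # weekly, not continuous
    measurable_success=False,     # "flagging early enough" is ambiguous
    structured_inputs=None,       # not stated in the write-up
    meaningful_human_cost=False,  # already part of the finance lead's role
)
qa_flow = WorkflowProfile("QA flow testing", True, True, True, True)

for wf in (timecapsule, qa_flow):
    print(f"{wf.name}: {wf.recommendation()} (unmet: {wf.unmet_criteria()})")
```

A single unmet criterion is enough to push a workflow toward an assistant here, which matches how the Timecapsule decision played out; a stricter or weighted scoring scheme would be an equally reasonable choice.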

The Decision Tree

Here’s how we evaluate new opportunities:

Start with volume. If the workflow executes fewer than 50 times per month, it’s probably not an agent opportunity. The architectural overhead won’t amortize. Build an assistant or keep it manual.

If volume clears that threshold, examine success criteria. Can you measure success without human review for every execution? If yes, continue. If no, rethink the workflow boundaries. Maybe you’re trying to automate too much at once.

Next, evaluate input structure. Are the data inputs consistent enough that your perception layer works reliably? If inputs require heavy preprocessing or human curation, that’s a red flag. Either standardize the inputs first or reconsider whether this is truly automatable.

Finally, calculate the human cost baseline. What does it cost today to execute this workflow manually? Include fully loaded headcount costs, not just salary. A workflow that consumes 25% of a $120K employee costs $30K/year. An agent that saves 80% of that delivers $24K/year in value. Now subtract LLM costs (typically $2K-8K/year for production agents), infrastructure ($3K-5K/year), and engineering maintenance (conservatively 20% of a developer’s time = $30K/year). That is $35K-43K/year in running costs against $24K/year in value, a net loss of $11K-19K/year. You need a substantial human cost baseline to reach positive ROI within 12 months.
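The arithmetic in this step is easy to get wrong by hand, so here is a small sketch of it. The function names are hypothetical; the dollar figures are the ones quoted in the paragraph above, and the break-even helper assumes the savings rate stays fixed at 80% as the baseline grows.

```python
def annual_net_value(human_cost, savings_rate, llm_cost, infra_cost, maintenance_cost):
    """Yearly value the agent recovers minus the yearly cost of running it."""
    return human_cost * savings_rate - (llm_cost + infra_cost + maintenance_cost)


def break_even_baseline(savings_rate, llm_cost, infra_cost, maintenance_cost):
    """Smallest yearly human cost at which net value reaches zero."""
    return (llm_cost + infra_cost + maintenance_cost) / savings_rate


HUMAN_COST = 0.25 * 120_000  # 25% of a $120K employee = $30K/year
SAVINGS_RATE = 0.80          # agent saves 80% of the human cost
MAINTENANCE = 30_000         # 20% of a developer's time, as quoted above

# Low end of the quoted cost ranges: $2K LLM, $3K infrastructure.
best = annual_net_value(HUMAN_COST, SAVINGS_RATE, 2_000, 3_000, MAINTENANCE)
# High end of the quoted cost ranges: $8K LLM, $5K infrastructure.
worst = annual_net_value(HUMAN_COST, SAVINGS_RATE, 8_000, 5_000, MAINTENANCE)

low_baseline = break_even_baseline(SAVINGS_RATE, 2_000, 3_000, MAINTENANCE)
high_baseline = break_even_baseline(SAVINGS_RATE, 8_000, 5_000, MAINTENANCE)

print(f"Net value per year: {worst:,.0f} to {best:,.0f}")
print(f"Break-even human cost baseline: {low_baseline:,.0f} to {high_baseline:,.0f}")
```

With these inputs the $30K/year workflow loses money at both ends of the cost range, and the human cost baseline would need to be roughly $44K-54K/year just to break even. The initial build cost is not included, so the real threshold sits higher.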

This framework isn’t about moving fast. It’s about moving strategically on opportunities that justify autonomous architecture.

What We’re Seeing Work in Production

The highest-ROI agent deployments we’re seeing share a pattern: they target workflows where human execution is expensive, repetitive, and well-defined, but the work is too specialized or high-volume for traditional automation.

QA testing fits this perfectly. Manual QA is expensive ($60-80K per tester), scales linearly with application complexity, and follows clear verification logic. But traditional test automation requires engineers to write and maintain test scripts, which creates its own cost burden. QA flow navigates this by generating tests autonomously from design files, then executing them continuously. The volume (2,400+ test suites monthly across clients) justifies the architecture.

Compliance monitoring follows similar economics. Manual review is expensive and error-prone. Rules-based automation is brittle and misses edge cases. Autonomous agents can monitor continuously, reason about context, and trigger remediation. The combination of high human cost and clear success criteria makes the ROI calculation straightforward.

LinkedIn engagement orchestration is another strong fit. Building professional authority on LinkedIn requires consistent, contextually relevant engagement across dozens of conversations. Reachsocial automates the monitoring, context analysis, and response generation loop. A human could do this work, but the time investment (2-3 hours daily) makes it economically prohibitive for most professionals.

Notice the pattern: these aren’t the sexiest use cases. They’re operational workflows with clear economics.

Where Agents Fail

We’ve also seen plenty of agent failures. The patterns are predictable:

Workflows with ambiguous success criteria. An agent that “improves customer satisfaction” or “optimizes marketing spend” lacks the measurement clarity to operate autonomously. You end up reviewing every decision, which defeats the automation value.

Low-volume, high-stakes decisions. Agents excel at high-volume, medium-stakes workflows. When volume is low and stakes are high (executive hiring, M&A analysis, crisis response), the cost of error exceeds the automation value. These are assistant opportunities: augment human judgment, don’t replace it.

Rapidly changing environments. Agents need stable patterns to learn from and optimize against. In environments where rules change weekly or inputs vary wildly, you spend more time retraining and adjusting than the agent delivers in autonomous value.

Workflows that require deep relationship context. Agents can handle transactional interactions effectively. They struggle with workflows that require understanding subtle relationship dynamics, organizational politics, or long-term strategic context. Keep humans in the loop for relationship-critical work.

The Competitive Implication

Here’s what matters for technical leaders making these decisions in 2026: the companies that get agent selection right will build sustainable competitive advantages through operational leverage that compounds over time.

An autonomous QA agent that runs 2,400 test suites monthly creates a quality moat. A compliance monitoring agent that reviews every transaction creates a risk management capability smaller competitors can’t match. A content orchestration agent that maintains consistent engagement creates audience advantages that accumulate.

These aren’t one-time productivity gains. They’re operational capabilities that scale independently of headcount.

But the inverse is also true: companies that chase agent hype without economic discipline will waste 12-18 months building complex systems that deliver marginal value. By the time they realize the architectural choice was wrong, their competitors will have shipped the features that actually moved the business forward.

The framework we’ve outlined isn’t theoretical. It’s drawn from production deployments across portfolio companies managing real P&Ls. We’ve built agents that delivered 420% ROI. We’ve also shut down agents that became expensive distractions.

The difference came down to workflow selection, not execution quality.

If you’re evaluating where to deploy autonomous agents in your organization, start with the economics. Find workflows with high volume, clear success criteria, structured inputs, and meaningful human cost baselines. Those are your high-ROI opportunities.

Everything else is probably an assistant.

For a deeper look at the architectural differences and cost structures that separate agents from assistants, we’ve documented the full ROI breakdown in our agent economics analysis. And if you’re ready to build your first agent, our 30-day playbook walks through the perception, reasoning, action, and learning layers that make autonomous systems work in production.

The agent wave is here. The question isn’t whether to build agents. It’s where to deploy them strategically.