What we learned deploying AI agents across three production environments

Everyone’s building AI agent demos. We’re deploying autonomous systems across multiple production environments and discovering the patterns that separate prototypes from systems that actually ship.

Here’s the reality: 57% of companies now use agents in production. This is according to the LangChain State of AI Agents Report 2025. The market has moved past “should we build agents” to “how do we deploy them correctly.” But most content focuses on demos and prototypes. It skips orchestration patterns, failure modes, and architecture choices. These choices decide if an agent ships in weeks or stalls for months.

We’ve deployed production agents across three domains at Islands.

We use QA flow for autonomous testing.

We use ReachSocial for LinkedIn engagement orchestration.

We use Shoreline for contract monitoring.

Each deployment started with different requirements, but we kept hitting the same core challenges. The third time through, the patterns became obvious.

This post shares what we learned from deploying agents three times. It covers common failure modes, reusable orchestration patterns, and deployment structures. These structures support rapid iteration instead of endless pilots.

Customer service dominates, but workflow architecture matters from day one

Customer service was the most common agent use case at 26.5%. Research and data analysis followed at 24.4%. Source: LangChain State of AI Agents Report 2025.

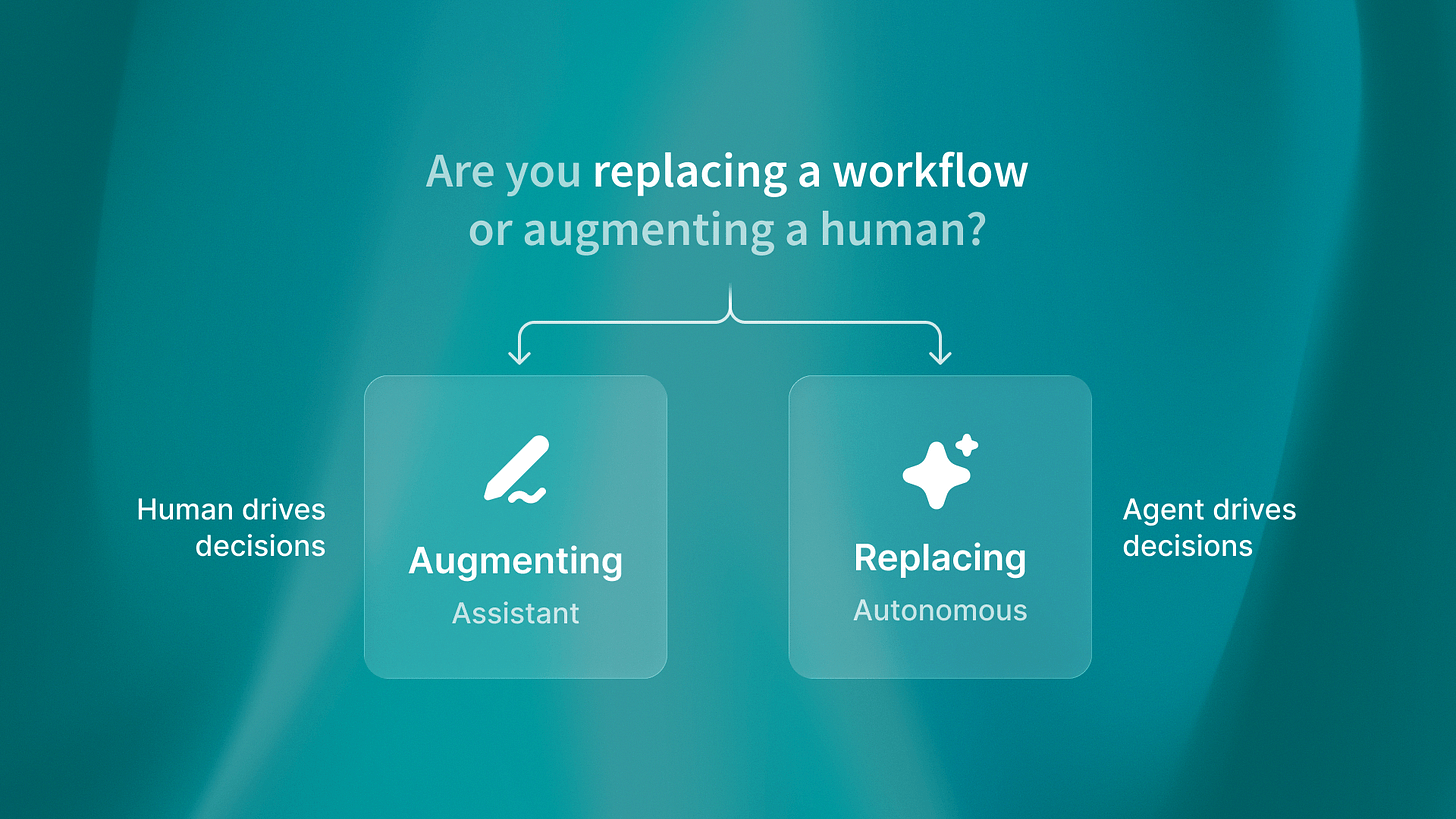

But here’s what the statistics don’t tell you: the use case matters less than whether you architect for workflow replacement from the start.

I was talking to the QA flow team last week about their deployment timeline. They started with a clear vision: autonomous test generation from Figma designs, no human QA engineers in the loop. That architectural decision drove everything else. They built state management for test execution history. They designed error handling for flaky tests. They created monitoring for false positives.

Contrast that with what we see in customer service deployments. Teams begin with, “let’s help agents draft replies,” then realize in six months they must handle escalations.

They also need to keep conversation context across channels. They must also connect with existing ticketing systems. They’re retrofitting autonomy into an assistant architecture.

The pattern is simple: decide whether you’re replacing a workflow or augmenting humans. That decision determines your orchestration needs, state management approach, and monitoring strategy. We wrote about this distinction in depth, but the short version: assistants need different architecture than autonomous agents.

Start with the end state workflow. If you can’t describe what fully autonomous execution looks like, you’re not ready to architect.

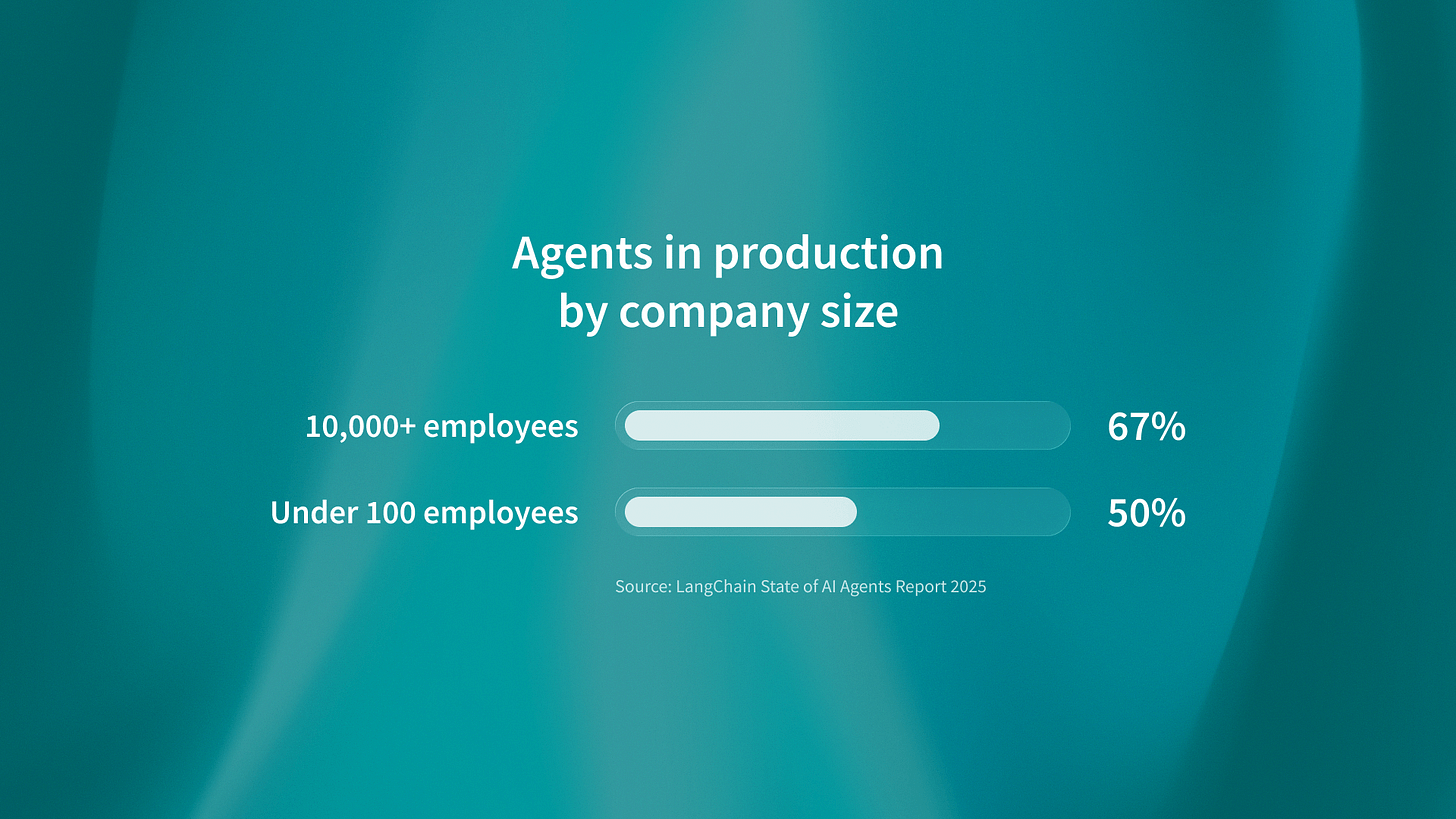

Enterprise deployment patterns differ dramatically from startup approaches

Organizations with over 10,000 employees have 67% of agents in production. Companies with under 100 employees have 50% (LangChain State of AI Agents Report 2025). That gap isn’t about resources. It’s about structured governance creating faster deployment.

Here’s what we discovered deploying ReachSocial: larger organizations move faster once they establish approval frameworks. They define what “production-ready” means upfront. Security reviews happen in parallel with development, not after. Testing protocols are standardized.

Smaller companies iterate faster on prototypes but stall at the production boundary. No one wants to be the person who approved the agent that leaked customer data. No established process for evaluating autonomous system risk. Every deployment becomes a custom negotiation with legal, security, and compliance.

The reusable pattern: establish your production criteria before you build. What security requirements apply? What audit logs do you need? How do you handle graceful degradation? Document these as deployment checklists, not ad-hoc reviews.

ReachSocial built their compliance framework during development, not after. Result: first production deployment in eight weeks instead of the six months we saw at companies retrofitting governance.

Security, Compliance, and Auditability aren’t bolt-on features

75% of tech leaders rank security, compliance, and auditability as top needs for agent deployment. (KPMG Q4 AI Pulse Survey 2026) But here’s the gap: most teams treat these as post-development concerns.

I noticed something when reviewing Shoreline’s architecture: they logged every contract analysis decision from day one. Not because they expected an audit. Debugging autonomous systems requires understanding what the agent saw. It also requires knowing what the agent decided. You also need to know why it made those decisions.

That logging infrastructure became their compliance story. When legal asked, “How do we confirm the agent caught all key changes?” They had full audit trails. These showed input documents, extracted clauses, confidence scores, and decisions. The architecture for debugging doubled as the architecture for compliance.

The pattern we see across deployments is clear: security and audit requirements should guide your first architecture.

They should not limit it later. If you’re using Temporal for orchestration, log workflow execution at the step level. If you’re using PostgreSQL for state, track state transitions with timestamps and reasons. If you’re calling external APIs, record requests and responses.

This isn’t overhead. This is how you debug production agents. The compliance value is a bonus.

Reusable orchestration patterns accelerate deployment across use cases

We hit the same architectural challenges three times: state management, error handling, and human-in-the-loop workflows. By the third deployment, we had reusable patterns that reduced implementation time by 40-60%.

State management: PostgreSQL with explicit state machines. Every agent workflow maps to defined states (pending, in-progress, completed, failed, requires-review). State transitions are logged. Current state determines available actions. This pattern worked identically for QA flow test execution, ReachSocial campaign orchestration, and Shoreline contract monitoring.

Error handling: retry with exponential backoff for temporary failures. Escalate to human review for unclear cases. Use clear failure modes with context. We learned this while deploying the QA flow. Test generation might fail if the Figma file is malformed (escalate). It might also fail if the LLM API times out (retry). The distinction matters.

Human-in-the-loop: review queues with context preservation. When an agent cannot proceed on its own, it creates a review task. It includes its current state, the decision point, and relevant context. A human makes the call, and the agent resumes with that input. Same pattern across all three deployments.

The competitive advantage: companies deploying their second or third agent move 3-4x faster than their first deployment. They’ve solved orchestration once. If you’re deploying your first agent, adopt proven patterns instead of pioneering mistakes others have already made.

The production gap is where most agent projects fail

Here’s what separates successful deployments from stalled pilots: planning for autonomy, monitoring, and graceful degradation from day one.

I was reviewing a failed agent deployment last month. The team built a beautiful demo: natural language to database queries, impressive accuracy, stakeholders loved it. Six months later, still not in production. The gap is that the system does not handle unclear queries. It does not monitor for accuracy drift. It also has no fallback when the agent cannot parse the user’s intent.

They built for the happy path. Production requires handling the unhappy paths.

Our cross-company analysis shows that teams starting with production-ready architecture ship 3-4x faster than those retrofitting demos. What does production-ready mean?

Temporal for orchestration (handles retries, timeouts, state management)

PostgreSQL for state (explicit state machines, audit trails)

Comprehensive logging (every decision, every API call, every state transition)

Monitoring from day one (Datadog or similar for latency, error rates, throughput)

Graceful degradation (fallback to human review instead of crashes)

QA flow launched with all of this. Not because they over-engineered, but because they knew demos don’t ship. The economics of agent deployment only work if you reach production.

What this means for your deployment timeline

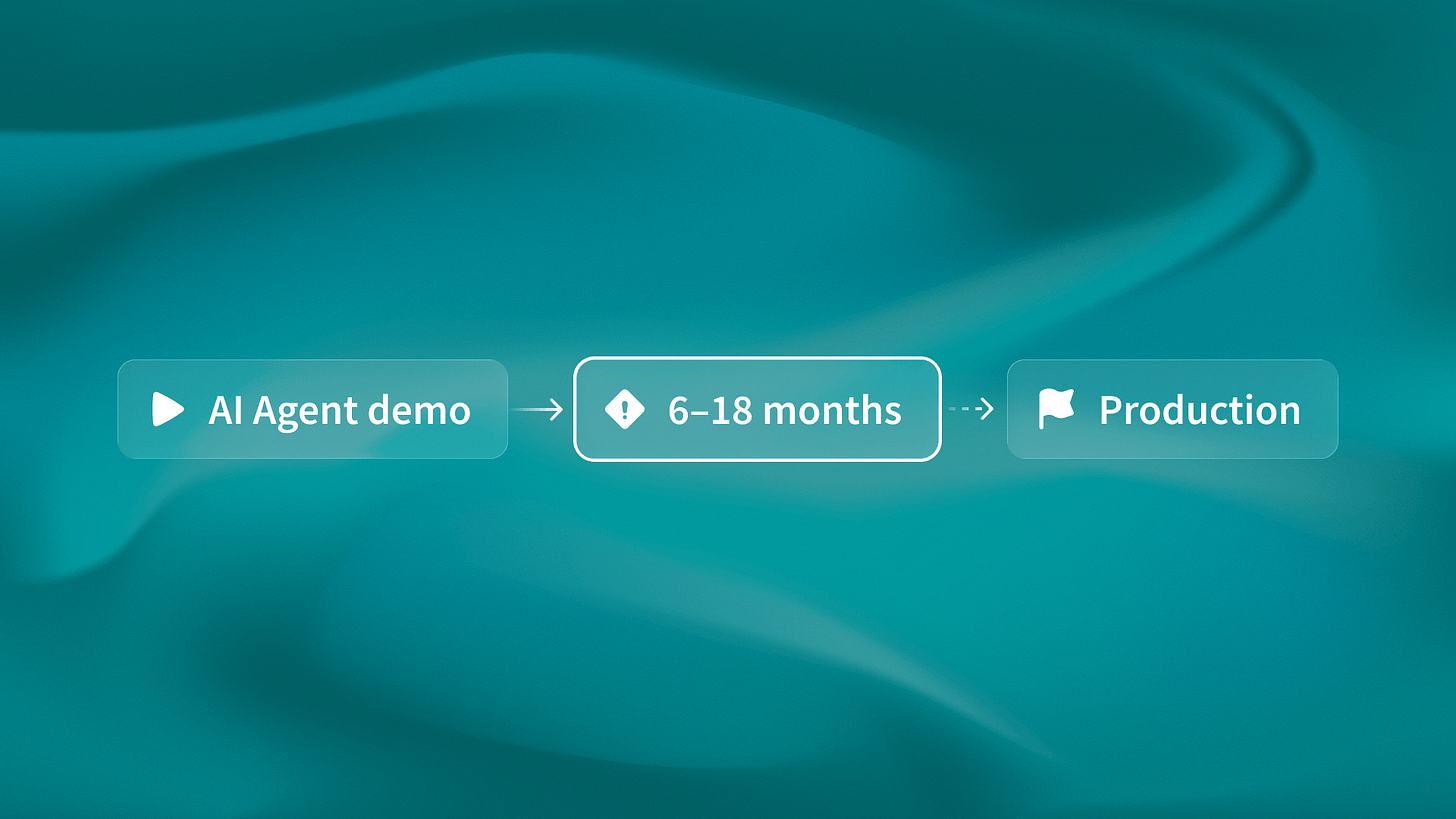

Companies that deploy agents as one-off custom projects will waste 12 to 18 months. They will repeat mistakes that others have already solved. The winners will be teams that use proven orchestration patterns, design for production from day one, and ship working systems in weeks. Their competitors will still be gathering requirements.

By 2026, the differentiation won’t be between companies with and without agents. It’ll be between those with production-ready autonomous systems and those stuck maintaining fragile prototypes. The predictions are clear: multi-agent orchestration, persistent memory, proactive problem detection. All of these require production-grade architecture.

The patterns exist. The question is whether you’ll learn from others’ deployments or repeat their failures.