The real math behind agent vs assistant investments

I keep seeing the same pattern in technical leadership meetings. Someone presents two AI investment options. One deploys assistants across the engineering team for productivity gains. The other builds autonomous agents to replace specific workflows.

Both have compelling demos. Both show impressive ROI projections. But the underlying economics are completely different.

Choosing the wrong option means either overspending on infrastructure you don’t need or underinvesting in automation that could build a competitive moat.

Let me walk you through the production economics that demos never reveal.

The ROI difference isn’t about percentages, it’s about what you’re measuring

Organizations project an average ROI of 171% from agentic AI deployments. U.S. enterprises forecast 192% returns. Those numbers sound similar to assistant productivity claims until you understand what they’re measuring.

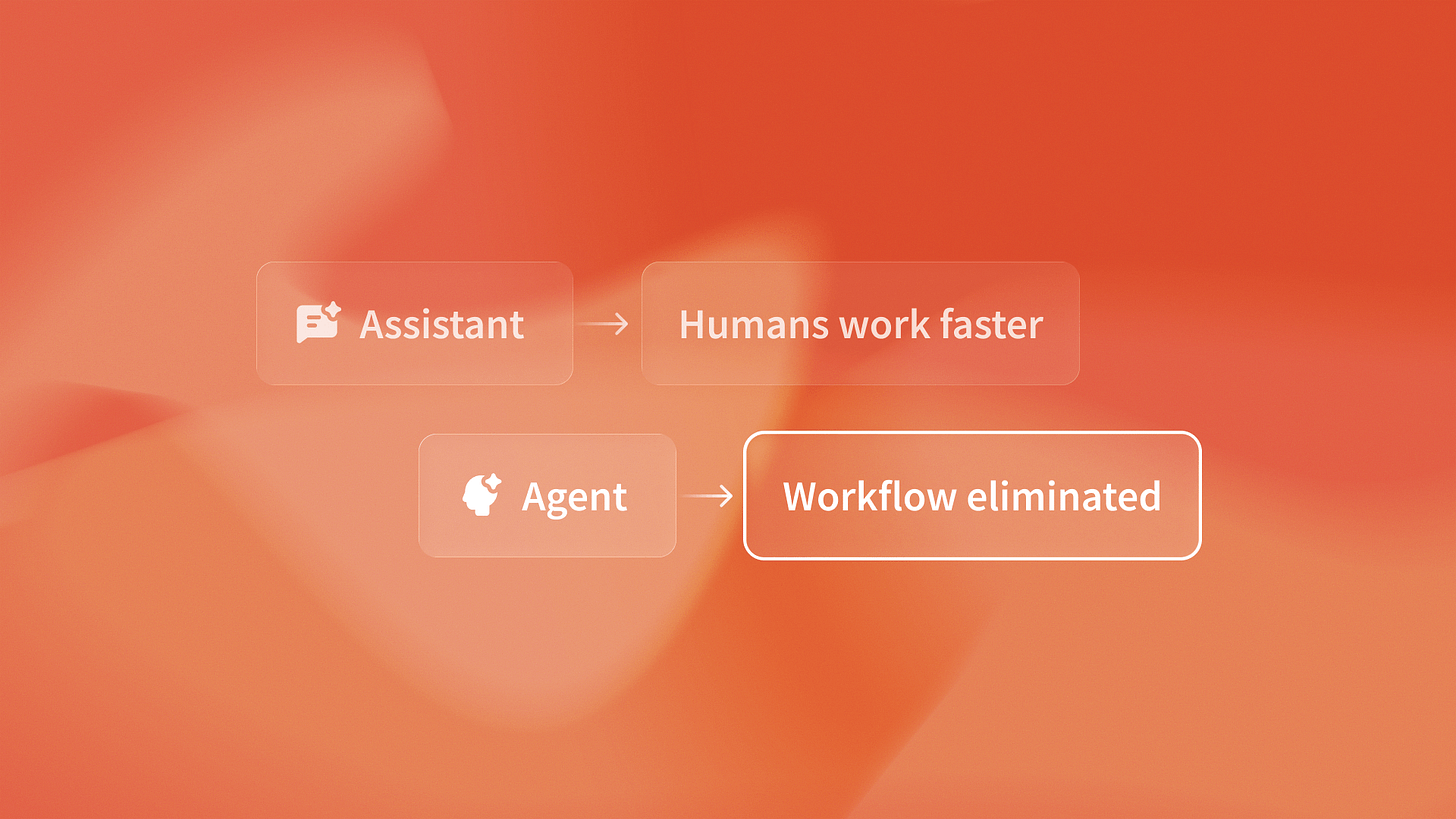

Assistants optimize labor costs. GitHub Copilot makes developers faster. ChatGPT reduces email drafting time. Salesforce Einstein surfaces insights quicker. The ROI comes from the same people doing more work in less time.

Agents eliminate labor categories entirely.

I was talking to the team at QA flow last week about their testing economics. They shared something that illustrates this perfectly. Their autonomous testing platform doesn’t make QA engineers faster at writing tests. It removes the need for humans to write regression tests at all. The agent monitors GitHub commits, generates test cases from design files, executes them, and flags issues without human intervention.

That’s not a productivity multiplier. That’s workflow elimination.

Companies using AI agents report revenue gains of 3% to 15%. They also report a 10% to 20% increase in sales ROI. Some achieve up to 37% cost reductions. These aren’t efficiency gains. They’re compound effects from removing entire job functions and reallocating that capacity to higher-value work.

Development costs reveal the architectural difference

Here’s where technical leaders often misjudge the investment required.

AI agent development costs range from $20,000 to $60,000 depending on complexity. Autonomous decision-making agents start at $80K+. Those numbers represent fundamentally different engineering challenges than deploying assistants.

Assistants integrate into existing workflows. You’re adding a feature layer on top of human decision-making. The engineering complexity is bounded: API integration, prompt engineering, response formatting. Development timelines are measured in weeks.

Agents replace workflows. You’re building perception, reasoning, action, and learning systems that operate without human oversight. The engineering complexity is unbounded: error handling, state management, feedback loops, graceful degradation. Development timelines are measured in months.

I noticed something interesting at Islands when we started building agents for client projects. The first 60% of development time goes to making the agent work in the happy path. The remaining 40% goes to handling edge cases, failure modes, and recovery scenarios in production.

That’s the cost structure demos don’t reveal. The difference between “works in a demo” and “runs autonomously in production” is often 2-3x the initial development estimate.

The breakeven calculation changes when you eliminate vs optimize

Most technical leaders estimate assistant ROI using simple labor math. If the tool saves 2 hours per engineer each week at $80 per hour, it creates $160 of value per engineer per week. You break even when the subscription cost, divided by hours saved, equals the engineer’s hourly cost.
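As a rough sketch, the labor math above fits in a few lines of Python. The function names are hypothetical and the subscription is assumed to be priced per engineer per week; only the 2 hours and $80 per hour come from the example above.

```python
def weekly_value_per_engineer(hours_saved_per_week: float, hourly_cost: float) -> float:
    """Dollar value of the time an assistant saves one engineer in a week."""
    return hours_saved_per_week * hourly_cost


def breaks_even(weekly_subscription_cost: float,
                hours_saved_per_week: float,
                hourly_cost: float) -> bool:
    """True when the cost per saved hour is at or below the engineer's hourly cost."""
    cost_per_saved_hour = weekly_subscription_cost / hours_saved_per_week
    return cost_per_saved_hour <= hourly_cost


# Figures from the example: 2 hours saved per week at $80 per hour.
ceiling = weekly_value_per_engineer(hours_saved_per_week=2, hourly_cost=80)
print(f"Break-even subscription ceiling: ${ceiling:.0f} per engineer per week")
```

Any subscription priced under that ceiling pays for itself on labor savings alone, which is why assistant business cases tend to be easy to approve.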

Agent ROI requires different math entirely.

Let’s use a concrete example. Say you are deciding between building an autonomous compliance monitoring agent and deploying an assistant to help your compliance team work faster.

The assistant scenario: 3 compliance analysts at $75K each spend 15 hours per week apiece reviewing contract updates. An AI assistant reduces that to 10 hours per week each, so you save 15 hours weekly across the team. At $36/hour effective cost, that’s $540 per week or about $28K annually. Your assistant tool costs $5K per year, so the net gain is $23K and ROI is 460%.
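Here is a minimal sketch of that arithmetic, in case you want to plug in your own team's numbers. The 2,080 working hours per year used to turn salary into an hourly rate is my assumption (40 hours times 52 weeks); it happens to reproduce the $36/hour figure. The variable names are illustrative.

```python
ANALYSTS = 3
SALARY = 75_000                 # per analyst, per year
HOURS_BEFORE = 15               # review hours per analyst per week
HOURS_AFTER = 10                # with the assistant
TOOL_COST_PER_YEAR = 5_000
WORK_HOURS_PER_YEAR = 2_080     # assumption: 40 hours x 52 weeks
WEEKS_PER_YEAR = 52

# Effective hourly cost, rounded to the dollar as in the example.
hourly_cost = round(SALARY / WORK_HOURS_PER_YEAR)

weekly_hours_saved = ANALYSTS * (HOURS_BEFORE - HOURS_AFTER)
weekly_savings = weekly_hours_saved * hourly_cost
annual_savings = weekly_savings * WEEKS_PER_YEAR

# ROI = net gain divided by cost, expressed as a percentage.
roi_pct = (annual_savings - TOOL_COST_PER_YEAR) / TOOL_COST_PER_YEAR * 100

print(f"Hourly cost: ${hourly_cost}")
print(f"Weekly savings: ${weekly_savings}")
print(f"Annual savings: ${annual_savings}")
print(f"ROI: {roi_pct:.0f}%")
```

The unrounded result is about 462%. Rounding annual savings down to $28K, as the example does, gives the 460% figure.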

The agent scenario: You invest $50K to build an autonomous agent. It monitors contracts, flags needed updates, and drafts compliance memos. Development takes 4 months. But once deployed, you reallocate 2 of those 3 analysts to higher-value risk assessment work. You’re not saving 5 hours per analyst per week. You’re eliminating 30 hours per week of routine monitoring.

The breakeven timeframe shifts from months to quarters. But the total value captured is 4-5x higher, because you are creating capacity for revenue-generating work, not just speeding up existing tasks.
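To see why the timeframe moves from months to quarters, here is a simplified payback sketch using the two scenarios above. It rests on several assumptions of mine: freed hours are valued at the same $36/hour, the assistant's $5K annual fee is treated as a single first-year cost, and the agent's ongoing operating costs and 4-month build time are left out, so the real agent payback would be longer.

```python
HOURLY_COST = 36          # effective analyst cost from the example
WEEKS_PER_QUARTER = 13


def payback_weeks(cost: float, hours_freed_per_week: float,
                  hourly_cost: float = HOURLY_COST) -> float:
    """Weeks of labor savings needed to recover the given cost."""
    weekly_savings = hours_freed_per_week * hourly_cost
    return cost / weekly_savings


# Assistant: $5K per year, 15 hours per week saved across the team.
assistant_weeks = payback_weeks(cost=5_000, hours_freed_per_week=15)

# Agent: $50K build, 30 hours per week of monitoring eliminated.
agent_weeks = payback_weeks(cost=50_000, hours_freed_per_week=30)

print(f"Assistant payback: {assistant_weeks:.1f} weeks")
print(f"Agent payback: {agent_weeks:.1f} weeks "
      f"({agent_weeks / WEEKS_PER_QUARTER:.1f} quarters)")
```

On labor savings alone the agent takes roughly five times as long to pay back. The case for it depends on what the reallocated analysts produce, which this sketch deliberately does not model.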

Companies implementing agents report revenue increases between 3% and 15%. That top-line growth comes from shifting removed capacity to customer-facing work, not from making back-office tasks a bit faster.

Production costs determine actual ROI, not development estimates

Here’s what catches technical leaders off guard: the ongoing operational costs of running agents in production.

Infrastructure costs. LLM API calls. Monitoring systems. Error handling and recovery. These weren’t in the initial business case because the demo ran on a laptop with cached responses.

I was reviewing the production economics at Timecapsule recently and they shared their real-time profitability monitoring data. Their time tracking platform uses agents to automatically categorize billable hours and flag projects drifting toward unprofitability. The agent makes roughly 15,000 API calls per day across their client base. At $0.002 per call for Claude Sonnet, that’s $30 daily or $900 monthly just in LLM costs.

Add infrastructure ($200/month), monitoring ($150/month), and engineering maintenance (4 hours per week at $150/hour, or roughly $2,400/month). Your monthly operational cost is $3,650. For their use case, the agent eliminates 80 hours of manual profitability analysis monthly across clients. At $75/hour, that’s $6,000 in eliminated labor. Net monthly value: $2,350. Annual ROI: 177%.
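A small sketch of that monthly cost model is below, so you can swap in your own call volumes and rates. It assumes a 30-day month and 4 maintenance weeks per month, which is what the figures above imply; the dictionary keys and variable names are mine, not Timecapsule's.

```python
DAYS_PER_MONTH = 30     # assumption implied by $30/day -> $900/month
WEEKS_PER_MONTH = 4     # assumption implied by $600/week -> $2,400/month

monthly_costs = {
    "llm_api": 15_000 * 0.002 * DAYS_PER_MONTH,   # calls/day x $/call x days
    "infrastructure": 200,
    "monitoring": 150,
    "maintenance": 4 * 150 * WEEKS_PER_MONTH,     # hours/week x $/hour x weeks
}

total_monthly_cost = sum(monthly_costs.values())

# Labor eliminated: 80 hours of manual analysis per month at $75/hour.
monthly_labor_eliminated = 80 * 75
net_monthly_value = monthly_labor_eliminated - total_monthly_cost

for name, cost in monthly_costs.items():
    print(f"{name:>15}: ${cost:,.0f}")
print(f"{'total':>15}: ${total_monthly_cost:,.0f}")
print(f"Net monthly value: ${net_monthly_value:,.0f}")
```

Notice that engineering maintenance, not LLM usage, is the largest line item in this breakdown. That is the cost most likely to be missing from a demo-stage business case.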

That 177% is close to the 171% average ROI that organizations project, because it accounts for total cost of ownership, not just development costs.

Most assistant deployments show higher ROI percentages because operational costs are minimal. You’re paying subscription fees, not running infrastructure. But the absolute dollar value of ROI is lower because you’re optimizing labor, not eliminating it.

The competitive advantage math changes everything

Here’s where the business case diverges most dramatically between assistants and agents.

Assistants provide parity improvements. If your competitors also deploy GitHub Copilot or ChatGPT, you maintain relative position but gain no competitive edge. The ROI is real but shared across the industry.

Agents create moats through automation.

When QA flow’s automated tests find regression bugs without human help, QA engineers get that time back, and the team ships faster than competitors who still rely on manual testing cycles. That speed advantage compounds: faster shipping means more iterations, more customer feedback, more feature development.

This is the other source of the 3% to 15% revenue increases that companies implementing agents report. That top-line growth comes from doing things competitors can’t do at scale, not from doing the same things slightly faster.

I wrote about this in more detail here, but the core insight is this: assistants make your existing processes better. Agents make entirely new processes possible.

The ROI calculation should include the value of capabilities your competitors lack, not just labor cost savings.

Making the right architectural choice

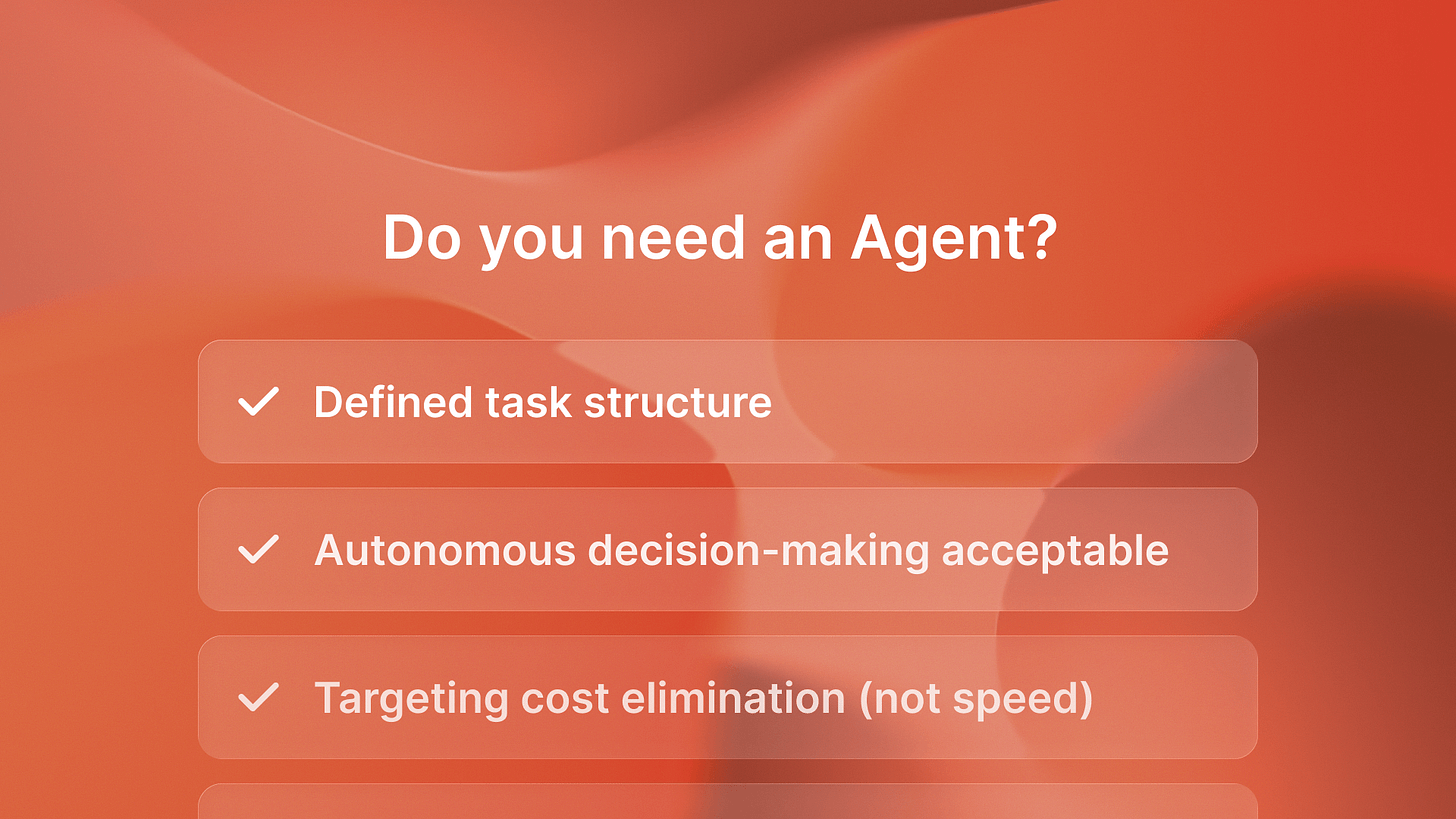

Both assistants and agents have valid use cases. The mistake is conflating their economics.

Use assistants when you want to enhance human decision-making without changing workflow structure. The ROI comes from productivity gains with minimal operational overhead. Break-even happens in months. Total value captured is measured in labor hour savings.

Use agents when you want to eliminate workflows entirely and create competitive moats through automation. The ROI comes from workflow elimination and new capabilities. Break-even happens in quarters. Total value captured is measured in labor elimination plus revenue growth from speed advantages.

The companies that understand these different economic models will justify the right investments and avoid expensive architectural mistakes. Next week I’ll explain how to build your first agent business case using this framework. I’ll include clear cost breakdowns and ROI projections you can share with your board.

If you want more details about costs right now, I wrote a full breakdown. You can read it here: agent economics and optimization strategies.