The hidden tax on test automation maintenance

You automated your tests. Maintenance costs went up, not down.

Your team invested in Selenium, Cypress, or Playwright to reduce QA costs and scale faster.

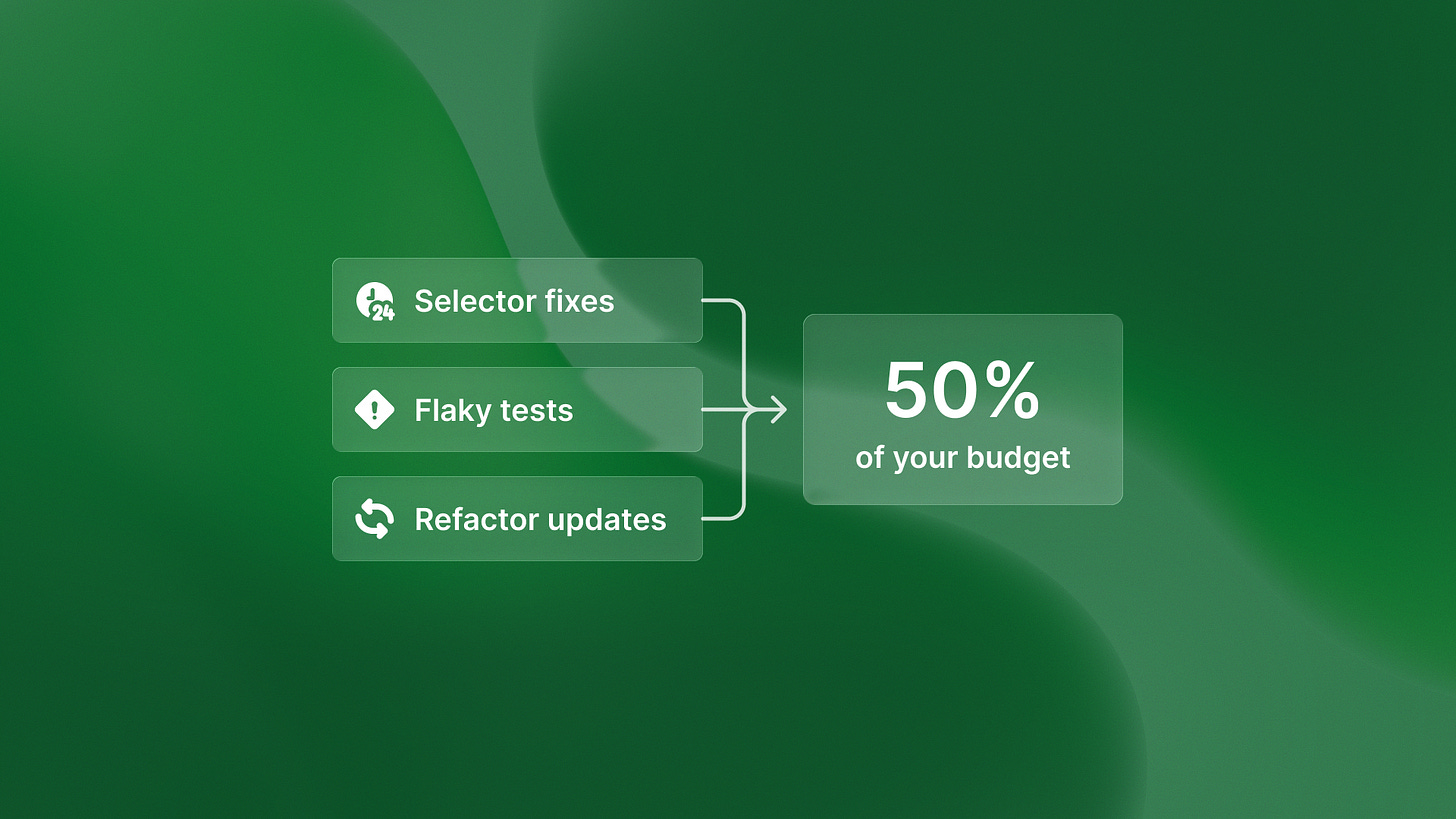

Instead, you’re spending 20+ hours weekly updating broken test scripts after every refactor. Up to 50% of your automation budget now goes to script maintenance, according to the World Quality Report as cited by IT Convergence (2025).

Traditional test automation doesn’t solve the QA scaling problem. It shifts the bottleneck from test execution to test maintenance.

The hidden cost structure of test automation maintenance

That maintenance budget, up to 50% of the total, breaks down into specific time sinks: updating CSS selectors after UI changes, fixing flaky tests that fail intermittently, reworking test code whenever application code is refactored, and investigating false positives that waste developer time.

A Rainforest QA Survey found that 55% of teams using Selenium, Cypress, and Playwright spend at least 20 hours per week creating and maintaining automated tests. That’s 25-50% of a full-time QA automation engineer’s capacity just maintaining existing tests, not writing new coverage.
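One way to read the 25-50% range is as simple arithmetic. The sketch below assumes a 40-hour full-time week and assumes the 20 hours are shared evenly by one or two engineers; neither assumption comes from the survey, and `capacityShare` is a hypothetical helper.

```typescript
// Assumption (not from the survey): a full-time week is 40 hours.
const FULL_TIME_HOURS_PER_WEEK = 40;

// Fraction of each engineer's week consumed when `weeklyTestHours`
// is split evenly across `engineerCount` engineers.
function capacityShare(weeklyTestHours: number, engineerCount: number): number {
  if (engineerCount <= 0) {
    throw new Error("engineerCount must be positive");
  }
  return weeklyTestHours / (engineerCount * FULL_TIME_HOURS_PER_WEEK);
}

// "At least 20 hours per week" is the figure reported in the survey.
const surveyedWeeklyHours = 20;

console.log(capacityShare(surveyedWeeklyHours, 1)); // 0.5  (50% of one engineer)
console.log(capacityShare(surveyedWeeklyHours, 2)); // 0.25 (25% of each of two engineers)
```

Because 20 hours is the survey's lower bound, these shares are floors under the stated assumptions, not estimates.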

The maintenance burden compounds as your codebase grows. As engineering teams scale from 50 to 500 engineers, test suite size grows proportionally, but maintenance effort grows faster than the suite itself.

More developers making changes means more refactors, more test breakage, and more time spent keeping tests in sync with implementation changes rather than catching new bugs.

Why traditional automation doesn’t solve the scaling problem

Automation solves test execution speed by running tests faster than humans can. It doesn’t solve test definition scalability because humans still write every test case and maintain them as code changes.

You’ve moved from “we can’t manually test everything” to “we can’t maintain all our automated tests.” The constraint moved; it didn’t disappear.

Even AI-assisted script writing, such as pairing Playwright with an AI code generator or using GitHub Copilot to draft tests, doesn’t fix this.

These tools help write scripts faster, but humans still define what to test and scripts still break during refactors. They’re productivity tools for the same paradigm, not a different approach to testing architecture.

Why tests break: implementation vs. intent

Implementation-based testing validates how the system works by checking CSS selectors, DOM structure, and API endpoint paths.

Tests break when implementation details change even if behavior remains correct.

Here’s a concrete example: you refactor a button from one component library to another.

The DOM structure changes completely, breaking all tests that reference that button’s CSS class, even though the user workflow (clicking the button to submit a form) hasn’t changed at all.

Intent-based testing validates what the system should do according to design specifications, not how it’s implemented in code.

Tests validate behavior and user intent, which survives refactors.
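The button refactor can be sketched with a toy model of a page. This is an illustration only: `UiNode`, the class names, and both lookup functions are hypothetical, and no real browser or test framework is involved. In Playwright terms, the contrast is roughly between `page.locator('.lib-a-button')` and `page.getByRole('button', { name: 'Submit' })`.

```typescript
// A deliberately tiny stand-in for a rendered page. Each node records
// implementation details (tag, CSS class) and user-facing semantics
// (accessible role and label). All names here are hypothetical.
interface UiNode {
  tag: string;
  cssClass: string;
  role: string;
  label: string;
}

// The same submit button before and after swapping component libraries.
const pageBeforeRefactor: UiNode[] = [
  { tag: "button", cssClass: "lib-a-button primary", role: "button", label: "Submit" }
];
const pageAfterRefactor: UiNode[] = [
  { tag: "div", cssClass: "lib-b-btn-root", role: "button", label: "Submit" }
];

// Implementation-based lookup: coupled to a CSS class name.
function foundByClass(page: UiNode[], className: string): boolean {
  return page.some((n) => n.cssClass.split(" ").indexOf(className) !== -1);
}

// Intent-based lookup: coupled to what the user perceives.
function foundByRole(page: UiNode[], role: string, label: string): boolean {
  return page.some((n) => n.role === role && n.label === label);
}

console.log(foundByClass(pageBeforeRefactor, "lib-a-button")); // true
console.log(foundByClass(pageAfterRefactor, "lib-a-button")); // false: the class is gone
console.log(foundByRole(pageBeforeRefactor, "button", "Submit")); // true
console.log(foundByRole(pageAfterRefactor, "button", "Submit")); // true: the behavior is unchanged
```

The class-based check fails after the refactor even though nothing a user can see has changed, which is the false failure described above.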

Tools like QA flow use this approach, generating tests from Figma specs rather than implementation details.

The autonomous testing alternative

Automated testing runs scripts humans wrote. Autonomous testing generates tests from design specs and commit messages, executes them, and creates bug tickets with zero human-written test cases.

When tests are generated from design intent rather than implementation details, refactors don’t require updating test scripts.

The same Figma spec generates valid tests for both the old and new implementation because it’s testing “form should have email field, submit button, success message” regardless of whether you implement it with React Hook Form, Formik, or vanilla HTML.
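As a rough sketch of that idea, the check below compares a design-level requirement list against what each implementation exposes to a user. It does not represent how QA flow or any other product works internally; `Requirement`, `RenderedForm`, and `missingRequirements` are hypothetical names, and the forms are hand-written descriptions rather than real rendered pages.

```typescript
// Hypothetical design-level spec: what the form must offer, not how it is built.
type Requirement = "email field" | "submit button" | "success message";

const signupFormSpec: Requirement[] = ["email field", "submit button", "success message"];

// Hypothetical description of what a rendered implementation exposes to a user.
interface RenderedForm {
  builtWith: string; // implementation detail, never inspected by the check
  userVisible: Requirement[]; // behavior a user can observe
}

// Returns the requirements from the spec that the implementation does not provide.
function missingRequirements(spec: Requirement[], form: RenderedForm): Requirement[] {
  return spec.filter((req) => form.userVisible.indexOf(req) === -1);
}

const reactHookFormVersion: RenderedForm = {
  builtWith: "React Hook Form",
  userVisible: ["email field", "submit button", "success message"]
};
const vanillaHtmlVersion: RenderedForm = {
  builtWith: "vanilla HTML",
  userVisible: ["submit button", "email field", "success message"]
};
// A regression: the success message was dropped during a rewrite.
const regressedVersion: RenderedForm = {
  builtWith: "Formik",
  userVisible: ["email field", "submit button"]
};

console.log(missingRequirements(signupFormSpec, reactHookFormVersion).length); // 0
console.log(missingRequirements(signupFormSpec, vanillaHtmlVersion).length); // 0
console.log(missingRequirements(signupFormSpec, regressedVersion).length); // 1
```

The check never reads `builtWith`, so swapping libraries leaves it untouched, while a genuine behavior regression still fails.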

The qaflow.com/audit tool analyzes your existing test coverage and identifies where implementation-based tests create maintenance debt.

QA automation engineers stop spending 20 hours weekly maintaining test scripts and redeploy that time to exploratory testing, UX validation, and domain-specific edge cases that actually require human judgment.

What this means for your automation strategy

Your existing Selenium, Cypress, or Playwright infrastructure has value. It’s just limited by the implementation-based paradigm, in which maintenance burden scales with codebase complexity.

You have two choices: continue scaling traditional automation and accept a maintenance tax of up to 50%, or shift to autonomous testing that eliminates the maintenance burden by testing intent instead of implementation.

The maintenance tax isn’t a process problem or a tooling problem. It’s an architectural problem. Testing implementation details guarantees maintenance burden that scales with codebase complexity.

Testing intent from design specs eliminates that tax because behavior survives refactors. That’s not automation. That’s leverage.