The $37 Billion Question Nobody's Asking

The $37 Billion Question Nobody’s Asking

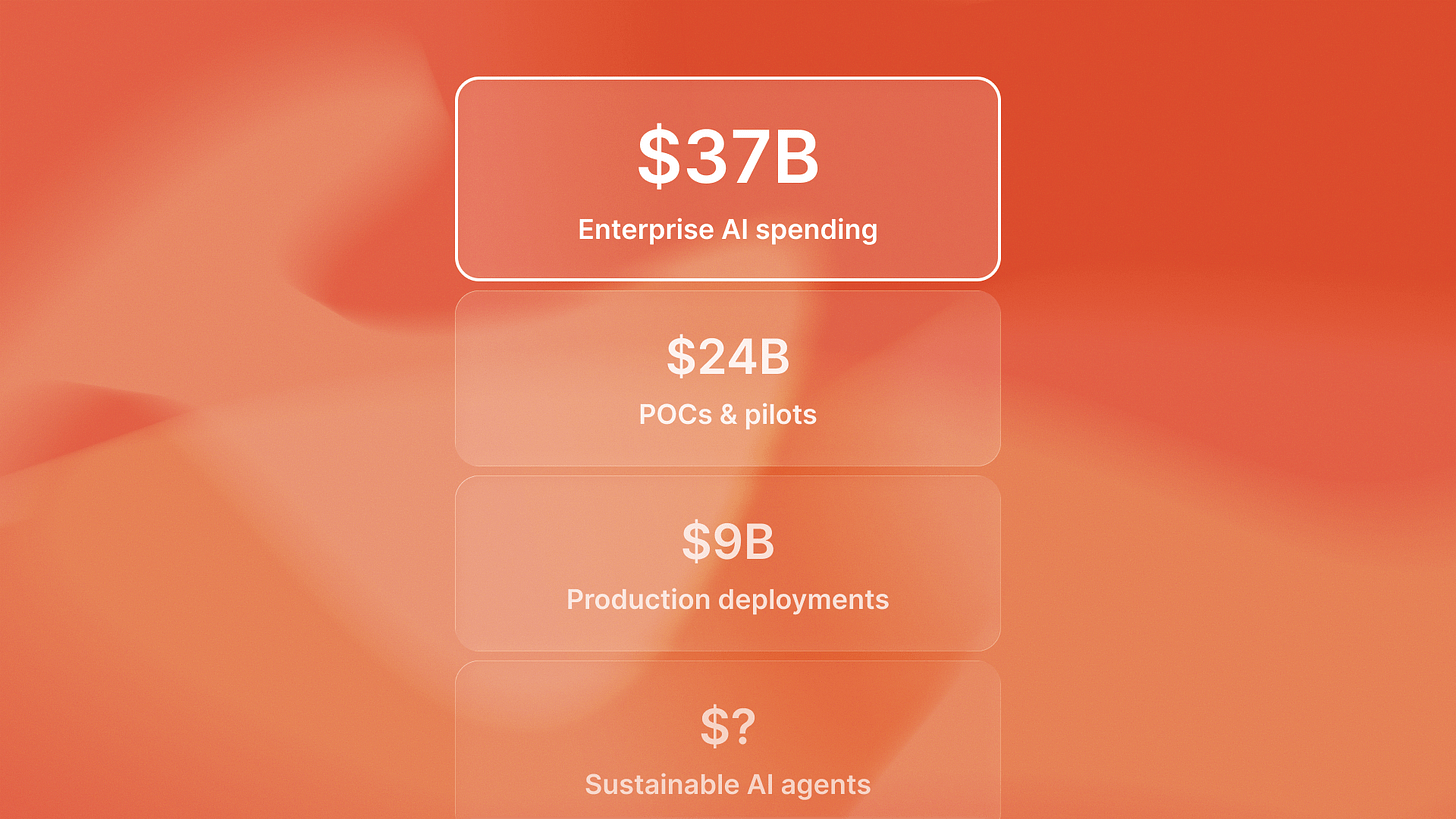

Everyone’s celebrating the AI spending surge to $37 billion. Almost no one’s talking about whether those dollars actually survive production.

Enterprise AI spending jumped from $11.5 billion in 2024 to $37 billion in 2025, a 3.2x year-over-year increase according to Menlo Ventures’ State of Generative AI report. Boardrooms are writing checks. CTOs are spinning up teams. Consultants are booking flights.

But here’s the part that keeps technical leaders awake: 59% of enterprise leadership expects measurable ROI from AI investments within 12 months. Meanwhile, only 16% of enterprise deployments qualify as true autonomous agents with planning and execution loops. The rest? Fixed-sequence workflows dressed up as AI transformation.

The math doesn’t work. Leadership expects agent-level returns on assistant-level architecture. And when the bills start arriving, most technical teams discover they’ve been flying blind on production economics.

The Cost Model Nobody Gave You

February 2025. A Series B fintech company launches their first autonomous compliance monitoring agent. The POC ran on $400/month in LLM API costs. Leadership approved the production budget: $2,000/month with room to scale.

Three months later, the real number: $8,700/month. Not because the agent failed. Because nobody mapped the full cost structure before deployment.

Here’s what actually costs money in production autonomous systems, based on patterns we’re seeing across portfolio deployments:

LLM API costs: The visible expense. GPT-4 at $0.03/1K input tokens, $0.06/1K output tokens. Claude Opus at $0.015/1K input, $0.075/1K output. For a system processing 2 million tokens monthly, you’re looking at $2,000-3,000 in pure inference costs. This is what everyone budgets for. This is not what breaks budgets.

Observability infrastructure: The hidden tax. Production agents need logging, tracing, error tracking, and performance monitoring across every decision loop. We’re seeing observability overhead run 15-25% of total operational costs. Tools like Langsmith, Helicone, or custom logging infrastructure. For that same 2M token system, add another $500-800/month. Nobody mentions this in the pitch deck.

Error recovery systems: The reliability premium. Autonomous agents fail differently than traditional software. They need retry logic, fallback models, human-in-loop escalation paths, and graceful degradation strategies. QA flow, our autonomous testing platform, dedicates 20% of infrastructure spend to error handling and recovery orchestration. That’s not waste - that’s what keeps the system from spiraling into expensive failure loops.

Multi-agent orchestration: The coordination overhead. When you move beyond single-agent systems, you need state management, message queues, coordination layers, and conflict resolution logic. This adds both infrastructure costs (Redis, message brokers, state stores) and additional LLM calls for inter-agent communication. One portfolio company running a three-agent system for customer support routing saw orchestration costs match their per-agent inference costs.

Human-in-loop operations: The operational reality. Even well-designed autonomous agents need human oversight for edge cases, escalations, and continuous learning feedback. Budget for tooling, dashboards, alert systems, and the FTE time to respond. This isn’t a technical cost - it’s an operational one that catches teams by surprise.

The fintech company’s $8,700/month breakdown: $2,800 in LLM inference, $1,400 in observability, $1,900 in error recovery infrastructure, $1,200 in orchestration overhead, $1,400 in human-in-loop tooling and time. The POC only measured the first line item.

Where the Optimization Leverage Actually Lives

August 2025. Reachsocial, our LinkedIn engagement platform, was burning $6,200/month on autonomous campaign orchestration. Leadership questioned whether the agent architecture was worth the overhead.

Six weeks later: $3,400/month. Same functionality. Same performance. Different optimization strategy.

Here’s what actually moves the cost needle based on production deployments:

Strategic model selection: Stop using GPT-4 for everything. We’re seeing 40-60% cost reductions by routing tasks to appropriate models. GPT-4 for complex reasoning and planning. GPT-3.5 or Claude Haiku for execution and formatting. Gemini Flash for high-volume classification. Reachsocial’s optimization: GPT-4 for campaign strategy ($800/month), Claude Haiku for content generation ($400/month), GPT-3.5 for execution monitoring ($300/month). Same intelligence, different cost structure.

Structured output engineering: Every retry loop costs money. Structured outputs with JSON schemas cut retry rates by 60-80% in our portfolio deployments. Instead of hoping the LLM returns valid JSON and catching failures, you guarantee format compliance upfront. This isn’t just about reliability - it’s direct cost reduction. One portfolio company eliminated $1,200/month in wasted retries by implementing structured outputs across their agent fleet.

Semantic caching strategies: Autonomous agents make repetitive decisions. Caching embeddings and responses for similar inputs cuts redundant API calls by 50-70%. We’re using semantic similarity matching to cache not just exact queries but conceptually similar ones. Timecapsule, our time tracking platform, implemented semantic caching for project categorization decisions. Result: 65% cache hit rate, $900/month savings on what had been a predictable, repetitive agent task.

Prompt compression: Tokens in, tokens out. Every word costs money. We’re seeing 30-40% cost reductions by compressing context windows without losing performance. Strip formatting. Remove redundant examples. Use abbreviations in system prompts. One agent’s system prompt went from 1,200 tokens to 680 tokens with identical output quality. At 50,000 calls per month, that’s real money.

Batch processing where possible: Real-time is expensive. Near-real-time is cheaper. For agents that don’t need instant response, batching requests and processing in 5-10 minute windows cuts costs through better resource utilization and reduced API overhead. Not every autonomous system needs millisecond latency.

Reachsocial’s $2,800 monthly savings: $1,100 from strategic model routing, $800 from structured outputs eliminating retries, $600 from semantic caching, $300 from prompt compression. The capability didn’t change. The cost structure did.

The ROI Pressure That Changes Everything

59% of enterprise leaders expect measurable ROI within 12 months. That timeline isn’t arbitrary - it’s the window between enthusiasm and budget cuts.

Here’s what that pressure creates: Technical leaders who understand production agent economics in 2025-2026 will scale autonomous systems while competitors stall on budget objections. The ones who treat AI spending as an R&D exercise will hit the 12-month mark with impressive demos and unsustainable burn rates. The ones who build realistic cost models now will justify continued investment with actual unit economics.

We’re seeing this play out across portfolio companies. The pattern that separates sustainable deployments from budget disasters: treating agent architecture as an economic decision from day one, not a technical curiosity you optimize later.

That means mapping the full cost structure before production. Understanding that LLM APIs are 30-50% of total cost, not 90%. Building optimization into the system design, not bolting it on when leadership questions the spend. Choosing models based on task requirements and cost profiles, not default to GPT-4 because it’s familiar.

The competitive implication: The winners in AI transformation won’t be those who spend the most on cutting-edge models. They’ll be the ones who optimize the fastest, who understand that production agent economics require different thinking than POC budgets, who build cost awareness into every architectural decision.

By late 2026, the gap between companies who mastered these economics and those who didn’t will be measurable in capability deployment speed. One group will be scaling agent fleets across operations. The other will still be justifying their first production deployment to skeptical CFOs holding spreadsheets that don’t add up.

The $37 billion spending surge creates opportunity. But only for technical leaders who understand what those dollars actually buy in production. Not demos. Not POCs. Not consultant promises. Sustainable autonomous systems that survive contact with real-world cost structures and deliver ROI that justifies continued investment.

That’s the question almost nobody’s asking. And the answer determines who’s still deploying agents 18 months from now.