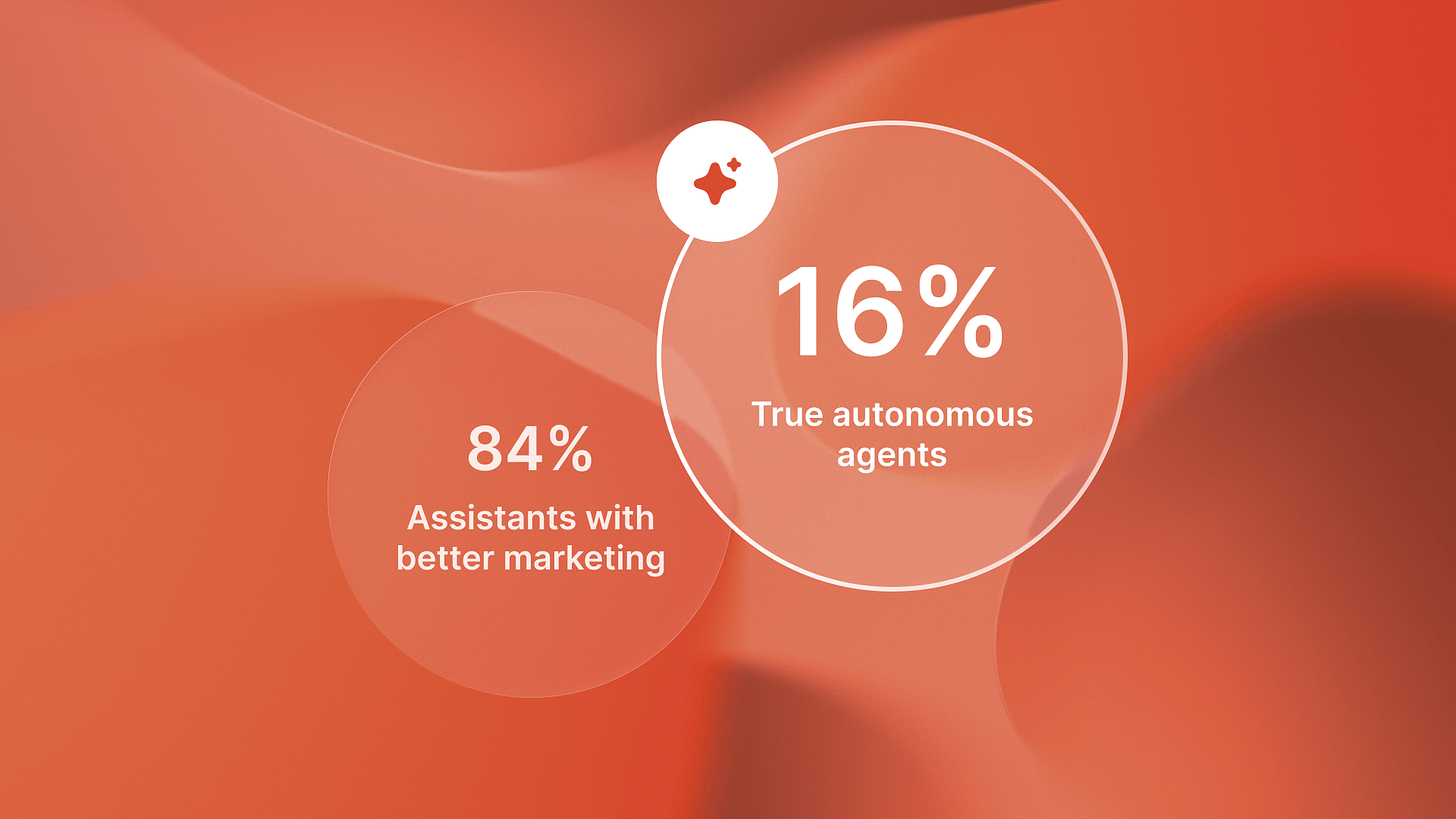

Only 16% of enterprise AI agents are actually autonomous

I’ve been reviewing production AI deployments for the past six months. Same pattern everywhere.

Companies show me their “AI agent” implementations. They’re proud of the productivity gains. Better email drafts. Faster code suggestions. Smarter insight surfacing.

That’s not an agent. That’s an assistant.

The data supports this, and it should worry every technical leader making AI architecture decisions today. Menlo Ventures analyzed enterprise and startup AI deployments in their 2025 State of Generative AI report. Only 16% of enterprise deployments qualified as true agents with planning, execution, feedback, and adaptation capabilities. The other 84% were assistants pretending to be something they weren’t.

Here’s why this matters. Gartner predicts 40% of enterprise applications will embed AI agents by the end of 2026, up from less than 5% in 2025. That’s explosive growth. But most companies building toward that 40% are architecting the wrong system. They’re investing in AI transformation while creating expensive technical debt.

The distinction between assistants and agents isn’t semantic. It’s architectural. And it determines whether you get productivity gains or workflow replacement.

What most companies are building

GitHub Copilot is an assistant. ChatGPT is an assistant. Salesforce Einstein is an assistant.

They enhance human work. They make you faster at tasks you were already doing. Code suggestions speed up development. Email drafts reduce writing time. Insight surfacing helps decision-making.

Every one of these tools requires human intervention for execution. You still write the code. You still send the email. You still make the decision.

I was talking with a Series B engineering team last month. They’d built what they called an “AI agent” for customer support ticket routing. Smart system. Used LLMs to understand ticket content, categorize issues, suggest relevant knowledge base articles.

When I asked who actually routed the tickets, they paused. Support team still did that manually. The AI just made better suggestions.

That’s perception without action. It’s assistant architecture.

The four layers most deployments skip

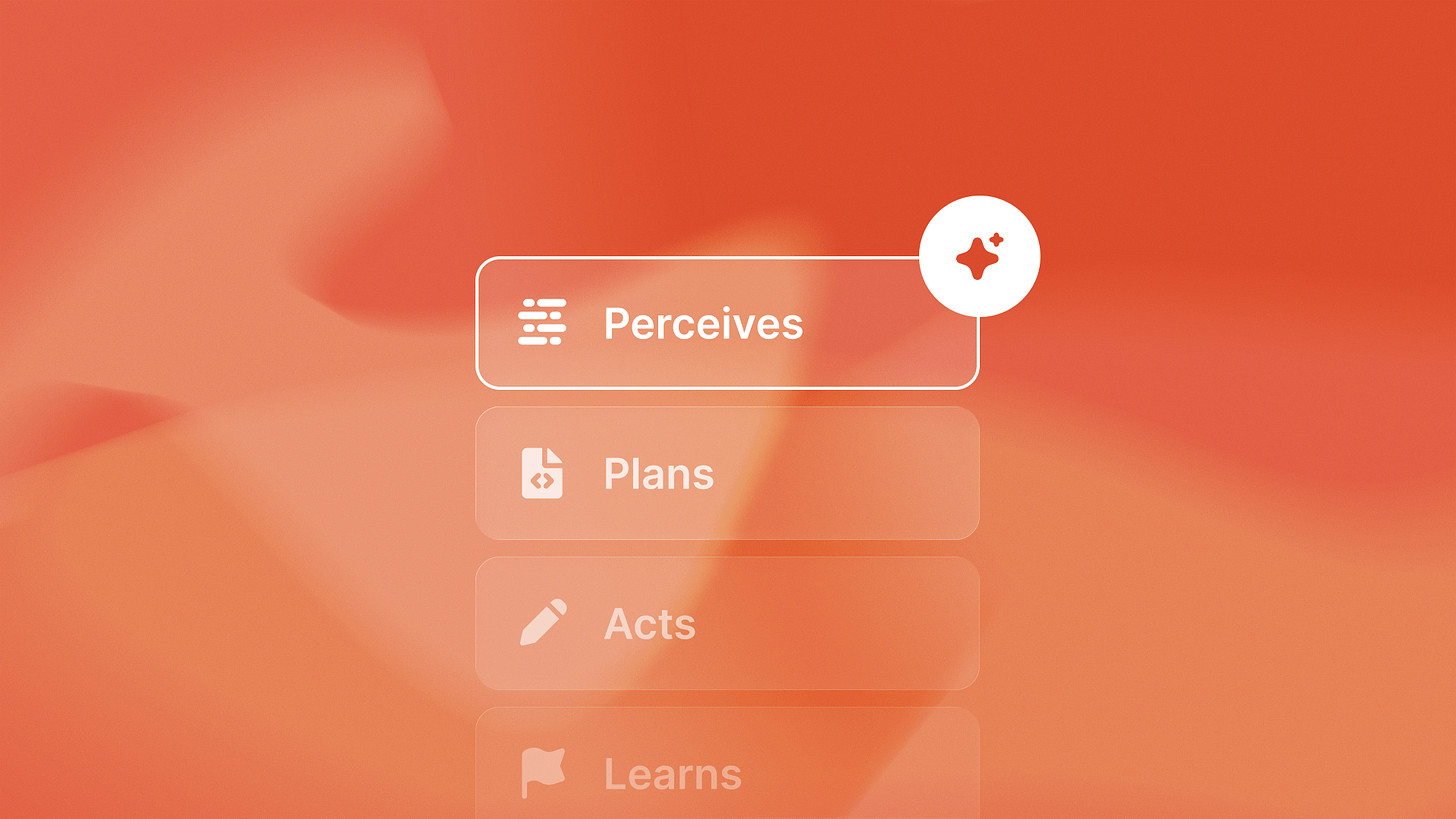

True autonomous agents require four distinct architectural layers. Most companies build one and stop.

Perception: Understanding context from data, conversations, system state. This is what 84% of deployments implement. LLMs excel at perception. They read documents, parse conversations, extract meaning from unstructured data.

Planning: Decision-making based on perceived context. This is where assistant architecture stops and agent architecture begins. Planning means the system decides what to do next without human input. It evaluates options. It chooses actions. It sequences workflows.

Action: Workflow execution without human confirmation. The system doesn’t suggest next steps. It takes them. It calls APIs. It updates databases. It triggers downstream processes.

Learning: Continuous improvement from feedback and outcomes. The system monitors results. It adjusts decision-making based on what worked and what failed. It gets better over time without human retraining.

The 16% vs 84% gap exists because building all four layers requires fundamentally different architecture than building perception alone.
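To make the four layers concrete, here is a minimal sketch built on the ticket-routing scenario from earlier. Everything in it is an assumption for illustration: the `RoutingAgent` class, the queue names, the keyword weights, and the keyword matching that stands in for an LLM call. It is not how any system described in this post is implemented. The point is only that an agent owns all four steps, where an assistant would stop after `perceive` and hand a suggestion to a human.

```python
from dataclasses import dataclass, field


@dataclass
class Ticket:
    ticket_id: str
    text: str


@dataclass
class RoutingAgent:
    # Learning state: keyword weights per queue (hypothetical starting values).
    weights: dict = field(default_factory=lambda: {
        "billing": {"invoice": 1.0, "refund": 1.0},
        "technical": {"error": 1.0, "crash": 1.0},
    })
    routed: dict = field(default_factory=dict)  # ticket_id -> queue

    def perceive(self, ticket: Ticket) -> set:
        # Perception: keyword extraction stands in for an LLM call.
        return set(ticket.text.lower().split())

    def plan(self, words: set) -> str:
        # Planning: score every queue and choose one without human input.
        scores = {
            queue: sum(w for kw, w in keywords.items() if kw in words)
            for queue, keywords in self.weights.items()
        }
        return max(scores, key=scores.get)

    def act(self, ticket: Ticket, queue: str) -> None:
        # Action: perform the routing instead of suggesting it.
        # A real system would call the ticketing API here.
        self.routed[ticket.ticket_id] = queue

    def learn(self, ticket: Ticket, correct_queue: str) -> None:
        # Learning: on a misroute, strengthen the ticket's words for the
        # queue that turned out to be correct.
        if self.routed.get(ticket.ticket_id) == correct_queue:
            return
        for word in self.perceive(ticket):
            current = self.weights[correct_queue].get(word, 0.0)
            self.weights[correct_queue][word] = current + 0.5

    def handle(self, ticket: Ticket) -> str:
        # One pass through perception, planning, and action.
        queue = self.plan(self.perceive(ticket))
        self.act(ticket, queue)
        return queue


agent = RoutingAgent()
first = Ticket("T-1", "App shows error after refund")
print(agent.handle(first))        # scores tie, so the first queue wins
agent.learn(first, "technical")   # outcome feedback: the ticket was misrouted
print(agent.handle(Ticket("T-2", "App shows error after refund")))
```

Even in a toy like this, the `learn` step needs a record of what the agent did (`routed`) and a signal about what should have happened. A suggestion-only assistant never has to store either.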

We built QA flow as a true autonomous agent for regression testing. It doesn’t suggest tests you should write. It creates test cases from Figma designs, runs them in your staging environment, finds bugs, and files detailed GitHub issues with reproduction steps. No human confirms the test runs. No human reviews before filing issues.

That’s autonomous. Perception, planning, action, learning.

Why the architecture gap creates rebuild costs

Here’s what I’m seeing across portfolios. Companies start with assistant architecture because it’s faster to build and lower risk to deploy. Perception-layer implementations using LLM APIs can ship in weeks.

Then business requirements evolve. The productivity gains from assistants create appetite for workflow replacement. Leadership asks: “Can we make this fully autonomous?”

The answer is technically yes, architecturally no.

You can’t retrofit planning, action, and learning layers onto assistant foundations. The state management is wrong. The error handling is wrong. The human-in-loop assumptions are baked into every component.

Adding autonomy requires foundational rework, not feature additions.
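A small sketch of why this is foundational: once no human is in the loop, every action needs recorded state and a defined failure path. In an assistant, a failed call simply surfaces to the person who asked. The `RunState` record, the `Status` values, and `execute_with_retry` below are hypothetical names chosen for illustration, assuming a simple retry-then-escalate policy.

```python
import time
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    PENDING = "pending"
    DONE = "done"
    ESCALATED = "escalated"


@dataclass
class RunState:
    # State the agent must keep because no human is watching the run.
    run_id: str
    status: Status = Status.PENDING
    attempts: int = 0
    errors: list = field(default_factory=list)


def execute_with_retry(state, action, max_attempts=3, backoff_s=0.0):
    """Run `action`, recording each failure; escalate when retries run out."""
    while state.attempts < max_attempts:
        state.attempts += 1
        try:
            result = action()
        except Exception as exc:
            state.errors.append(str(exc))
            if state.attempts < max_attempts:
                time.sleep(backoff_s * state.attempts)  # linear backoff
            continue
        state.status = Status.DONE
        return result
    # Retries exhausted: hand off to a human instead of failing silently.
    state.status = Status.ESCALATED
    return None


calls = {"n": 0}


def flaky_update():
    # Hypothetical downstream call that fails twice before succeeding.
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("ticketing API unavailable")
    return "routed"


state = RunState(run_id="run-1")
print(execute_with_retry(state, flaky_update), state.status, state.attempts)
```

An assistant built around a single request and response has nowhere to put `attempts`, `errors`, or an escalation status, which is one reason autonomy is hard to bolt on afterward.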

The ROI difference between assistants and agents isn’t marginal. It’s structural. Assistants multiply human productivity. Agents replace human workflows entirely. I cover the ROI comparison in detail at https://www.islandshq.xyz/blog/agentic-ai-vs-ai-assistants-why-only-autonomous-systems-deliver-420-roi.

84% of enterprise deployments are not true agents. These companies may face rebuild costs if they need autonomous capabilities. That’s expensive technical debt created by architectural decisions made in the first 90 days of AI implementation.

Multi-agent orchestration as the next pattern

Gartner tracked a 1,445% surge in multi-agent system inquiries from Q1 2024 to Q2 2025. That’s not hype. That’s recognition that complex automation requires orchestrated agent systems, not monolithic implementations.

Single agents handle bounded workflows. Multi-agent systems handle end-to-end processes that cross functional boundaries.

Example from our portfolio: compliance monitoring and contract updates at Shoreline. One agent monitors regulatory changes. Another agent maps those changes to existing contracts. A third agent generates compliant amendment language. A fourth agent routes amendments to appropriate legal review queues.

Four autonomous agents, orchestrated handoffs, no human intervention until legal review.

That’s workflow replacement, not productivity enhancement.

But multi-agent orchestration requires deliberate architectural decisions that most companies building assistants haven’t considered. How do agents communicate state? How do handoffs work when one agent completes and triggers the next? What happens when an agent in the middle of a sequence fails?

These are architectural concerns that don’t exist in assistant implementations. You can’t add them later. They’re foundational.
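One way to picture those three questions is a sequential pipeline with shared, checkpointed state. The sketch below is an assumption-laden illustration, not the Shoreline implementation: the four agent functions are stubs with made-up return values, and `PipelineState` and `run_pipeline` are hypothetical names. State passes between agents through a shared dictionary, a handoff happens when a stage returns, and a failure mid-sequence is recorded so a later run can resume from the failed stage.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

Agent = Callable[[dict], dict]


@dataclass
class PipelineState:
    data: dict = field(default_factory=dict)        # state shared across agents
    completed: list = field(default_factory=list)   # checkpoint of finished stages
    failed_at: Optional[str] = None
    error: Optional[str] = None


def run_pipeline(agents, state: PipelineState) -> PipelineState:
    """Run agents in order, skipping stages already completed."""
    state.failed_at, state.error = None, None
    for name, agent in agents:
        if name in state.completed:
            continue  # resuming: do not repeat finished work
        try:
            state.data.update(agent(state.data))  # handoff via shared state
        except Exception as exc:
            # Mid-sequence failure: record where and why, then stop.
            state.failed_at, state.error = name, str(exc)
            return state
        state.completed.append(name)
    return state


# Stub agents with hypothetical outputs, mirroring the four roles above.
def monitor_regulations(data: dict) -> dict:
    return {"change": "hypothetical rule update"}


def map_to_contracts(data: dict) -> dict:
    return {"contracts": ["contract-A", "contract-B"]}


def draft_amendment(data: dict) -> dict:
    return {"amendments": {c: f"Amendment for {data['change']}"
                           for c in data["contracts"]}}


def route_to_legal(data: dict) -> dict:
    return {"review_queue": sorted(data["amendments"])}


PIPELINE = [
    ("monitor", monitor_regulations),
    ("map", map_to_contracts),
    ("draft", draft_amendment),
    ("route", route_to_legal),
]

result = run_pipeline(PIPELINE, PipelineState())
print(result.completed, result.data["review_queue"])
```

A production system would persist `PipelineState` outside the process and likely run stages asynchronously, but even this toy needs a state contract between agents and a resume rule, neither of which exists in a suggestion-only assistant.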

If you’re planning multi-agent deployments, I covered the implementation patterns here: https://www.islandshq.xyz/blog/ai-agents-2026-predictions.

The market is shifting from single agents to orchestrated systems. The architecture needs to account for that from day one.

The competitive advantage window

Here’s the timing opportunity most technical leaders are missing.

The 1,445% surge in multi-agent inquiries shows the market is recognizing the distinction between assistants and agents. But recognition doesn’t mean implementation. Most companies still build assistant architecture because it ships faster and is easier to explain to nontechnical stakeholders.

That creates a head start window for companies building true agent foundations now.

Gartner predicts that 40% of enterprise applications will embed AI agents by the end of 2026. If that holds, autonomous agents will be standard in enterprise software within 18 months. The companies building agent architecture today will have working systems and lessons learned. The companies building assistants today will be starting rebuilds.

That’s a 12-18 month head start. In markets where AI capabilities determine competitive differentiation, that gap matters.

The reality: building assistants when you need agents creates expensive rebuilds. The opportunity cost isn’t just rebuild effort. It’s the competitive advantage lost while you’re rebuilding instead of iterating.

We’re seeing this play out across Islands portfolio companies. The ones that architected for autonomy from the start are shipping multi-agent systems. The ones that started with assistants are in architecture discussion meetings.

The window for getting architecture right the first time is open. But it’s closing as the 40% adoption deadline approaches. Companies choosing assistant patterns now are choosing rebuild costs later.