Most companies measure time savings. They should measure workflow elimination.

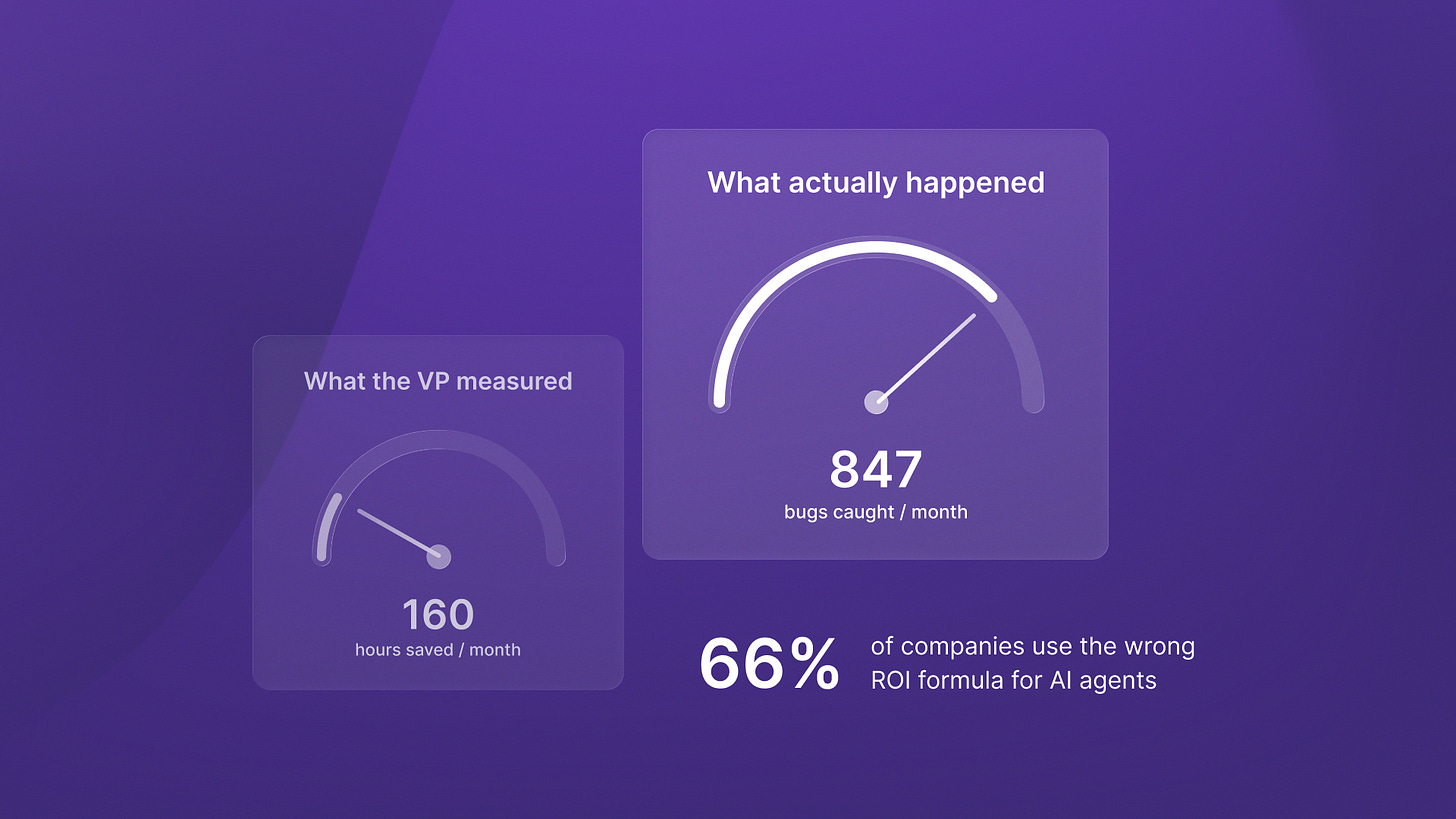

66% of companies can’t measure AI agent ROI. Not because the technology doesn’t deliver returns. Because they’re applying formulas designed for SaaS tools to autonomous systems.

Engineering leaders deploy AI agents and see quick workflow gains. Then they struggle to justify ongoing investment to their boards. The problem isn’t the technology. It’s the measurement framework.

I’ve been thinking about this a lot lately because I keep seeing the same pattern across our portfolio. CTOs build AI agents for production. They watch these agents remove whole types of work. Then they present ROI numbers that miss the real value created. They’re measuring assistants when they deployed agents.

Let me explain what I mean.

The traditional ROI formula breaks for autonomous systems

Most companies evaluate AI agents with the same ROI framework they use for productivity tools. They calculate hours saved and multiply by the average salary. They then project a payback period of 7 to 12 months. It’s the standard tech investment formula.
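The conventional calculation fits in a few lines. Below is a minimal sketch in Python. The function name, the 2,080-hour work year, and every input value are illustrative assumptions, not figures from any real deployment.

```python
def traditional_roi(hours_saved_per_month: float,
                    annual_salary: float,
                    upfront_cost: float,
                    monthly_run_cost: float,
                    work_hours_per_year: float = 2080.0) -> dict:
    """Time-savings ROI: hours saved x hourly wage, netted against run cost."""
    hourly_rate = annual_salary / work_hours_per_year  # salary converted to an hourly wage
    monthly_savings = hours_saved_per_month * hourly_rate
    net_monthly = monthly_savings - monthly_run_cost
    # Payback is undefined (infinite) if time savings never cover running costs.
    payback_months = upfront_cost / net_monthly if net_monthly > 0 else float("inf")
    return {
        "hourly_rate": hourly_rate,
        "monthly_savings": monthly_savings,
        "payback_months": payback_months,
    }


# Illustrative inputs only: 160 hours/month saved, $156k salary,
# $60k upfront build cost, $4k/month to run.
result = traditional_roi(160, 156_000, 60_000, 4_000)
print(f"payback: {result['payback_months']:.1f} months")
```

With these made-up inputs the payback lands at 7.5 months, inside the usual band. Every term in the formula is a unit of time saved. Nothing in it can register a workflow that stops existing or an error that never happens.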

But autonomous systems don’t work like productivity tools. They don’t make existing work faster. They eliminate entire workflow categories.

Here’s the critical distinction: assistants create marginal productivity improvements measured in hours saved. Agents eliminate workflows measured in FTE replacement and new capability creation.

When you measure AI agent value through time-savings calculations, you’re looking at the wrong outcome at the wrong time horizon. You miss 60-80% of the actual returns.

Last month I was talking to a Series B fintech CTO who deployed an autonomous compliance monitoring agent. His board wanted quarterly ROI projections. He calculated hours saved on manual compliance checks and presented a 14-month payback. The board pushed back on the investment.

What he missed: the agent didn’t just speed up compliance checks. It eliminated three categories of regulatory violations that his team couldn’t have caught manually. That’s not productivity improvement. That’s risk elimination. The ROI framework needed to measure what became possible, not what became faster.

Organizations that measure agentic AI properly project an average ROI of 171%, and US enterprises forecast 192%. Those aren’t time-savings numbers. They’re business model transformation numbers.
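These figures likely use the standard ROI definition, although the underlying surveys may define it differently. Under that definition, with $B$ as total benefit and $C$ as total cost over the same horizon, the percentages translate into benefit per dollar spent:

```latex
\mathrm{ROI} = \frac{B - C}{C}, \qquad
\mathrm{ROI} = 171\% \;\Rightarrow\; B = 2.71\,C, \qquad
\mathrm{ROI} = 192\% \;\Rightarrow\; B = 2.92\,C
```

Put plainly, a 171% ROI means roughly $2.71 of benefit for every $1 spent.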

The measurement shift: from hours saved to workflows eliminated

74% of executives report achieving ROI within the first year from AI agent deployments. But only when they track workflow elimination rather than time savings.

This isn’t semantic. It’s a fundamental shift in what you measure.

Traditional productivity metrics ask: “How much faster did this task become?” Agent metrics ask: “What workflows disappeared entirely?”

I was reviewing QA flow deployment data last week and something stood out. The platform’s value isn’t the time it saves QA engineers on manual testing. It detects 847 bugs monthly that would never have been caught through manual processes. Those bugs would have shipped to production, created customer issues, and required emergency patches.

That’s not productivity improvement. That’s capability creation. The engineering teams using QA flow ship faster with higher quality. They’re not doing the same work more efficiently. They’re doing fundamentally different work.

The ROI framework for agents needs different metrics:

- FTE equivalents replaced (not hours saved)
- Decision latency reduction (end-to-end workflow time, not task time)
- Error rate elimination (outcomes prevented, not efficiency gained)
- New capabilities enabled (what became possible that wasn’t before)
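One way to make these four metrics concrete is to record each one and price it separately, so a board can see which category drives the value. The sketch below is a hypothetical schema. The field names and unit values are assumptions: a fully loaded FTE cost, a dollar value per day of latency removed, and a cost per escaped error. The 847 monthly bug count is borrowed from the QA example above, and every other input is invented. New capabilities stay a list because they often resist a single dollar figure.

```python
from dataclasses import dataclass, field


@dataclass
class AgentOutcomes:
    """Monthly outcome metrics for one agent deployment (hypothetical schema)."""
    fte_equivalents_replaced: float   # headcount-equivalent work the agent now owns
    latency_days_removed: float       # end-to-end workflow time removed per month
    errors_prevented: int             # outcomes that would otherwise have escaped
    new_capabilities: list[str] = field(default_factory=list)


@dataclass
class UnitValues:
    """Assumed dollar values used to price each metric."""
    loaded_fte_cost_per_month: float
    value_per_latency_day: float
    cost_per_escaped_error: float


def monthly_value(o: AgentOutcomes, u: UnitValues) -> dict[str, float]:
    """Price each measurable category separately instead of summing hours."""
    return {
        "fte_replacement": o.fte_equivalents_replaced * u.loaded_fte_cost_per_month,
        "decision_latency": o.latency_days_removed * u.value_per_latency_day,
        "errors_prevented": o.errors_prevented * u.cost_per_escaped_error,
    }


outcomes = AgentOutcomes(
    fte_equivalents_replaced=2.0,
    latency_days_removed=10.0,
    errors_prevented=847,
    new_capabilities=["pre-production detection of bugs manual QA would miss"],
)
units = UnitValues(
    loaded_fte_cost_per_month=15_000.0,
    value_per_latency_day=1_000.0,
    cost_per_escaped_error=200.0,
)
breakdown = monthly_value(outcomes, units)
print(breakdown, sum(breakdown.values()), outcomes.new_capabilities)
```

Keeping the categories apart is deliberate. It shows when prevented errors, rather than replaced effort, carry most of the value.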

When you measure these outcomes, you see why conventional 12-month payback calculations systematically undervalue autonomous systems. The returns compound over time through data accumulation, process refinement, and expanding autonomy.

Why early ROI measurements miss most of the value

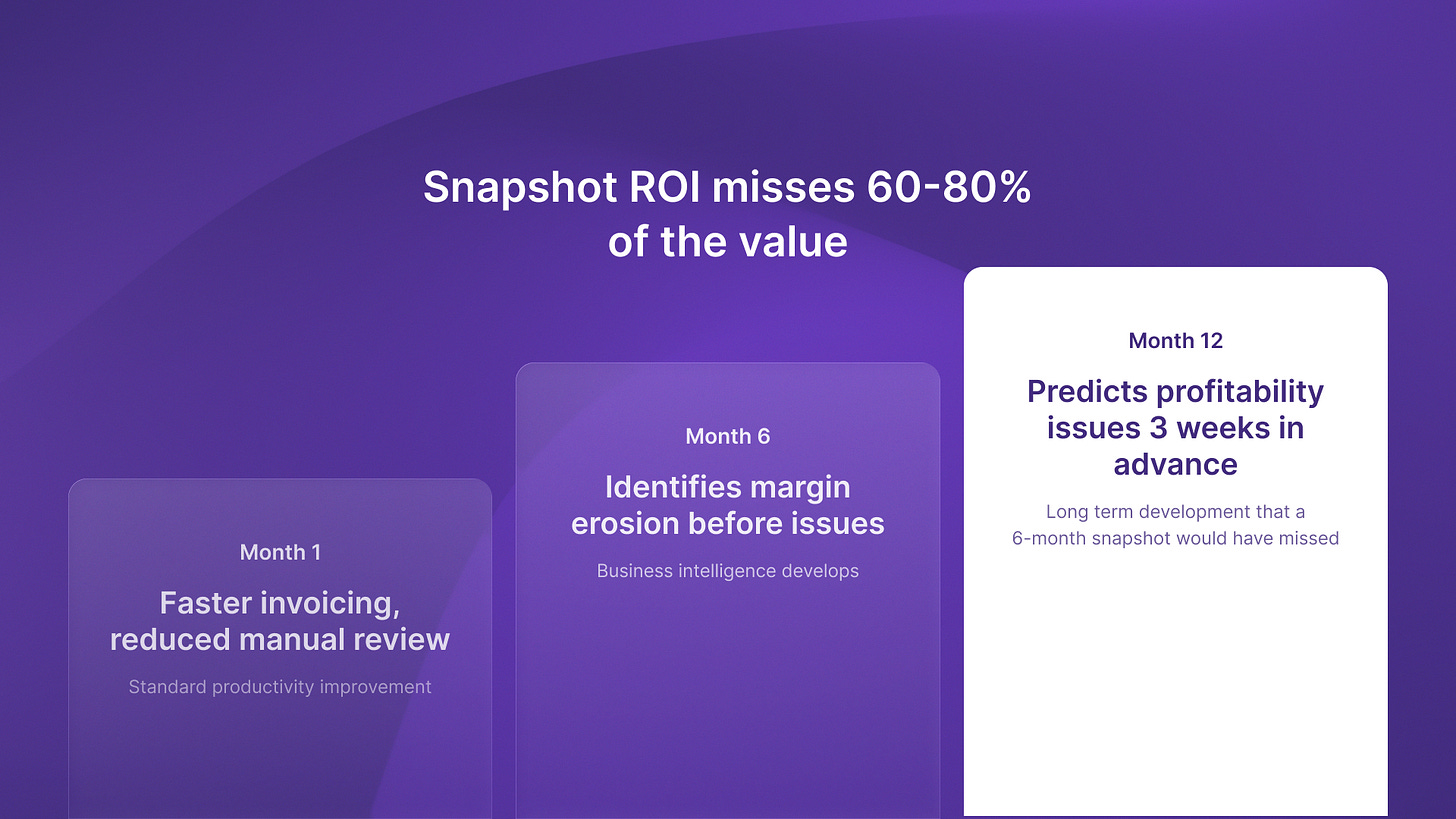

Here’s what most companies get wrong: They measure AI agent ROI at month six or month twelve. Then they make investment decisions based on those snapshots.

Production AI agents improve as they accumulate domain-specific data and refine their decision-making. Early measurements capture the initial workflow automation but miss the value that grows afterward, as agents learn to handle edge cases, cut error rates, and expand into adjacent processes.

The companies projecting 171-192% ROI aren’t measuring quarterly snapshots. They’re measuring 24-36 month horizons.
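A toy model shows why the horizon matters. Assume, purely for illustration, that an agent's monthly benefit starts low and grows by a fixed percentage each month as it learns, while its running cost stays flat. The starting benefit, growth rate, and costs below are invented, and real value curves are rarely this smooth.

```python
def cumulative_roi(months: int,
                   upfront_cost: float,
                   monthly_cost: float,
                   initial_monthly_benefit: float,
                   monthly_growth: float) -> float:
    """Cumulative ROI, (benefit - cost) / cost, after `months` of operation."""
    total_benefit = 0.0
    benefit = initial_monthly_benefit
    for _ in range(months):
        total_benefit += benefit
        benefit *= 1.0 + monthly_growth  # assumed: the agent gets better every month
    total_cost = upfront_cost + monthly_cost * months
    return (total_benefit - total_cost) / total_cost


for horizon in (6, 12, 24, 36):
    roi = cumulative_roi(horizon, upfront_cost=100_000, monthly_cost=5_000,
                         initial_monthly_benefit=10_000, monthly_growth=0.06)
    print(f"month {horizon:>2}: ROI {roi:+.0%}")
```

Under these assumptions the 6-month snapshot is deeply negative and the 12-month figure is roughly break-even. The 24- and 36-month figures are strongly positive, even though nothing about the agent changed. Only the measurement window did.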

I saw this clearly at Timecapsule when we deployed autonomous project profitability monitoring. Month 1 ROI looked modest: faster invoicing, reduced manual timesheet review. Standard productivity gains.

Month 6 told a different story. The agent had learned project patterns, identified margin erosion before it became critical, and enabled proactive scope adjustments. The value wasn’t faster admin work. It was business intelligence that didn’t exist before.

By month 12, the system was predicting project profitability issues three weeks in advance with 89% accuracy. That capability took time to develop. A 6-month ROI calculation would have missed it entirely.

This is why the measurement framework matters as much as the technology. If you only track short-term productivity gains, you will underinvest in systems that need 12 to 18 months to show full value.

Translating technical capability into business model metrics

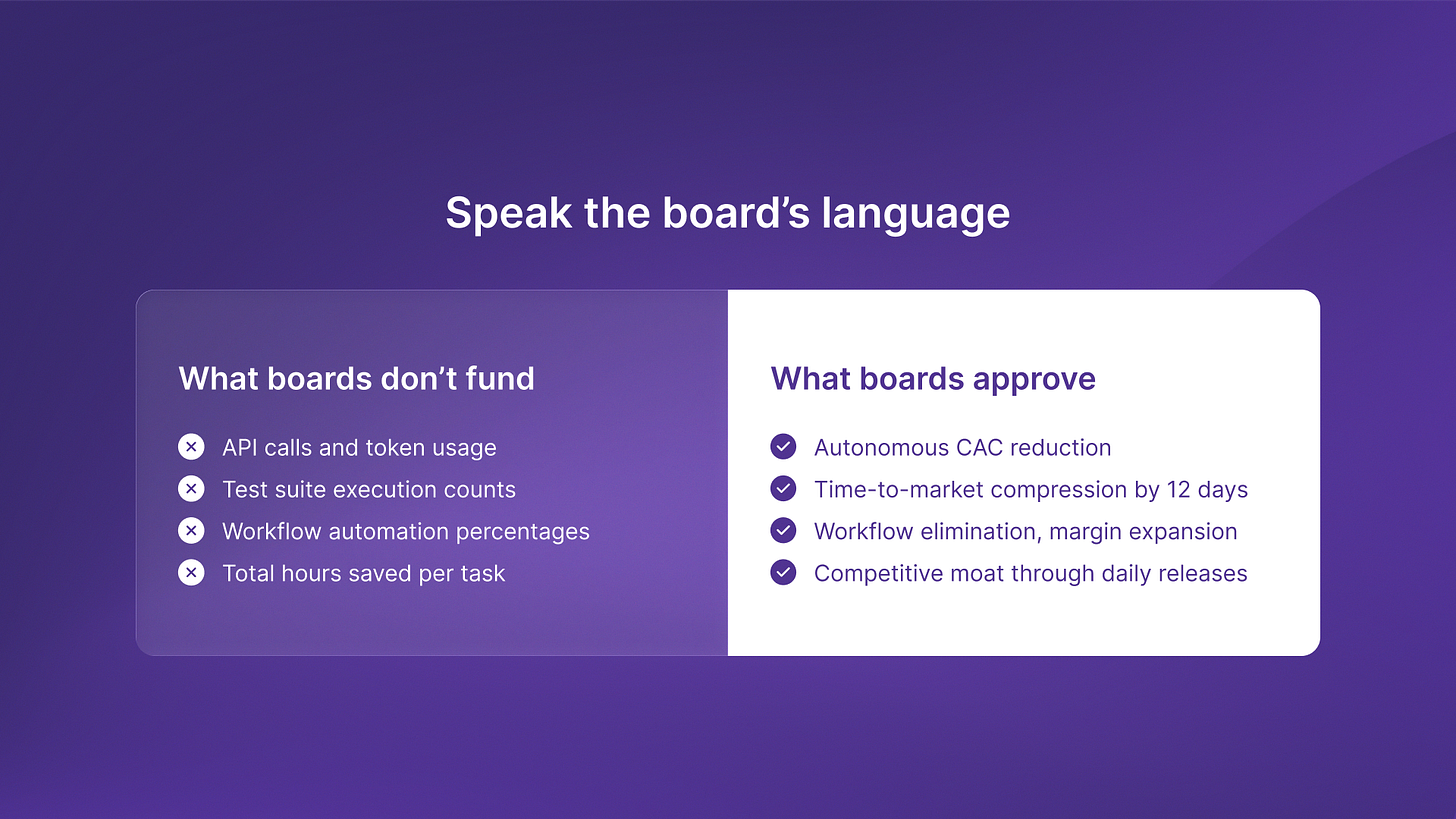

Here’s where most engineering leaders lose their boards: they present technical metrics instead of business outcomes.

API calls, token usage, test suite execution, workflow automation percentages. All valid technical measurements. None of them link to what boards care about. Boards care about customer acquisition cost. They care about time to market. They care about higher gross margins. They care about building a competitive moat.
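Two of these board metrics have standard definitions, so once the inputs are known the translation is mechanical. CAC is sales and marketing spend divided by new customers acquired. Gross margin is revenue minus cost of revenue, divided by revenue. The sketch below compares a hypothetical quarter before and after an agent deployment. All figures are invented placeholders.

```python
def cac(sales_marketing_spend: float, new_customers: int) -> float:
    """Customer acquisition cost: spend per newly acquired customer."""
    return sales_marketing_spend / new_customers


def gross_margin(revenue: float, cost_of_revenue: float) -> float:
    """Share of revenue left after the direct costs of delivering it."""
    return (revenue - cost_of_revenue) / revenue


# Hypothetical quarters before and after deploying an agent.
before = {"cac": cac(500_000, 100), "gm": gross_margin(2_000_000, 800_000)}
after = {"cac": cac(450_000, 120), "gm": gross_margin(2_200_000, 770_000)}

print(f"CAC: ${before['cac']:,.0f} -> ${after['cac']:,.0f}")
print(f"Gross margin: {before['gm']:.0%} -> {after['gm']:.0%}")
```

A "CAC fell from $5,000 to $3,750" line speaks the board's language in a way "tokens processed" never will.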

The measurement framework must translate technical capability into business model transformation.

I’ve been helping portfolio companies reframe their AI agent ROI presentations, and the pattern is consistent. When engineering leaders link agent deployment to lower CAC through autonomous sales orchestration, boards approve continued investment.

When they present time savings and productivity multipliers, boards question the expense.

Last quarter I watched an engineering VP present AI agent ROI to his board. He led with “We automated 2,400 test suites monthly, saving approximately 160 engineering hours.” The board asked why they needed expensive AI to save 160 hours.

The reframe: “We deployed autonomous testing that catches 847 bugs monthly before they reach production. This helped us shorten our release cycle from bi-weekly to daily, cutting time-to-market by 12 days per feature. Our competitors ship quarterly. We ship daily. That’s the moat.”

Same technology. Different measurement framework. Different board response.

If you’re struggling to justify AI agent investment, check your metrics. Are you measuring what became faster, or what became possible? Are you tracking hours saved, or workflows eliminated? Are you presenting technical capabilities, or business model transformation?

The companies projecting 192% ROI have figured out this measurement shift. They’re not optimizing existing workflows. They’re building entirely new capabilities that competitors can’t easily replicate.

The competitive advantage timeline

Companies that track AI agent ROI well today gain a competitive edge: 18 to 24 months of accumulated learning and refinement, which is what creates a defensible moat.

Autonomous systems improve through data accumulation and process refinement. The agent you deploy today won’t be the agent you have in 18 months. It will have learned edge cases, refined its decision-making, and expanded into adjacent workflows.

That learning curve is your moat. Competitors who start later will be 18-24 months behind, even if they deploy identical technology.
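The timing argument can be made concrete with a toy learning curve. Assume, hypothetically, that an agent's error rate decays exponentially toward a floor as it accumulates data, and that a competitor deploys the identical system 18 months later. The curve shape and its parameters are illustrative, not measured.

```python
def error_rate(months_in_production: float,
               initial: float = 0.20,
               floor: float = 0.02,
               half_life_months: float = 6.0) -> float:
    """Error rate that halves its distance to `floor` every `half_life_months`."""
    if months_in_production <= 0:
        return initial  # not yet deployed, or deployed today: no learning yet
    decay = 0.5 ** (months_in_production / half_life_months)
    return floor + (initial - floor) * decay


START_GAP = 18  # assumed: competitor starts 18 months later with identical technology
for month in (12, 24, 36):
    ours = error_rate(month)
    theirs = error_rate(month - START_GAP)
    print(f"month {month}: ours {ours:.1%}, competitor {theirs:.1%}")
```

In this model the competitor simply replays the same curve 18 months late. The gap narrows only as both systems approach the same floor, and throughout that period the earlier adopter is operating with fewer errors.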

I wrote about this in more detail in our analysis of why only autonomous systems deliver meaningful ROI. The architecture choice between assistants and agents shapes what you build and what results you can measure and share.

The companies winning with AI agents aren’t the ones with the best technology. They’re the ones measuring the right outcomes at the right time horizons. They understand that workflow elimination compounds differently than productivity improvement. They connect technical capability to business model metrics their boards actually care about.

And they’re building competitive advantages that conventional ROI formulas completely miss.