How to build an AI business case that actually gets approved

One in four companies sees negative ROI on AI investments despite spending billions. That’s not a rounding error. That’s a systematic failure to build business cases that survive contact with production reality.

I’ve been thinking about something you won’t see in vendor pitch decks. It’s the gap between approved AI projects and AI projects that deliver value. In 2025, 42% of companies abandoned most of their AI initiatives (AI Statistics 2025 Industry Analysis). That’s up from 17% the year before. The jump isn’t because AI stopped working. It’s because companies are discovering that demo-quality AI and production-quality AI have completely different economics.

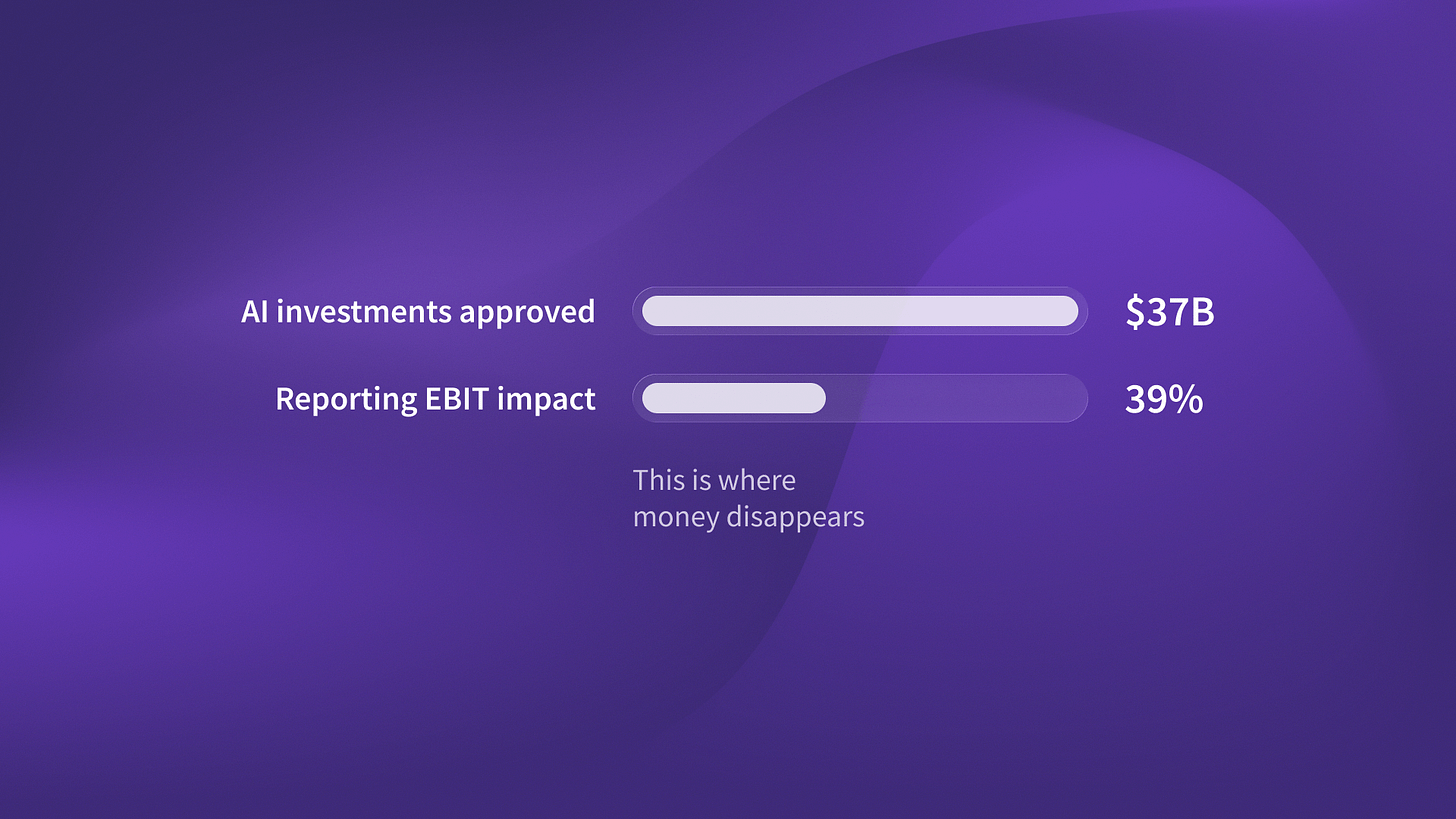

Here’s what’s creating the crisis. Companies spent $37 billion on generative AI in 2025, up from $11.5 billion in 2024 (Menlo Ventures, State of Generative AI 2025). But when you dig into the outcomes, only three out of four see positive ROI. That means 25% are losing money on AI investments they formally approved and funded.

The most interesting part: 72% of organizations formally measure Gen AI ROI, but only 39% report an EBIT impact at the enterprise level (McKinsey, State of AI 2025). That 33-point gap reveals something critical. Most companies are tracking vanity metrics instead of real business outcomes. They measure model accuracy or deployment speed.

Meanwhile, the CFO asks if this actually affects the bottom line.

Why traditional business cases fail for AI agents

I was talking to our team at QA Flow last week about what made their business case work. They’re running autonomous testing that generates and executes test cases from Figma designs. The conversation revealed something that applies across every AI agent deployment we’ve seen.

Traditional software ROI is straightforward. You calculate implementation cost, estimate productivity gains, project those over three years, and present the NPV. For AI agents, that model breaks completely.
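To make the traditional model concrete, here is a minimal sketch of that three-year NPV calculation. Every figure in it (implementation cost, annual gain, discount rate) is a hypothetical placeholder, not a number from any real deployment.

```python
def npv(rate, cash_flows):
    """Net present value. cash_flows[0] is the upfront (year 0) amount."""
    return sum(cf / (1 + rate) ** year for year, cf in enumerate(cash_flows))


# Hypothetical inputs, for illustration only.
implementation_cost = 500_000       # one-time cost paid in year 0
annual_productivity_gain = 250_000  # estimated gain in each of years 1-3
discount_rate = 0.10                # assumed cost of capital

# Year 0 is the outflow; years 1-3 are the projected gains.
cash_flows = [-implementation_cost] + [annual_productivity_gain] * 3
project_npv = npv(discount_rate, cash_flows)

print(f"3-year NPV: ${project_npv:,.0f}")
```

Notice what this model takes for granted: a single upfront cost and a steady stream of gains. Usage-based LLM costs and ongoing maintenance do not fit that shape.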

Autonomous agents replace entire workflows rather than just enhancing productivity. That changes everything about the cost structure. When we built the business case for QA Flow’s autonomous testing platform, we did not base ROI on hours saved. The pitch was: “We eliminate an entire job category and improve quality outcomes.”

That’s a different conversation with finance. You’re not asking for budget to make existing teams faster. You’re asking for budget to fundamentally restructure how work gets done. The approval thresholds are higher. The scrutiny is deeper. The failure consequences are more visible.

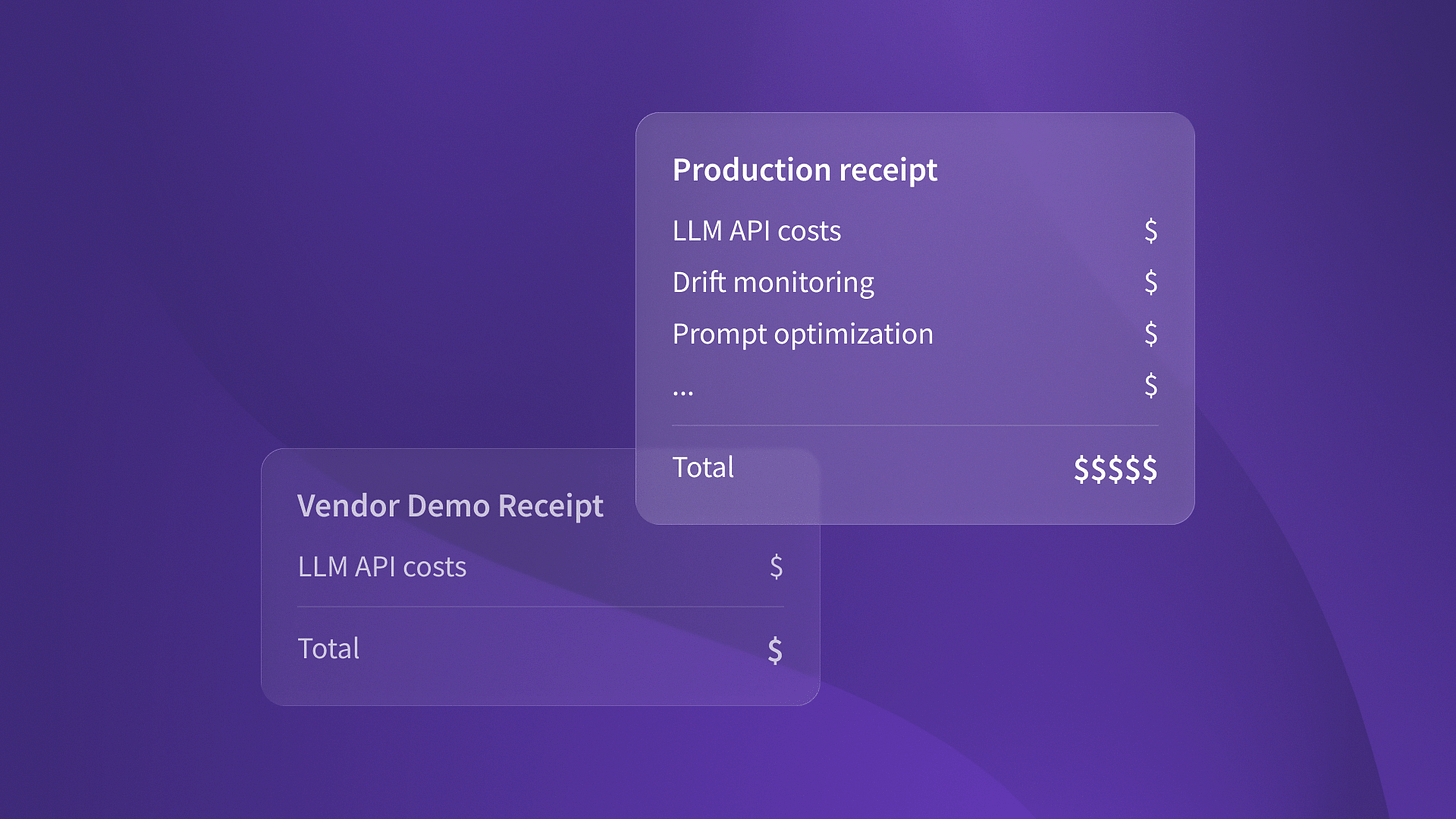

Here’s what the real cost structure looks like. Initial build includes architecture design, model selection and fine-tuning, integration with existing systems, and testing infrastructure. That’s typically 6-12 months of engineering time. Then you have ongoing LLM costs that scale with usage. Then maintenance overhead for model updates, drift monitoring, and performance optimization.

None of that shows up in vendor demos. But all of it shows up in your P&L.

The framework that survives CFO scrutiny

Let me share what actually works when you’re building the business case. This is based on several portfolio companies that got AI agent investments approved, deployed them to production, and reported a positive impact on EBIT.

Start with workflow replacement economics. Identify the complete workflow the agent will replace. Map every human touchpoint, every handoff, every quality check. Calculate the fully loaded cost of that workflow today. Include salary, benefits, tools, management overhead, error correction, and opportunity cost of delays.
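As a rough sketch of that baseline calculation, the snippet below adds up the cost components listed above for a hypothetical four-person workflow. The class name, field names, and all dollar amounts are assumptions for illustration. Substitute your own figures.

```python
from dataclasses import dataclass


@dataclass
class WorkflowCost:
    """Annual cost components of an existing human workflow (all hypothetical)."""
    headcount: int
    salary_per_person: float
    benefits_per_person: float
    tools_per_person: float
    management_overhead: float      # shared across the team
    error_correction: float         # rework caused by mistakes
    delay_opportunity_cost: float   # value lost to slow turnaround

    def fully_loaded_annual_cost(self) -> float:
        per_person = (
            self.salary_per_person
            + self.benefits_per_person
            + self.tools_per_person
        )
        shared = (
            self.management_overhead
            + self.error_correction
            + self.delay_opportunity_cost
        )
        return self.headcount * per_person + shared


# Hypothetical example workflow.
current_workflow = WorkflowCost(
    headcount=4,
    salary_per_person=90_000,
    benefits_per_person=27_000,
    tools_per_person=5_000,
    management_overhead=40_000,
    error_correction=60_000,
    delay_opportunity_cost=50_000,
)

baseline = current_workflow.fully_loaded_annual_cost()
print(f"Fully loaded annual cost: ${baseline:,.0f}")
```

The shared lines (error correction, delay cost) are the ones most often left out, and they are usually the hardest to estimate. Write down how you derived each one so finance can challenge the inputs instead of the conclusion.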

For Ingage, that meant mapping the entire LinkedIn engagement workflow: profile research, content ideation, engagement timing, response monitoring, and relationship tracking. The human cost wasn’t just the hours spent. It was the inconsistency, missed opportunities, and the cost of sales reps doing manual outreach instead of closing deals.

Next, build the honest cost model for the AI replacement. Include architectural rebuild if you’re starting from scratch. Include ongoing LLM costs with realistic usage projections. Include maintenance overhead at 20-30% of build cost annually. Include failure scenarios and mitigation costs.
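Here is one way to sketch that cost model, using the 20-30% maintenance range from above to produce a low and a high estimate. The build cost, monthly LLM spend, and failure contingency are hypothetical placeholders, and the function name is my own.

```python
def annual_run_cost(build_cost, monthly_llm_cost, maintenance_rate, contingency):
    """Yearly cost of operating the agent after it is built."""
    llm = monthly_llm_cost * 12
    maintenance = build_cost * maintenance_rate
    return llm + maintenance + contingency


# Hypothetical inputs, for illustration only.
build_cost = 600_000       # architecture, fine-tuning, integration, testing
monthly_llm_cost = 8_000   # projected usage-based spend
contingency = 30_000       # yearly reserve for failure scenarios and mitigation

# Maintenance at 20% (low case) and 30% (high case) of build cost per year.
low = annual_run_cost(build_cost, monthly_llm_cost, 0.20, contingency)
high = annual_run_cost(build_cost, monthly_llm_cost, 0.30, contingency)

# Three-year total cost of ownership: build once, run for three years.
tco_low = build_cost + 3 * low
tco_high = build_cost + 3 * high

print(f"Annual run cost: ${low:,.0f} to ${high:,.0f}")
print(f"3-year TCO:      ${tco_low:,.0f} to ${tco_high:,.0f}")
```

Presenting a range instead of a single number is a deliberate choice here. It shows finance that you know which inputs are uncertain.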

This is where most business cases fall apart. They use vendor pricing for LLM costs without accounting for optimization cycles. They ignore architectural decisions that create technical debt. They assume maintenance will be minimal because “it’s just API calls.”

I wrote about the real economics of AI agents based on actual production deployments. The hidden costs add up fast. Model drift monitoring. Prompt optimization cycles. Edge case handling. Integration maintenance as upstream systems change. Budget for these or watch your ROI evaporate.

What makes autonomous agents different

Here’s something I noticed while working with Islands clients on their AI strategies. The fundamental difference between AI assistants and autonomous agents isn’t technical capability. It’s decision rights.

Assistants enhance human decision-making. Agents replace it. That architectural choice determines your entire economic model. I wrote a detailed comparison of agentic AI vs AI assistants. It explains why the ROI gap is so dramatic.

When you’re building the business case, this distinction matters enormously. AI assistants have incremental ROI. Faster email drafting. Better insights surfacing. Improved code suggestions. You’re calculating productivity multipliers on existing headcount.

Autonomous agents have step-function ROI. They don’t make the workflow 30% faster. They eliminate it entirely and replace it with something that runs continuously without human intervention. That’s why the business case either justifies itself immediately or doesn’t justify itself at all.
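A back-of-the-envelope comparison shows the difference in shape. This sketch makes a simplifying assumption that a 30% speedup is worth 30% of the workflow’s cost, and every dollar figure is hypothetical. It also leaves out the agent’s one-time build cost, which belongs in the full model.

```python
# Hypothetical inputs, for illustration only.
workflow_annual_cost = 638_000   # fully loaded cost of the human workflow
assistant_gain = 0.30            # assumed productivity gain from an assistant
assistant_annual_cost = 50_000   # assumed licenses and support
agent_annual_run_cost = 246_000  # assumed LLM usage, maintenance, contingency

# Assistant: a productivity multiplier applied to existing headcount cost.
assistant_net_benefit = workflow_annual_cost * assistant_gain - assistant_annual_cost

# Agent: the whole workflow cost goes away, replaced by the agent's run cost.
agent_net_benefit = workflow_annual_cost - agent_annual_run_cost

print(f"Assistant net annual benefit: ${assistant_net_benefit:,.0f}")
print(f"Agent net annual benefit:     ${agent_net_benefit:,.0f}")

# The agent case flips sign once run cost exceeds the workflow it replaces.
agent_pays_off = agent_annual_run_cost < workflow_annual_cost
print(f"Agent pays off on run cost alone: {agent_pays_off}")
```

The assistant number moves smoothly as the assumed gain changes. The agent number depends on one comparison: whether running the agent costs less than the workflow it replaces.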

The approval conversation is different too. For assistants, you’re asking to enhance existing operations. For agents, you’re asking to restructure them. That means longer approval cycles, more stakeholder alignment, and higher executive visibility. But it also means bigger impact when you succeed.

Getting to Yes: the risk mitigation section

Every business case that survives board scrutiny includes explicit risk mitigation. Not because boards love process. Because 42% abandonment rates make them skeptical of AI investments that don’t account for failure modes.

The risks to address: an architectural mismatch that forces costly rebuilds, LLM costs that balloon as you scale, performance that degrades over time, integrations that become brittle as upstream systems change, and organizational resistance to autonomous decision-making.

For each risk, provide specific mitigation. Architectural mismatch: start with a pilot deployment that validates the approach before the full build. LLM costs: implement cost monitoring and optimization cycles from day one. Performance degradation: build drift detection and model update processes into operations. Integration brittleness: use abstractions that isolate agent logic from integration details. Organizational resistance: involve affected teams in design and demonstrate value before broad rollout.
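For the LLM cost mitigation, “monitoring from day one” can start as something very small. The sketch below projects month-end spend from daily totals and flags it against a budget. The function name, the 80% warning threshold, and the sample figures are all assumptions. In practice the daily totals would come from your provider’s billing export.

```python
def check_llm_spend(daily_costs, monthly_budget, days_in_month=30, alert_ratio=0.8):
    """Project month-end LLM spend from daily totals and classify it.

    Returns (projected_spend, status), where status is "ok", "warning",
    or "over_budget". A simple run-rate projection, not a forecast model.
    """
    if not daily_costs:
        raise ValueError("daily_costs must contain at least one day of spend")

    average_daily = sum(daily_costs) / len(daily_costs)
    projected = average_daily * days_in_month

    if projected > monthly_budget:
        status = "over_budget"
    elif projected > alert_ratio * monthly_budget:
        status = "warning"
    else:
        status = "ok"
    return projected, status


# Hypothetical first five days of spend against a hypothetical budget.
daily_costs = [210, 250, 240, 300, 320]
projected, status = check_llm_spend(daily_costs, monthly_budget=8_000)

print(f"Projected monthly spend: ${projected:,.0f} ({status})")
```

A run-rate check like this will not catch everything, but it puts a number in front of the team early enough to trigger an optimization cycle before the invoice does.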

When Timecapsule built their real-time profitability monitoring, they included explicit risk mitigation for data quality issues and adoption resistance. That’s what made the business case credible. They acknowledged the ways it could fail and showed how they’d prevent or recover from each failure mode.

The competitive advantage hidden in the abandonment rate

Here’s the opportunity that most technical leaders are missing. The 42% abandonment rate is creating board-level skepticism about AI investments. That skepticism is your advantage if you show up with honest economics and real risk mitigation.

Your competitors are still building business cases based on vendor demos and optimistic projections. They’re ignoring the 33-point gap between measuring ROI and reporting EBIT impact. They’re treating AI agents like any other software investment.

You can show up with a business case that covers production costs, includes clear risk mitigation, and explains why autonomous agents have different economics than AI assistants. That’s how you get approval while competitors are stuck explaining why their last AI project didn’t deliver.

The companies building rigorous business cases now will deploy autonomous agents while everyone else is still justifying AI assistants. If you want a place to start, I made a 30-day playbook for building your first AI agent. It covers perception, reasoning, action, and learning.

The gap between hype and reality is temporary. Technical leaders who can explain what production AI costs and delivers will get these investments approved. That’s your window.